Modern biological research is witnessing a profound transformation as the scientific community shifts its focus from the static blueprint of the genome to the dynamic, functional reality of the proteome. While the genetic code provides the fundamental instructions for life, it is the proteins themselves that serve as the labor force, executing the complex chemical reactions and structural tasks necessary for survival. This transition is highlighted in a comprehensive review within the Annual Review of Analytical Chemistry, which outlines a movement toward high-resolution, single-molecule detection. By moving beyond bulk analysis, researchers are finally gaining the ability to observe the molecular machinery in its native state, a necessity for understanding the nuances of human health. Because proteins act as the primary drivers of biological processes, this evolution in proteomics is essential for identifying the precise mechanisms of disease progression and developing the next generation of targeted medical interventions.

For decades, molecular biology was largely dominated by genomics, primarily because DNA is a stable template that can be readily manipulated and amplified using established methods like the polymerase chain reaction (PCR). In contrast, the proteome is enormously diverse and constantly changing, and it is built from an alphabet of twenty amino acids rather than the four chemical bases found in DNA. Unlike genetic material, proteins cannot be amplified, which has historically made them difficult to analyze, especially when present in low concentrations. The current shift toward proteomics acknowledges that the genome alone is insufficient to explain the full complexity of life, as the actual physiological state of a cell is determined by proteins that respond in real time to internal and external stimuli. This realization has catalyzed the development of new tools capable of capturing the molecular interactions that define life at its most fundamental level.

Overcoming the Limitations of Traditional Analysis

The Complexity of Proteoforms and the Mass Spectrometry Gap

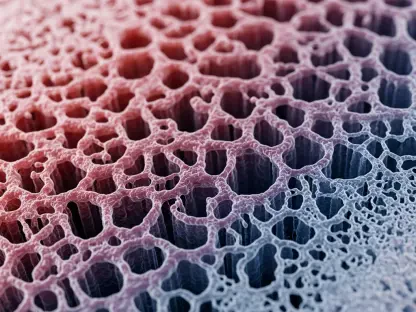

A critical revelation in contemporary biology is that a single gene does not simply produce a single protein; rather, it serves as a template for a vast array of variations known as proteoforms. These variations arise through processes such as alternative splicing and post-translational modifications, which can drastically alter a protein’s function and localization within the cell. These subtle molecular differences are frequently implicated in complex conditions, including various forms of cancer and neurodegenerative disorders like Alzheimer’s disease. Unfortunately, traditional analytical methods often miss these crucial details. While mass spectrometry has served as the gold standard for protein identification for many years, its most widely used bottom-up workflows rely on breaking proteins into peptides. Once a protein is fragmented, the link between modifications that sat on different parts of the same molecule is lost, which can obscure the original identity and specific modifications of the full-length molecule and leave significant gaps in our understanding.
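
The loss of information described above can be illustrated with a small Python sketch. It is a toy model, not a description of any real instrument or dataset: the protein, its two modification sites, and the molecule counts are all assumptions made up for illustration. It shows two different proteoform mixtures that become indistinguishable once only per-peptide modification levels are measured.

```python
from collections import Counter

# Hypothetical protein with two modification sites, A and B, that land on
# different peptides after digestion. A proteoform is the pair
# (A_modified, B_modified). All counts are invented for illustration.
mixture_1 = Counter({(True, True): 50, (False, False): 50})
mixture_2 = Counter({(True, False): 50, (False, True): 50})


def peptide_level_view(mixture):
    """Return the modified fraction at each site.

    This is what remains once the link between sites on the same
    molecule has been lost through fragmentation.
    """
    total = sum(mixture.values())
    site_a = sum(n for (a, _), n in mixture.items() if a) / total
    site_b = sum(n for (_, b), n in mixture.items() if b) / total
    return site_a, site_b


# Both mixtures report the same per-site fractions...
print(peptide_level_view(mixture_1))
print(peptide_level_view(mixture_2))
# ...even though the underlying proteoform populations differ.
print(mixture_1 == mixture_2)
```

A method that reads intact molecules one at a time would record the pairs directly, which is the information the two `Counter` objects hold and the per-site view discards.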

The inherent limitations of mass spectrometry become particularly evident when researchers attempt to analyze low-abundance proteins that may be early indicators of disease. Mass spectrometry is a bulk analysis technique that often lacks the sensitivity required to detect the “needle in the haystack” molecules that exist at the edges of biological detection. Furthermore, the complexity of modern samples often results in ion suppression, where more abundant proteins drown out the signals of rarer, more informative species. This creates a significant blind spot in molecular biology, preventing scientists from fully mapping the interactions that lead to cellular dysfunction. By failing to provide complete sequence coverage for every protein in a sample, traditional methods leave researchers with a fragmented view of the proteome, underscoring the urgent need for single-molecule technologies that can provide a comprehensive and uninterrupted look at protein diversity and structure.

Innovation in Fluorescence-Based Detection

To address these analytical shortcomings, several pioneering platforms are now using fluorescence-based technologies to achieve single-molecule sensitivity. A prominent example is Quantum-Si, which has developed semiconductor chips designed to monitor how specific “recognizer” molecules bind to the ends of protein chains. This approach allows proteins to be identified by observing the characteristic kinetic signatures of these interactions in real time. The technology has progressed to the point where it can recognize the majority of amino acids, covering a large portion of the human proteome. By shifting the analytical focus from large batches of blended cellular material to individual molecules, these light-based systems offer a level of resolution that traditional bulk methods cannot match, providing a clearer picture of molecular behavior.

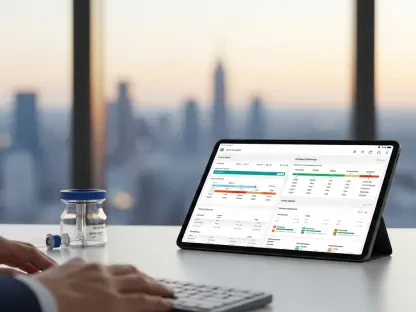

Beyond mere identification, these fluorescence-based systems are enabling researchers to observe the dynamic binding events that define protein function. By utilizing advanced optics and microfluidic chips, these platforms can track the presence of specific modifications on a single protein strand, such as phosphorylation or glycosylation, which are often the triggers for biological activity. This capability is particularly transformative for drug discovery, as it allows scientists to see exactly how a therapeutic compound interacts with its target protein at the individual level. As these systems become more integrated into standard laboratory workflows, they are expected to reduce the time and cost associated with protein sequencing. The ability to perform high-sensitivity detection without the need for the massive infrastructure required by traditional mass spectrometry is democratizing access to deep proteomic insights across the global scientific community.

Advanced Sequencing and Mapping Techniques

High-Sensitivity Fluorosequencing and Iterative Mapping

Other innovative approaches, such as fluorosequencing, are pushing the boundaries of what is possible by labeling specific amino acids and removing residues sequentially to determine a protein’s identity. This method relies on the principle that even a partial map of a protein’s sequence can be compared against a database to uniquely identify the molecule. Research indicates that labeling just four specific amino acids is often enough to identify approximately 95% of the proteins in the human proteome. This high-efficiency approach minimizes the chemical complexity of the process while maximizing the data output. Additionally, iterative mapping techniques are being employed to quantify various proteoforms at the single-molecule level. These methods involve the repeated application of binders to a protein, building a detailed profile of its surface and modifications through successive cycles of observation.
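
The idea of matching a partial map against a database can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the published fluorosequencing pipeline: the four sequences, their names, and the choice of three labeled residue types are hypothetical, and real measurements are noisy rather than exact.

```python
from collections import defaultdict

# Hypothetical toy "database"; a real search would use a reference proteome.
DATABASE = {
    "pep_1": "MKCAYLK",
    "pep_2": "MACKYLA",
    "pep_3": "GKCAYLK",
    "pep_4": "AKCAYLR",
}

# Assumed set of labeled residue types, each mapped to a label symbol.
LABELED = {"K": "k", "C": "c", "Y": "y"}


def label_pattern(sequence, labeled=LABELED):
    """Reduce a sequence to its partial map: the label seen at each
    position, or '.' where the residue carries no label."""
    return "".join(labeled.get(residue, ".") for residue in sequence)


def build_index(database):
    """Group database entries by the partial map they would produce."""
    index = defaultdict(list)
    for name, sequence in database.items():
        index[label_pattern(sequence)].append(name)
    return index


def identify(observed_pattern, index):
    """Return every database entry consistent with an observed pattern."""
    return index.get(observed_pattern, [])


def unique_fraction(database):
    """Fraction of entries whose partial map matches no other entry."""
    index = build_index(database)
    unique = sum(1 for names in index.values() if len(names) == 1)
    return unique / len(database)


index = build_index(DATABASE)
print(identify("..cky..", index))   # one candidate: unambiguous
print(identify(".kc.y.k", index))   # two candidates: ambiguous
print(unique_fraction(DATABASE))
```

In this toy set, `pep_1` and `pep_3` differ only at an unlabeled position and therefore share a pattern, which is why adding another labeled residue type tends to raise the fraction of entries that can be identified uniquely.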

The practical applications of these mapping techniques are especially evident in the study of neurodegenerative diseases, where the protein tau plays a central role. In conditions like Alzheimer’s, the tau protein undergoes specific phosphorylation patterns that lead to the formation of toxic aggregates in the brain. Identifying these specific patterns is essential for understanding how the brain degrades and for developing interventions that can stop the process. Traditional methods struggle to distinguish between the many different versions of tau present in a single sample, but iterative mapping allows researchers to see each individual proteoform in high definition. By providing a detailed map of these modifications, scientists can begin to correlate specific molecular signatures with different stages of disease progression, opening the door for more accurate diagnostics and the development of therapies that target the underlying cause of neural decline.
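
As a rough sketch of how single-molecule readouts could be turned into proteoform counts, the Python example below tallies the modification pattern seen on each molecule. Everything in it is an assumption for illustration: the site names are placeholders rather than real tau phosphorylation sites, and the observations are invented, not measured.

```python
from collections import Counter

# Placeholder names for three modification sites (hypothetical).
SITES = ("site_1", "site_2", "site_3")

# One tuple per molecule; each entry records whether the binder for the
# corresponding site was detected on that molecule. Invented values.
observations = [
    (True, False, False),
    (True, True, False),
    (True, False, False),
    (False, False, False),
    (True, True, False),
    (True, False, False),
]


def describe(pattern, sites=SITES):
    """Turn a tuple of binder hits into a readable proteoform label."""
    modified = [site for site, hit in zip(sites, pattern) if hit]
    return "+".join(modified) if modified else "unmodified"


# Each distinct pattern is treated as one proteoform and counted.
counts = Counter(observations)
for pattern, n in counts.most_common():
    print(describe(pattern), n)
```

Because each molecule keeps its full pattern, the tally separates a molecule modified at both `site_1` and `site_2` from one modified at `site_1` alone, a distinction that averaged per-site measurements would blur.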

The Potential of Nanopore Proteomics

Inspired by the success of nanopore DNA sequencing, nanopore proteomics involves threading a linearized protein through a nanometer-scale pore in a membrane. As the protein passes through the pore, it disrupts an ionic current in a way that is characteristic of the specific amino acids passing through at that moment. This disruption creates a distinctive electrical signature, effectively “reading” the protein sequence as it moves. To manage the speed of the protein, which would otherwise move too quickly for accurate detection, researchers employ molecular motors to pull the molecule through the pore at a controlled rate. This technique represents a radical departure from traditional chemical sequencing, offering a purely physical method of detection that could theoretically read entire protein chains from end to end without the need for extensive sample preparation or labeling.

While achieving high accuracy was initially a major hurdle for nanopore technology, new “rereading” techniques have significantly improved the reliability of the data. By passing the same protein molecule through the pore multiple times, researchers can average the signals to eliminate noise and increase the precision of the amino acid identification. This iterative process has allowed for the distinction of all twenty proteinogenic amino acids, a feat once thought to be nearly impossible for electronic sensors. Furthermore, the development of specialized “pore proteins” and solid-state membranes is expanding the versatility of this technology, allowing it to handle proteins of various sizes and charge profiles. As these sensors become more refined, they hold the potential to provide real-time, portable protein sequencing, which could revolutionize everything from bedside diagnostics to environmental monitoring in the field.
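
The benefit of rereading can be illustrated with a short simulation. The Python sketch below assumes each pass yields the true signal level plus independent Gaussian noise, which is a simplification rather than a model of any real nanopore; the signal level, noise size, and function names are hypothetical. Under that assumption, the spread of the averaged estimate shrinks roughly in proportion to one over the square root of the number of passes.

```python
import random
import statistics


def consensus(true_level, noise_sd, passes, rng):
    """Average several noisy reads of the same signal level."""
    reads = [rng.gauss(true_level, noise_sd) for _ in range(passes)]
    return statistics.fmean(reads)


def spread_of_consensus(passes, trials=2000, true_level=1.0,
                        noise_sd=0.2, seed=0):
    """Estimate how much the consensus value varies from molecule to
    molecule when each molecule is read `passes` times."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    estimates = [
        consensus(true_level, noise_sd, passes, rng) for _ in range(trials)
    ]
    return statistics.stdev(estimates)


# More passes per molecule give a tighter consensus estimate.
for n in (1, 4, 16):
    print(n, round(spread_of_consensus(n), 3))
```

With a single-pass noise of 0.2, four passes should bring the spread to about 0.1 and sixteen passes to about 0.05, which is why rereading helps separate amino acids whose signals differ only slightly.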

The Future of Molecular Diagnostics

Complementary Roles in Modern Research

As next-generation proteomics methods approach full commercial readiness, it is becoming clear that they are intended to complement rather than replace existing technologies like mass spectrometry. Mass spectrometry remains a powerful and essential tool for high-throughput bulk analysis, where the goal is to get a broad overview of the thousands of proteins present in a complex biological sample. However, single-molecule methods offer the precision and sensitivity needed to find the rare, low-abundance proteoforms that are often the actual triggers of pathological changes. By using these two approaches in tandem, scientists can maintain a global perspective on protein expression while simultaneously examining the specific molecular variations that define an individual’s health status. This hybrid strategy covers more of the proteome than either approach alone, from the most abundant structural proteins to the rarest signaling molecules.

The integration of these diverse technologies is leading to a more holistic understanding of biological systems. For instance, a researcher might use mass spectrometry to identify a general increase in protein activity within a tumor sample and then employ single-molecule sequencing to pinpoint the exact proteoform responsible for drug resistance. This layered approach allows for a more efficient diagnostic process, where general trends are identified quickly and specific causes are investigated with high-resolution tools. Moreover, the data generated by these next-generation platforms is increasingly being analyzed using advanced machine learning algorithms, which can recognize patterns in protein behavior that are invisible to the human eye. This synergy between hardware innovation and computational power is accelerating the pace of discovery, making it possible to translate complex molecular data into actionable clinical insights faster than ever before.

A New Era for Biological Discovery

The transition to single-molecule proteomics is viewed as an inevitable and necessary step in the evolution of biological research. Despite remaining hurdles, such as the technical difficulty of applying fluorescent or movement-facilitating tags to proteins on a massive scale, the field is moving toward a high-definition view of life’s molecular variations. This deeper understanding of the cellular machinery promises to revolutionize the fields of drug discovery and personalized medicine. By finally reading the complex, nuanced language of proteins in its native form, researchers are poised to unlock new treatments for some of the most challenging diseases facing humanity. The ability to see the proteome with such clarity will allow for the design of drugs that are more effective and have fewer side effects, as they will be tailored to the specific molecular reality of the patient.

Moving forward, the primary focus for the scientific community should be the standardization of these single-molecule workflows to ensure reproducibility across different laboratories and clinical settings. Establishing robust protocols for sample preparation and data interpretation will be essential for the widespread adoption of these technologies. Additionally, interdisciplinary collaboration between chemists, engineers, and clinicians will be vital for refining the hardware and identifying the most pressing medical applications. As these tools become more accessible, the focus will likely shift toward longitudinal studies that track changes in the proteome over time, providing a window into how lifestyle, environment, and aging affect our molecular health. By embracing these next-generation tools, society can move toward a future where disease is caught at its very earliest molecular inception, long before physical symptoms appear, fundamentally changing the nature of healthcare.