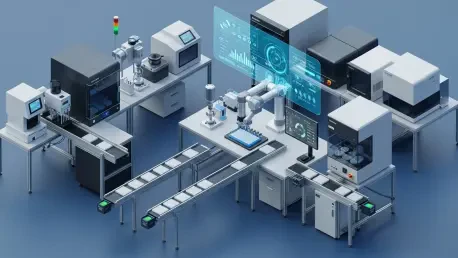

The relentless pursuit of novel therapeutic agents has reached a critical juncture where the sheer volume of potential chemical and genetic interactions far exceeds the capacity of traditional laboratory methodologies. In the modern pharmaceutical landscape, High-Throughput Screening (HTS) serves as the primary engine for discovery, combining robotics, precision fluidics, and high-speed data analytics to interrogate biological targets far faster than manual methods allow. These automated platforms are no longer viewed merely as specialized tools but as comprehensive ecosystems that define the operational rhythm of the 21st-century laboratory. By shifting the burden of repetitive execution from the researcher to an integrated machine architecture, these systems greatly reduce the variability introduced by manual handling while maintaining a pace of experimentation that can screen hundreds of thousands of compounds in a single, continuous campaign. Understanding that complexity means examining the underlying structural components, from the physical movement of microplates to the software logic that governs every nanoliter of liquid dispensed.

Systematic Integration and Robotic Frameworks

The structural integrity of a screening campaign depends entirely on the seamless orchestration of hardware modules that must communicate with millisecond precision to avoid catastrophic failure. At the center of this coordination is the centralized control system, a digital nervous system that tracks the movement of microplates across various stations while maintaining a strict audit trail for every well. In a high-pressure environment, a minor desynchronization between a robotic gripper and a storage incubator can lead to the loss of precious samples or, perhaps more damagingly, the creation of silent errors in data attribution. This integration layer ensures that as a plate moves from a storage hotel to a liquid handler and eventually to a detector, its identity and environmental history remain perfectly intact. This level of cohesion is what allows large-scale operations to maintain their throughput without sacrificing the high data quality required for regulatory filing or downstream hit validation, essentially turning the laboratory into a high-precision manufacturing plant for scientific knowledge.
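The identity-tracking idea described above can be illustrated with a minimal sketch. It assumes each plate carries a barcode and that the control system refuses any move whose source station does not match the plate's last recorded location. The class and method names (`PlateTracker`, `register`, `move`, `history`) are hypothetical and do not come from any particular vendor's control software.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Dict, List, Optional


@dataclass
class PlateEvent:
    """One entry in a plate's audit trail."""
    station: str
    timestamp: datetime


@dataclass
class PlateRecord:
    barcode: str
    events: List[PlateEvent] = field(default_factory=list)

    @property
    def location(self) -> Optional[str]:
        # The last logged station is treated as the plate's current location.
        return self.events[-1].station if self.events else None


class PlateTracker:
    """Hypothetical in-memory tracker; a real system would persist events."""

    def __init__(self) -> None:
        self._plates: Dict[str, PlateRecord] = {}

    def register(self, barcode: str, station: str) -> None:
        if barcode in self._plates:
            raise ValueError(f"duplicate barcode: {barcode}")
        record = PlateRecord(barcode)
        record.events.append(PlateEvent(station, datetime.now(timezone.utc)))
        self._plates[barcode] = record

    def move(self, barcode: str, source: str, destination: str) -> None:
        record = self._plates[barcode]
        # Reject the move if hardware and software disagree about location,
        # rather than silently attributing data to the wrong plate.
        if record.location != source:
            raise RuntimeError(
                f"{barcode} expected at {source}, last seen at {record.location}"
            )
        record.events.append(PlateEvent(destination, datetime.now(timezone.utc)))

    def history(self, barcode: str) -> List[str]:
        return [event.station for event in self._plates[barcode].events]
```

Failing loudly on a location mismatch is a deliberate design choice in this sketch: a halted run is usually cheaper than a screen whose results are attributed to the wrong wells.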

Robotic hardware provides the physical force required to sustain these operations, utilizing multi-axis arms and high-speed conveyor systems to move plates between stations. These robotic units must be engineered for extreme reliability, as they are often required to operate twenty-four hours a day during intensive screening cycles where downtime can derail a multi-million-dollar project. Beyond mere speed, modern robotics must possess a high degree of flexibility to accommodate shifting experimental needs, such as moving between standard 96-well formats and the miniaturized 384- and 1536-well plates that are widely used to cut reagent costs. The design of the robotic workspace also requires careful consideration of the physical layout, ensuring that high-speed movements do not introduce vibrations that could interfere with sensitive optical readings or liquid dispensing accuracy. As these systems evolve, the emphasis has shifted from brute mechanical power toward intelligent motion planning that optimizes the path of each plate to minimize transit time and maximize the utilization of every instrument in the cluster.
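Switching between plate densities is largely a matter of well addressing. The sketch below encodes the standard row and column counts for 96-, 384-, and 1536-well plates and shows one common way of mapping a well onto the next denser format. The interleaved quadrant layout is an assumption (other stamping patterns are in use), and the function names are hypothetical.

```python
# Rows x columns for the three microplate densities discussed above.
PLATE_FORMATS = {96: (8, 12), 384: (16, 24), 1536: (32, 48)}

_LETTERS = "ABCDEFGHIJKLMNOPQRSTUVWXYZ"


def row_label(row: int) -> str:
    """Zero-based row index to a label: A..Z, then AA, AB, ... for 1536-well."""
    if row < 26:
        return _LETTERS[row]
    return _LETTERS[row // 26 - 1] + _LETTERS[row % 26]


def well_name(row: int, col: int, plate_format: int) -> str:
    """Return a name such as 'A1' after checking the well exists on the plate."""
    rows, cols = PLATE_FORMATS[plate_format]
    if not (0 <= row < rows and 0 <= col < cols):
        raise ValueError(f"well ({row}, {col}) is outside a {plate_format}-well plate")
    return f"{row_label(row)}{col + 1}"


def stamp_to_denser_plate(row: int, col: int, quadrant: int) -> tuple:
    """Map a source well to the plate with four times as many wells.

    Assumes an interleaved layout: quadrant 0 lands on even rows and even
    columns, quadrant 1 on even rows and odd columns, and so on.
    """
    if quadrant not in (0, 1, 2, 3):
        raise ValueError("quadrant must be 0, 1, 2, or 3")
    return 2 * row + quadrant // 2, 2 * col + quadrant % 2
```

For example, well H12 of a 96-well plate (row 7, column 11) stamped into quadrant 3 lands at row 15, column 23 of a 384-well plate, which `well_name` reports as `P24`.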

Precision Liquid Handling and Reagent Management

The accuracy of a high-throughput screen is fundamentally limited by the performance of its liquid handling systems, which must dispense microscopic volumes of reagents with surgical precision. Traditional tip-based pipetting systems, while still relevant for larger volume transfers and buffer preparation, are increasingly being supplemented by non-contact technologies that eliminate the risks of cross-contamination and the excessive waste of plastic consumables. Precision in this context is not just about the volume itself but the consistency of that volume across every well of a plate, which in miniaturized formats means up to 1,536 wells. If a dispenser fails to maintain a low coefficient of variation (the standard deviation of the dispensed volumes divided by their mean), the resulting “noise” in the biological data can easily drown out the signal of a potential drug candidate, leading to missed opportunities or the pursuit of false positives that waste years of secondary research. Consequently, the management of fluid dynamics has become a core competency for researchers who must balance the physical properties of complex reagents with the mechanical limitations of the dispensing hardware.
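The coefficient of variation mentioned above is simple to compute from a set of measured dispense volumes. The sketch below is illustrative only: the function names are hypothetical, and the acceptance threshold is left as a required argument because the acceptable CV depends on the assay and the instrument.

```python
import statistics


def coefficient_of_variation(volumes_nl):
    """CV in percent: sample standard deviation divided by the mean."""
    mean = statistics.fmean(volumes_nl)
    if mean == 0:
        raise ValueError("mean volume is zero; CV is undefined")
    return statistics.stdev(volumes_nl) / mean * 100.0


def dispenser_within_tolerance(volumes_nl, max_cv_percent):
    """True if the measured dispenses meet the caller-supplied CV limit."""
    return coefficient_of_variation(volumes_nl) <= max_cv_percent
```

In practice the volumes would come from a gravimetric or dye-based calibration run across the whole plate, so that edge wells and individual dispensing channels are all represented in the statistic.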

Leading-edge platforms have increasingly adopted acoustic droplet ejection (ADE), a technology that uses focused ultrasonic energy to propel nanoliter-sized droplets from a source plate to a destination plate without any physical contact. This approach has revolutionized reagent management by allowing for extreme miniaturization, which is essential when working with scarce proteins or expensive chemical libraries that are difficult to replenish. By removing the need for disposable tips, ADE systems significantly lower the operational cost of a screen while simultaneously improving the integrity of the data by preventing the adsorption of compounds onto plastic tip surfaces. This shift toward nanoliter-scale handling requires a sophisticated understanding of plate geometry and liquid surface tension, as the hardware must be precisely calibrated to account for the physical characteristics of different solvents, such as dimethyl sulfoxide (DMSO). Aligning these liquid handling parameters with the biological requirements of the assay is one of the most critical steps in ensuring that the screening results are both reproducible and biologically relevant.
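Because acoustic dispensers eject discrete droplets, a requested volume is delivered as a whole number of droplets. The sketch below shows the arithmetic under two stated assumptions: a fixed droplet volume of 2.5 nL (used here as an illustrative default, not a property of every instrument) and a caller-supplied final well volume for estimating the solvent concentration. The function names are hypothetical.

```python
def plan_acoustic_transfer(target_nl, droplet_nl=2.5):
    """Return (droplet_count, delivered_nl) for a requested transfer volume.

    The 2.5 nL default droplet size is an assumption for illustration.
    At least one droplet is always ejected.
    """
    if target_nl <= 0 or droplet_nl <= 0:
        raise ValueError("volumes must be positive")
    droplets = max(1, round(target_nl / droplet_nl))
    return droplets, droplets * droplet_nl


def solvent_percent(transfer_nl, final_volume_nl):
    """Solvent (for example DMSO) as a volume percentage of the final well volume."""
    if not 0 < transfer_nl <= final_volume_nl:
        raise ValueError("transfer must be positive and no larger than the final volume")
    return transfer_nl / final_volume_nl * 100.0
```

Under these assumptions, a 50 nL compound transfer is 20 droplets, and in a 5 µL (5,000 nL) assay it contributes 1% solvent by volume, a figure worth checking against the solvent tolerance of the assay's cells or enzymes.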

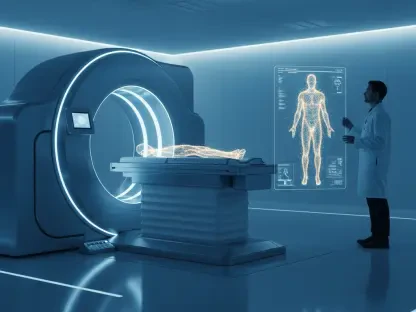

Detection Technologies and Signal Readout

Once the chemical interactions have occurred within the microplate wells, the platform must transition into its analytical phase, where biological responses are converted into quantifiable digital signals. The detection module acts as the “eyes” of the HTS platform, employing a variety of optical modalities such as fluorescence intensity, time-resolved fluorescence, and luminescence to measure the outcome of a reaction. Speed is of the essence here, as the detector must be able to process plates as quickly as the robotic system can deliver them to avoid creating a bottleneck in the workflow. However, speed cannot come at the expense of sensitivity; the instrument must be capable of detecting minute changes in signal against the background noise of the assay chemistry. High-performance detectors are characterized by a wide dynamic range, allowing them to capture both very weak signals from subtle inhibitors and very strong signals from potent activators without saturating the sensor or losing detail in the noise.
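Dynamic range and saturation can be made concrete with a few lines of arithmetic. The sketch below expresses dynamic range in decades (orders of magnitude between the lowest detectable signal and the saturation level) and flags wells that have reached the detector ceiling. The function names and the example ceiling are hypothetical; real limits come from the instrument's specifications.

```python
import math


def dynamic_range_decades(lowest_detectable, saturation_level):
    """Orders of magnitude between the detection floor and the saturation ceiling."""
    if lowest_detectable <= 0 or saturation_level <= lowest_detectable:
        raise ValueError("need 0 < lowest_detectable < saturation_level")
    return math.log10(saturation_level / lowest_detectable)


def saturated_wells(readings, saturation_level):
    """Return the wells whose raw reading is at or above the detector ceiling."""
    return [well for well, value in readings.items() if value >= saturation_level]


def signal_to_background(signal, background):
    """Ratio of a well's signal to the background control signal."""
    if background <= 0:
        raise ValueError("background must be positive")
    return signal / background
```

A reading flagged by `saturated_wells` should generally be treated as a lower bound rather than a measurement, since the true signal may be well above the ceiling.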

In addition to traditional plate readers, many modern platforms are integrating high-content screening (HCS) capabilities, which use automated microscopy to capture detailed images of cellular morphology and protein localization. This adds a layer of spatial complexity to the data, allowing researchers to see not just whether a compound worked, but how it affected the internal structures of the cell. The integration of such complex imaging hardware requires specialized software pipelines that can process terabytes of image data in real time, extracting meaningful features through advanced computer vision algorithms. This transition from simple numerical readouts to complex phenotypic descriptions represents a significant leap in the quality of information gathered during a screen. The challenge for system designers lies in synchronizing these data-heavy detection events with the overall mechanical flow of the platform, ensuring that the detector is ready for the next plate the moment the previous analysis is finalized, thereby maintaining a steady pulse throughout the duration of the experiment.

Data Infrastructure and Workflow Orchestration

A functioning HTS platform is essentially a massive data factory that produces millions of individual data points alongside a mountain of associated metadata, including plate maps, reagent lot numbers, and environmental conditions. Managing this information requires a robust digital infrastructure that integrates the raw instrument output with a Laboratory Information Management System (LIMS) for long-term storage and analysis. The data management layer must perform complex normalization routines in real time, adjusting for plate-to-plate variability and calculating statistical parameters like the Z-factor, which compares the separation between control signals with their variability, to validate the quality of the screen as it progresses. Without this automated oversight, a laboratory would be buried under the weight of its own output, unable to distinguish between a significant biological discovery and a mechanical fluke. The ability to monitor the “health” of an assay through real-time data visualization allows researchers to intervene early if a drift in performance is detected, potentially saving hundreds of thousands of dollars in wasted reagents.
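The quality metric and normalization step described above can be sketched briefly. The control-based form of the Z-factor, often written Z', is 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|, and a value above roughly 0.5 is commonly taken to indicate a robust assay. The sketch assumes an inhibition assay in which negative controls give the uninhibited (high) signal and positive controls give the fully inhibited (low) signal; the function names are hypothetical.

```python
import statistics


def z_prime(positive_controls, negative_controls):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    mean_pos = statistics.fmean(positive_controls)
    mean_neg = statistics.fmean(negative_controls)
    separation = abs(mean_pos - mean_neg)
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    spread = statistics.stdev(positive_controls) + statistics.stdev(negative_controls)
    return 1.0 - 3.0 * spread / separation


def percent_inhibition(raw, neg_mean, pos_mean):
    """Normalize a raw well reading to 0% (negative control) .. 100% (positive control).

    Assumes negative controls are uninhibited and positive controls fully inhibited.
    """
    if neg_mean == pos_mean:
        raise ValueError("control means are identical; cannot normalize")
    return 100.0 * (neg_mean - raw) / (neg_mean - pos_mean)
```

Computing both values per plate, using that plate's own control wells, is what allows plate-to-plate variability to be corrected and a drifting assay to be flagged while the campaign is still running.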

The overarching logic that governs this entire process is the scheduling software, which serves as the “brain” of the automated platform. This software is responsible for the intricate timing of every movement, ensuring that plates are incubated for the exact number of minutes required before being moved to the next station. Advanced schedulers are capable of dynamic adjustment, meaning they can re-order tasks on the fly if a particular module encounters an error or if a specific plate requires an extra wash step. This level of operational intelligence is what separates a collection of individual machines from a truly integrated platform. By optimizing the “dead time” between processes, the scheduling software maximizes the throughput of the system, allowing for the simultaneous execution of multiple different assays on the same hardware. This orchestration is vital for maintaining the economic viability of the platform, as it ensures that expensive analytical instruments are rarely idle and that the flow of discovery remains constant regardless of the complexity of the screening protocol.
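The core timing problem a scheduler solves can be shown with a deliberately simplified sketch. It assumes every plate visits the same ordered list of stations, each station handles one plate at a time, and a plate may wait between steps. That last assumption is a simplification: a production scheduler would also enforce exact incubation windows and re-plan around instrument errors. The function names are hypothetical.

```python
def build_schedule(plates, steps):
    """Greedy schedule: each plate starts a step as soon as it and the station are free.

    plates: plate identifiers, in processing order.
    steps:  list of (station_name, duration_minutes) applied to every plate.
    Returns {plate: [(station, start, end), ...]} with times in minutes.
    """
    station_free_at = {station: 0 for station, _ in steps}
    timeline = {}
    for plate in plates:
        plate_free_at = 0
        entries = []
        for station, minutes in steps:
            # A step cannot start until the plate has finished its previous
            # step and the station has finished its previous plate.
            start = max(plate_free_at, station_free_at[station])
            end = start + minutes
            station_free_at[station] = end
            plate_free_at = end
            entries.append((station, start, end))
        timeline[plate] = entries
    return timeline


def makespan(timeline):
    """Total run time: the latest end time across all plates."""
    return max(end for entries in timeline.values() for _, _, end in entries)
```

With a 2-minute dispense followed by a 3-minute read, three plates finish in 11 minutes rather than the 15 a strictly serial run would take, because the dispenser works on the next plate while the reader is busy. That overlap is the "dead time" the scheduler is reclaiming.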

Strategic Design and Future Modular Evolution

The ultimate success of an automated screening platform is determined less by the peak speed of its fastest component and more by the strategic balance of its entire workflow. Identifying and mitigating bottlenecks, such as a slow imaging step that holds up a fast liquid handler, is a continuous process of refinement that requires both engineering expertise and biological insight. Laboratories often find themselves choosing between two distinct philosophies: the fully integrated “turnkey” system and the modular, reconfigurable platform. Fully integrated systems are typically optimized for a single, high-volume task and offer the highest levels of consistency and throughput, making them the preferred choice for industrial lead discovery. In contrast, modular systems provide the flexibility needed for academic environments or early-stage research and development, where the nature of the assays might change from week to week. This flexibility allows researchers to pivot quickly in response to new scientific findings, though it often requires a more hands-on approach to system configuration and validation.
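The point about balance can be quantified: in steady state, a line of stations can process plates no faster than its slowest station. The sketch below finds that station and reports how busy every other station would be as a result. The function names and the per-plate times used in the example are hypothetical.

```python
def find_bottleneck(seconds_per_plate):
    """Return (slowest_station, plates_per_hour) for a serial workflow."""
    station = max(seconds_per_plate, key=seconds_per_plate.get)
    return station, 3600.0 / seconds_per_plate[station]


def utilization(seconds_per_plate):
    """Fraction of time each station is busy when the slowest one sets the pace."""
    slowest = max(seconds_per_plate.values())
    return {station: t / slowest for station, t in seconds_per_plate.items()}
```

For instance, with a liquid handler at 30 seconds per plate, a reader at 45, and an imager at 120, the imager limits the line to 30 plates per hour and the liquid handler is busy only a quarter of the time. Speeding up the liquid handler further would change nothing, whereas adding a second imager would.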

As the industry moves forward, the integration of artificial intelligence and machine learning into the HTS workflow is becoming the next frontier of optimization. Future systems will likely move beyond simple scheduling to autonomous decision-making, where the platform analyzes its own data in real time to determine which compounds should be screened next or which experimental conditions require further optimization. This shift toward “closed-loop” discovery will further reduce the time required to move from a library of unknown compounds to a validated lead. For the contemporary scientist, the challenge is to master these technological layers, ensuring that the automated systems do not become “black boxes” but remain transparent tools for rigorous inquiry. The future of drug discovery lies in the continued refinement of these architectures, pushing the boundaries of miniaturization and intelligence to tackle increasingly complex biological models, such as 3D organoids and patient-derived cell lines, which were previously considered too difficult to screen at scale.

The evolution of automated HTS platforms has transformed the methodology of drug discovery into a high-precision discipline that balances mechanical reliability with deep biological insight. Moving forward, laboratories should prioritize the implementation of adaptive scheduling and real-time quality control analytics to maximize the utility of their existing hardware. The transition toward non-contact liquid handling and high-content imaging should be accelerated to capture more nuanced data while reducing the overall environmental and financial footprint of large-scale screens. As these systems become more autonomous, maintaining a transparent and well-documented data pipeline will be essential for ensuring that machine-generated hits can be successfully translated into clinical candidates. Researchers are encouraged to invest in modular architectures that can be easily updated with the latest detection technologies, ensuring their platforms remain competitive in a rapidly changing technological landscape. Ultimately, the goal is to create a seamless interface between digital logic and biological reality, where the platform serves as a catalyst for breakthrough discoveries that were once hindered by the limitations of the human hand.