The traditional laboratory is changing as the pharmaceutical industry moves away from manual benchwork toward systems that plan and run experiments themselves. Insilico Medicine has pushed this shift forward with LabClaw, an operating system designed to orchestrate the complex requirements of autonomous drug discovery. Earlier laboratory automation relied on rigid, pre-programmed instructions to perform repetitive tasks. LabClaw instead functions as a dynamic intelligence layer. It is the bridge between the predictions of generative AI and the physical reality of wet-lab experimentation, specifically within the LifeStar2 facility. Through its “Agent-Guard” architecture, the platform manages scientific workflows with enough autonomy that human researchers no longer have to spend their time on logistical coordination and manual labor. The goal is not merely speed. It is a “Pharmaceutical Superintelligence” in which hardware and software are integrated closely enough that the system understands the scientific context of each experiment, and every data point it generates is immediately usable in the drug development pipeline.

This leap in capability addresses a fundamental challenge in modern biotechnology: the gap between fast computational modeling and the slower, more error-prone process of physical validation. LabClaw removes much of this friction by acting as the central controller of a fully automated ecosystem, coordinating everything from initial target identification to the final validation of a drug candidate. By automating material tracking and the execution of complex protocols, the system turns the laboratory into a self-correcting, highly efficient environment. Scientists are no longer bogged down by pipetting or by the administrative burden of scheduling equipment. Instead, they can focus on high-level strategy and the creative aspects of drug design. This reflects a broader industry trend toward true autonomy, in which the laboratory is not just a collection of machines but an intelligent partner that drives research forward with minimal human intervention.

The Three-Pillar Framework for Intelligent Research

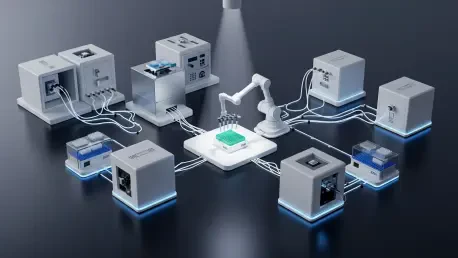

At the core of this autonomous evolution is a three-pillar framework that redefines how experiments are conceived and executed. The pillars are AI thinking, automated execution, and human supervision, and together they form a single, unified workflow. The first pillar, AI thinking, uses generative platforms to analyze large datasets, identify novel therapeutic targets, and design molecules with optimized properties. Once the digital design is complete, the second pillar, automated execution, takes over. An array of hardware, including precision robotic arms and advanced liquid handlers, carries out the physical work of the experiment. This makes the transition from digital concept to physical sample seamless and reduces the variability introduced by manual handling and human error. The system is not, however, detached from human insight. The third pillar, human supervision, maintains a “Human-in-the-Loop” mechanism that vets autonomous actions against rigorous scientific standards and safety protocols.

This integrated approach creates a closed loop that spans the entire drug discovery process, from identifying a biological target to generating the final report. Because the digital and physical realms are connected, experimental results are captured automatically and fed back into the AI models in real time. The system can therefore refine its predictions based on actual laboratory performance, so each round of experiments improves the next. If a compound does not perform as predicted in a phenotypic assay, that result is fed back immediately and the next round of designs is adjusted to account for it. This sharply reduces the time and capital spent on unproductive experimental paths and lets research teams navigate chemical space more effectively. By connecting the three pillars, the laboratory becomes a responsive system that evolves alongside the research project, ensuring that the most promising leads are pursued first.
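The source does not describe how LabClaw feeds assay results back into its models. The Python below is a minimal sketch of the closed-loop idea only. It assumes a toy predictor that adds a learned per-scaffold correction to a base score. `ClosedLoopModel`, `run_round`, the scaffold names, and the measurements are all hypothetical, and the whole thing is far simpler than a real generative chemistry model.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable

@dataclass
class ClosedLoopModel:
    """Hypothetical toy model: base score plus a per-scaffold correction learned from assays."""
    residuals: dict[str, list[float]] = field(default_factory=dict)

    def predict(self, scaffold: str, base_score: float) -> float:
        # Shift the base score by the average past error for this scaffold, if any.
        past = self.residuals.get(scaffold)
        return base_score + (mean(past) if past else 0.0)

    def learn(self, scaffold: str, base_score: float, measured: float) -> None:
        # Record how far the measured result was from the uncorrected base score.
        self.residuals.setdefault(scaffold, []).append(measured - base_score)

def run_round(model: ClosedLoopModel,
              candidates: list[tuple[str, float]],
              assay: Callable[[str], float]) -> tuple[str, float]:
    """One design-test-learn cycle: rank candidates, test the top one, feed the result back."""
    scaffold, base = max(candidates, key=lambda c: model.predict(c[0], c[1]))
    measured = assay(scaffold)  # stands in for the automated wet-lab measurement
    model.learn(scaffold, base, measured)
    return scaffold, measured

# Usage with made-up numbers: scaffold_A looks best on paper but fails the assay.
model = ClosedLoopModel()
candidates = [("scaffold_A", 0.9), ("scaffold_B", 0.7)]
assay_results = {"scaffold_A": 0.2, "scaffold_B": 0.8}
picks = [run_round(model, candidates, assay_results.__getitem__)[0] for _ in range(3)]
print(picks)  # ['scaffold_A', 'scaffold_B', 'scaffold_B']
```

In this toy run, the scaffold that underperforms in the assay loses its top ranking in the very next round, which is the behavior the closed loop is meant to produce.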

Solving the Limitations of Traditional Laboratories

The design of LabClaw is specifically engineered to overcome three primary bottlenecks that have historically hampered the progress of biomedical research: workflow rigidity, data silos, and high coordination costs. Traditional automation is often brittle; if a single component fails or a quality control anomaly occurs, the entire process usually grinds to a halt, requiring a human operator to diagnose and fix the issue. LabClaw solves this through its ability to understand scientific context and dynamically adjust to variables. If a sensor detects a deviation in an environmental parameter or a reagent is depleted, the system can autonomously reroute the workflow or pause specific modules without compromising the entire experiment. This adaptability ensures that the facility remains operational around the clock, significantly increasing the throughput of drug discovery projects and reducing the downtime associated with manual troubleshooting.
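The source does not specify how LabClaw decides what to reroute or pause. The following is a minimal sketch of the behavior described above. It assumes each step declares its hardware module, the reagents it consumes, and the steps it depends on. `Step`, `plan_run`, and the module and reagent names are hypothetical. Steps blocked by a depleted reagent or an out-of-range module are paused together with anything downstream of them, while independent steps keep running.

```python
from dataclasses import dataclass, field

@dataclass
class Step:
    name: str
    module: str                                               # hypothetical hardware module id
    reagents: dict[str, float] = field(default_factory=dict)  # reagent -> volume needed (uL)
    after: tuple[str, ...] = ()                               # names of steps this one depends on

def plan_run(steps: list[Step],
             inventory: dict[str, float],
             module_ok: dict[str, bool]) -> tuple[list[str], list[str]]:
    """Split steps (given in dependency order) into runnable and paused instead of halting everything."""
    stock = dict(inventory)  # work on a copy so the caller's inventory is untouched
    runnable: list[str] = []
    paused: list[str] = []
    for step in steps:
        depends_on_paused = any(dep in paused for dep in step.after)
        short = any(stock.get(r, 0.0) < v for r, v in step.reagents.items())
        env_fault = not module_ok.get(step.module, True)  # modules with no reading are assumed healthy
        if depends_on_paused or short or env_fault:
            paused.append(step.name)
            continue
        for reagent, volume in step.reagents.items():
            stock[reagent] -= volume  # reserve reagents for this step
        runnable.append(step.name)
    return runnable, paused

# Usage: the incubator reports a deviation, so only its branch of the workflow pauses.
steps = [
    Step("prep_plate", "liquid_handler", {"buffer": 50.0}),
    Step("incubate", "incubator", after=("prep_plate",)),
    Step("image", "imager", after=("incubate",)),
    Step("stain_controls", "liquid_handler", {"dye": 20.0}),
]
runnable, paused = plan_run(steps, {"buffer": 100.0, "dye": 30.0}, {"incubator": False})
print(runnable, paused)  # ['prep_plate', 'stain_controls'] ['incubate', 'image']
```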

Furthermore, the platform addresses the pervasive issue of data silos by creating a unified environment in which information flows freely between disparate pieces of equipment. In many labs, the data generated by a plate reader remains isolated from the system used for sample preparation, which forces manual data entry and analysis. LabClaw removes these barriers by automatically interpreting results from different hardware components and integrating them into a centralized database. The same integration extends to the physical movement of materials within the lab. Even in “automated” facilities, moving samples between functional “islands,” such as carrying a plate from an incubator to an imager, often requires human intervention. LabClaw closes this logistics gap by orchestrating automated guided vehicles (AGVs) that transport materials autonomously throughout the LifeStar2 facility. With these administrative and logistical hurdles removed, the research environment can function as a single, cohesive machine dedicated to scientific discovery.
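The source does not describe the structure of LabClaw's centralized database. The sketch below only illustrates the general idea of breaking down data silos. It assumes two hypothetical instrument output formats, a CSV-style plate-reader row and a JSON-style imager record. Both are mapped onto one shared record type and then merged by plate and well. Every field name here is invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Measurement:
    instrument: str
    plate_id: str
    well: str       # normalized to zero-padded form, e.g. "A01"
    metric: str
    value: float

def normalize_well(well: str) -> str:
    # "a1" and "A01" both become "A01" so results from different instruments line up.
    return f"{well[0].upper()}{int(well[1:]):02d}"

def from_plate_reader(row: dict[str, str]) -> Measurement:
    # Hypothetical CSV-style row: {"Plate": "P1", "Well": "A01", "OD600": "0.42"}
    return Measurement("plate_reader", row["Plate"], normalize_well(row["Well"]),
                       "od600", float(row["OD600"]))

def from_imager(record: dict) -> Measurement:
    # Hypothetical JSON-style record: {"plate": {"barcode": "P1"}, "well": "A1", "cell_count": 812}
    return Measurement("imager", record["plate"]["barcode"], normalize_well(record["well"]),
                       "cell_count", float(record["cell_count"]))

def merge_by_well(measurements: list[Measurement]) -> dict[tuple[str, str], dict[str, float]]:
    """Collect every metric for the same (plate, well) into one row of a shared table."""
    table: dict[tuple[str, str], dict[str, float]] = defaultdict(dict)
    for m in measurements:
        table[(m.plate_id, m.well)][m.metric] = m.value
    return dict(table)

# Usage: two instruments describe the same well differently, but end up in one row.
rows = merge_by_well([
    from_plate_reader({"Plate": "P1", "Well": "A01", "OD600": "0.42"}),
    from_imager({"plate": {"barcode": "P1"}, "well": "A1", "cell_count": 812}),
])
print(rows)  # {('P1', 'A01'): {'od600': 0.42, 'cell_count': 812.0}}
```

The important step is the per-instrument adapter. Once each format is translated into the shared record, downstream analysis no longer needs to know which vendor produced the data.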

The Specialized Roles of the Agent-Guard System

The platform's technical core is its “Agent-Guard” architecture, which assigns five specialized AI agents to different parts of the laboratory ecosystem. The Science Analyst does the intellectual heavy lifting: reviewing recent scientific literature, analyzing target novelty scores, and assessing the competitive landscape. The Experiment Coordinator manages the logistics of the research plan and makes sure every step of a protocol is correctly sequenced and scheduled. The Orchestration Expert handles communication between instruments from different vendors so that robotic arms, liquid handlers, and analytical instruments work in synchronization. Meanwhile, the QC Inspector monitors environmental parameters and experimental states in real time, and the Data Specialist ingests and processes experimental output so that every result is accurately captured and analyzed.

This multi-agent system operates within a framework of “Guards”: predefined safety and compliance boundaries that prevent the AI from making unauthorized or unsafe decisions. The agents are free to optimize workflows and find the most efficient path to an experimental goal, but only within these established protocols. This structure balances autonomy against control. If the system encounters a situation outside its predefined parameters, such as a significant deviation in a biological assay’s results, it automatically requests human validation. The system therefore remains a supportive tool for scientists rather than an unsupervised replacement. By distributing the workload among specialized agents, the platform handles a level of operational complexity that a human team could not coordinate manually, while still maintaining data integrity and safety.
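The source describes Guards only as predefined boundaries with automatic escalation to a human. The following is a minimal sketch of that pattern. It assumes a per-agent allow-list of actions and a single deviation threshold. `Guard`, `Proposal`, `Decision`, the action names, and the threshold of 3 standard deviations are all hypothetical, not LabClaw's actual interface.

```python
from dataclasses import dataclass
from enum import Enum

class Decision(Enum):
    APPROVE = "approve"     # within bounds: the agent proceeds on its own
    ESCALATE = "escalate"   # allowed action, but outside parameters: ask a human
    REJECT = "reject"       # the agent is not authorized to take this action

@dataclass(frozen=True)
class Proposal:
    agent: str        # e.g. "QC Inspector"
    action: str       # e.g. "accept_assay_result"
    deviation: float  # distance of the observed result from expectation, in standard deviations

@dataclass
class Guard:
    allowed: dict[str, set[str]]      # agent name -> actions that agent may take
    max_auto_deviation: float = 3.0   # hypothetical threshold for unsupervised approval

    def review(self, p: Proposal) -> Decision:
        # Authorization is checked first, so an unauthorized action is never escalated, only refused.
        if p.action not in self.allowed.get(p.agent, set()):
            return Decision.REJECT
        if abs(p.deviation) > self.max_auto_deviation:
            return Decision.ESCALATE
        return Decision.APPROVE

# Usage
guard = Guard({
    "QC Inspector": {"accept_assay_result", "pause_module"},
    "Data Specialist": {"ingest_results"},
})
print(guard.review(Proposal("QC Inspector", "accept_assay_result", 1.2)).value)   # approve
print(guard.review(Proposal("QC Inspector", "accept_assay_result", -4.5)).value)  # escalate
print(guard.review(Proposal("Data Specialist", "pause_module", 0.0)).value)       # reject
```

Ordering the checks this way means an agent acting outside its role is stopped regardless of the numbers. An authorized action is held for human review only when its result looks unusual.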

Natural Language Interaction and Modular Design

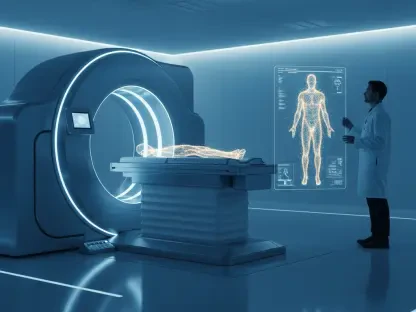

To make this high-level technology accessible to the broader scientific community, the platform features a conversational interface that allows researchers to interact with the laboratory using natural language. Instead of requiring expertise in complex programming languages or robotics coding, a scientist can simply state a research objective, such as performing a CRISPR-based biomarker validation. The AI agents then autonomously interpret this high-level command, determine the necessary reagents, schedule the appropriate equipment, and set up the data analysis parameters. This democratization of laboratory technology means that researchers can leverage the full power of an autonomous facility without needing to be experts in the underlying hardware. This shift significantly lowers the barrier to entry for complex experiments, allowing biologists and chemists to focus on the scientific implications of their work rather than the technicalities of the equipment.

The system is also modular, using a “Lego-like” architecture in which twenty experimental step modules can be combined into different protocols. These modules cover everything from initial sample preparation to final reporting, so custom workflows can be assembled rapidly. To speed this up further, the system includes pre-configured templates for common tasks such as high-throughput screening and phenotypic safety assessment. In a recent case study on anti-aging research, a project that would have required weeks of manual data gathering and experimental work needed only minutes of setup time. Because the platform handled the foundational parts of the experiment autonomously, the research team could concentrate on the study’s three final critical decision points. This modular, intuitive approach makes the lab a flexible resource rather than a static facility, one that can be reconfigured quickly as the needs of the pharmaceutical industry change.
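The source does not publish the step library or the template format. The code below is a rough sketch of “Lego-like” composition. It assumes each module declares what it consumes and what it produces. It defines two templates loosely modeled on the high-throughput screening and phenotypic safety examples, and it checks that every step's inputs were produced earlier in the chain. The module names are hypothetical and cover only a handful of steps, not the full set of twenty.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Module:
    name: str
    consumes: frozenset[str]
    produces: frozenset[str]

def module(name: str, consumes: tuple[str, ...] = (), produces: tuple[str, ...] = ()) -> Module:
    return Module(name, frozenset(consumes), frozenset(produces))

# Hypothetical subset of a step library.
LIBRARY = {m.name: m for m in [
    module("sample_prep", produces=("plate",)),
    module("compound_dispense", consumes=("plate",), produces=("dosed_plate",)),
    module("incubate", consumes=("dosed_plate",), produces=("incubated_plate",)),
    module("plate_read", consumes=("incubated_plate",), produces=("readout",)),
    module("image", consumes=("incubated_plate",), produces=("images",)),
    module("analyze_readout", consumes=("readout",), produces=("results",)),
    module("analyze_images", consumes=("images",), produces=("results",)),
    module("report", consumes=("results",), produces=("report",)),
]}

# Pre-configured templates are just ordered lists of module names.
TEMPLATES = {
    "high_throughput_screen": ["sample_prep", "compound_dispense", "incubate",
                               "plate_read", "analyze_readout", "report"],
    "phenotypic_safety": ["sample_prep", "compound_dispense", "incubate",
                          "image", "analyze_images", "report"],
}

def assemble(step_names: list[str]) -> list[Module]:
    """Chain modules in order, failing early if a step's inputs were never produced."""
    available: set[str] = set()
    protocol: list[Module] = []
    for name in step_names:
        mod = LIBRARY[name]  # raises KeyError for an unknown module name
        missing = mod.consumes - available
        if missing:
            raise ValueError(f"{name} needs {sorted(missing)} from an earlier step")
        available |= mod.produces
        protocol.append(mod)
    return protocol

# Usage
hts = assemble(TEMPLATES["high_throughput_screen"])
print(hts[-1].name)  # report
try:
    assemble(["sample_prep", "plate_read"])  # skips dispensing and incubation
except ValueError as err:
    print(err)  # plate_read needs ['incubated_plate'] from an earlier step
```

In this sketch, a custom workflow is simply a new list of module names. Reconfiguring for a different assay therefore means editing a list and letting the validation catch impossible orderings.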

Future Considerations for Autonomous Research Systems

The transition from manual laboratory practices to intelligent, autonomous cycles marks a definitive turning point for the pharmaceutical industry, offering a path to solve unmet medical needs with greater speed. As these systems continue to evolve, the focus must shift toward the creation of an open and connected ecosystem where multi-laboratory collaboration becomes the standard rather than the exception. Future iterations of this technology should prioritize the standardization of data formats and communication protocols, enabling different autonomous facilities to share insights and experimental results seamlessly. This would allow a discovery made in one part of the world to immediately inform the AI models in another, creating a global network of “Pharmaceutical Superintelligence.” Organizations should begin investing in the digital infrastructure necessary to support these autonomous systems, ensuring that their data pipelines are robust enough to handle the massive influx of information generated by high-throughput, autonomous labs.

Beyond technical integration, the industry must also address the changing role of the scientist within this new paradigm. As machines take over the execution of experiments, the value of human researchers will increasingly reside in their ability to generate high-level hypotheses and provide strategic direction. Training programs should be updated to focus on the management of AI agents and the interpretation of complex, multi-dimensional datasets. The goal is not to replace the scientist, but to augment their capabilities with tools that handle the repetitive and logistical aspects of research. By adopting these autonomous platforms, research institutions can ensure they remain competitive in an environment where the speed of discovery is the primary differentiator. Ultimately, the successful integration of systems like LabClaw will depend on a balanced approach that combines the precision of autonomous machines with the creative and ethical judgment of the human mind, leading to a more efficient and productive era of drug development.