The moment a patient walks into a clinic with a collection of non-specific complaints like fatigue and joint pain, a complex cognitive process begins for the physician, one that requires balancing intuition with evidence. In this high-stakes environment, the integration of generative artificial intelligence has often been presented as a panacea for diagnostic errors, yet the reality observed in 2026 is far more nuanced and cautious. A landmark investigation by the MESH Incubator at Mass General Brigham, appearing in JAMA Network Open, has unveiled a critical flaw in how 21 different large language models approach these medical mysteries. While these systems frequently reach the correct final conclusion when presented with a complete data set, they consistently falter during the preliminary stages of investigation. This discrepancy highlights a fundamental reasoning gap that prevents artificial intelligence from functioning as a truly independent clinical partner. Without the ability to ask the right questions early on, these digital tools remain more like sophisticated reference books than active medical thinkers.

Evaluating Diagnostic Competence Through the PrIME-LLM Framework

The Mechanics: Simulating the Stages of Clinical Engagement

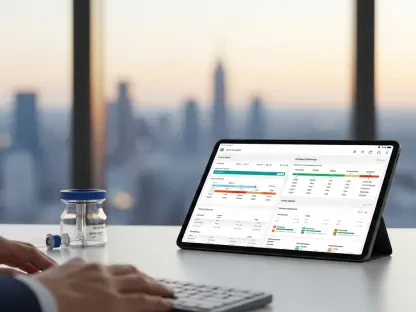

The researchers developed a specialized evaluation tool known as the PrIME-LLM framework to dissect the specific stages where artificial intelligence might succeed or fail within a clinical encounter. This metric does not simply look at whether a model gets the right answer at the end; instead, it breaks the diagnostic process down into four distinct competencies: generating a differential diagnosis, selecting the most appropriate diagnostic tests, arriving at the final diagnosis, and determining a comprehensive treatment plan. By using this granular approach, the study moved beyond the binary right or wrong assessment that characterized earlier AI research. The framework forces the model to display its work, revealing whether it is actually reasoning through the clinical data or simply guessing based on statistical probabilities found in its training data. This level of scrutiny is essential for determining if a model can safely guide a physician through the complexities of a rare or atypical patient presentation.

To make the testing environment as realistic as possible, the researchers fed patient information into the models incrementally, mirroring the way data naturally surfaces in a hospital setting. Initially, the models were given only basic demographics and the patient’s chief complaint, followed by the results of physical examinations and, eventually, specialized laboratory or imaging reports. This chronological unfolding of the case revealed that large language models often struggle when information is sparse. In the early stages, where a human doctor uses experience to narrow down a massive list of possibilities, the AI often failed to produce a clinically relevant differential diagnosis. This finding is particularly concerning because the initial workup is often the most critical phase for preventing diagnostic delay. If the model cannot properly evaluate the early signs, its eventual success in identifying the disease once all the evidence is laid out becomes far less valuable in a live clinical environment.
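The incremental disclosure described above can be sketched in a few lines of Python. This is a minimal illustration and not the study's actual harness: the stage names, the `Case` structure, and the `model` callable are all hypothetical stand-ins for whatever the researchers used.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stage names, following the order the article describes in prose.
STAGES = ("demographics_and_complaint", "physical_exam", "labs_and_imaging")


@dataclass
class Case:
    # Maps each stage name to the findings that become available at that stage.
    findings: dict[str, str]


def staged_prompts(case: Case) -> list[str]:
    """Build one cumulative prompt per stage, each adding the next block of findings."""
    revealed: list[str] = []
    prompts: list[str] = []
    for stage in STAGES:
        revealed.append(case.findings[stage])
        prompts.append("\n".join(revealed))
    return prompts


def run_staged(case: Case, model: Callable[[str], str]) -> dict[str, str]:
    """Query the model once per stage and keep every intermediate answer for review."""
    return {stage: model(prompt) for stage, prompt in zip(STAGES, staged_prompts(case))}


if __name__ == "__main__":
    # Illustrative case text only; not taken from the study.
    demo = Case(findings={
        "demographics_and_complaint": "Adult patient reporting fatigue and joint pain.",
        "physical_exam": "Exam findings would be added here.",
        "labs_and_imaging": "Laboratory and imaging results would be added here.",
    })
    # A stub model that only reports how many lines of evidence it has seen.
    answers = run_staged(demo, lambda prompt: f"{len(prompt.splitlines())} block(s) seen")
    for stage, answer in answers.items():
        print(stage, "->", answer)
```

Keeping the answer from every stage, rather than only the last one, is what makes an early-stage weakness visible: a model can be graded on its first differential separately from its final diagnosis.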

The Results: Analyzing the Patterns of Decision-Making Failure

One of the most striking findings from the Mass General Brigham study was the sheer magnitude of the performance drop-off during the investigative phase. When the models were provided with a comprehensive case summary that included all test results and specialist notes, they achieved a correct final diagnosis in over 90% of instances. However, the exact same models failed to provide an appropriate or helpful differential diagnosis in more than 80% of cases when presented with only the initial symptoms. This suggests that the current generation of large language models acts as a high-powered pattern matcher rather than a logical reasoner. They can see the answer once the clues are fully assembled, much like a person recognizing a completed jigsaw puzzle, but they cannot yet assemble those pieces themselves when the path is unclear. For medical professionals, this highlights a dangerous limitation: the AI is most effective only when the hardest part of the intellectual labor has already been performed by a human.

The research evaluated a broad spectrum of the most prominent models currently in use, including various iterations of ChatGPT, Claude, Gemini, and Grok. While the study noted that newer versions showed incremental progress, with PrIME-LLM scores rising from the low 60s to the high 70s, no model reached the threshold of proficiency required for unsupervised clinical use. Even the most advanced systems demonstrated a tendency to skip critical steps in the reasoning process or to suggest diagnostic tests that were irrelevant to the symptoms at hand. This indicates that while the raw capability of these models is increasing, their reasoning still lacks the step-by-step rigor that clinical practice demands. Healthcare systems must recognize that a higher accuracy rate in final diagnosis does not equate to a safer or more reliable diagnostic process. The gap remains wide between a tool that assists with documentation and one that can safely navigate the uncertainty of a patient’s first visit.

Strategies for Safe AI Implementation in Modern Hospitals

Oversight: Defining the Role of the Human in the Loop

Given the results of the PrIME-LLM evaluation, the immediate consensus among healthcare leaders is that artificial intelligence must remain an augmentative tool rather than an autonomous one. The concept of the human in the loop is no longer just a safety recommendation; it is a clinical necessity for any hospital integrating these models into its workflow in 2026. Physicians are uniquely trained to handle the ambiguity and nuances of patient communication, which often involves filtering out irrelevant information and identifying subtle physical cues that an AI cannot perceive. By positioning the AI as a support system that offers suggestions for the doctor to review, medical institutions can leverage the speed of the software while maintaining the rigorous oversight of a trained professional. This collaborative approach ensures that the reasoning gap identified in the study is filled by human expertise, preventing the model’s logical errors from reaching the patient and potentially causing diagnostic harm.

Furthermore, the integration of AI into clinical practice requires a fundamental shift in how medical professionals are trained to interact with digital assistants. Instead of treating the model’s output as a definitive answer, clinicians must be taught to critically evaluate the logic path the AI provides. The study’s findings emphasize that the current utility of these models lies in their ability to offer a broad range of possibilities that a tired or stressed physician might have overlooked. However, the responsibility for narrowing those possibilities and choosing the correct course of action remains firmly with the medical staff. This partnership allows for a more robust diagnostic process, where the AI serves as a check against cognitive biases while the human provides the necessary context and ethical judgment. As long as these models struggle with the early stages of clinical reasoning, the primary goal of their implementation should be to reduce the physician’s cognitive load rather than to replace their specialized decision-making.

Certification: Establishing Rigorous Standards for Model Deployment

As healthcare systems look toward the future, the development of standardized benchmarks like PrIME-LLM will be vital for ensuring the safety and efficacy of any AI tool introduced at the bedside. Hospital administrators and technology developers must move away from generic marketing claims and focus on specific performance metrics that reflect real-world clinical challenges. This involves testing models on their ability to handle incomplete data, contradictory test results, and the evolving nature of a patient’s condition over time. By establishing a rigorous certification process based on these reasoning-focused metrics, the medical community can create a clear hierarchy of models, identifying which are suitable for administrative tasks and which are robust enough for clinical decision support. This proactive stance on regulation and benchmarking is the only way to build the trust necessary for wide-scale adoption among medical professionals who are understandably skeptical of unproven technology.
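One way to picture a reasoning-focused certification gate is to require a minimum score on every competency instead of on an overall average. The sketch below is an assumption-laden illustration: the competency identifiers, the `ModelReport` structure, the threshold, and the scores are hypothetical, and nothing here reflects how PrIME-LLM actually computes or combines its scores.

```python
from dataclasses import dataclass

# The four competencies named in the article; the identifiers are hypothetical.
COMPETENCIES = ("differential", "test_selection", "final_diagnosis", "treatment_plan")


@dataclass(frozen=True)
class ModelReport:
    name: str
    scores: dict[str, float]  # fraction of cases handled correctly, 0.0 to 1.0


def mean_score(report: ModelReport) -> float:
    """Unweighted average across competencies (an assumed aggregate, for contrast)."""
    return sum(report.scores[c] for c in COMPETENCIES) / len(COMPETENCIES)


def certify(report: ModelReport, minimum: float) -> tuple[bool, list[str]]:
    """Pass only if every competency meets the minimum; also list the ones that fail."""
    failing = [c for c in COMPETENCIES if report.scores[c] < minimum]
    return (not failing, failing)


if __name__ == "__main__":
    # Illustrative numbers only; not results from the study.
    report = ModelReport(
        name="example-model",
        scores={
            "differential": 0.2,
            "test_selection": 0.7,
            "final_diagnosis": 0.9,
            "treatment_plan": 0.8,
        },
    )
    passed, failing = certify(report, minimum=0.6)
    print("average:", round(mean_score(report), 2))
    print("certified:", passed, "failing:", failing)
```

The design point is that an average can clear a threshold while a single weak competency, such as the early differential, stays hidden; a per-competency floor surfaces it.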

The path forward for AI in medicine from 2026 to 2028 will likely involve a move toward small language models that are trained on highly curated, domain-specific medical data. These specialized systems could potentially close the reasoning gap more effectively than the general-purpose models tested in the Mass General Brigham study. By focusing the training on high-quality clinical reasoning examples rather than the entirety of the internet, developers may be able to instill a more disciplined logic within the software. Additionally, the integration of multi-modal capabilities, in which the AI simultaneously analyzes text, medical imaging, and real-time biometric data, could provide a more holistic view of the patient, helping the model to navigate the early stages of a workup with greater accuracy. Until these advancements are fully realized and validated through clinical trials, the medical community must remain vigilant, treating every AI-generated suggestion as a hypothesis that requires thorough verification by a human expert.

The recent evaluation of large language models highlights that the transition from pattern recognition to clinical reasoning is far from complete. To address these findings, medical institutions need to implement structured protocols that prioritize human oversight in all diagnostic workflows involving generative software. Hospital leadership should integrate PrIME-LLM or similarly rigorous benchmarks into procurement processes, so that only models with proven reasoning capabilities reach the clinical floor. Developers, for their part, should focus on creating more transparent systems that display their logical progression, allowing doctors to spot errors in the early differential stages before they lead to incorrect testing. Such a period of cautious integration can foster a new standard of collaborative intelligence, where the focus is not on the independence of the machine but on the synergy between human intuition and digital data processing. Ultimately, the goal is a future in which AI serves as a reliable safety net, catching errors and suggesting alternatives, while the definitive art of healing remains a human endeavor.