Ivan Kairatov is a leading biopharma expert who specializes in the intersection of artificial intelligence and immunology. With an extensive background in research and development, he has spent years navigating the complexities of how technology can accelerate drug discovery and vaccine production. His recent work focuses on the validation of computational models in clinical settings, ensuring that high-tech simulations translate into tangible outcomes for patients. In this conversation, we explore the potential of meta-learning tools to revolutionize immune response prediction, the critical need for rigorous evaluation frameworks in AI-driven oncology, and the future of personalized medicine.

Meta-learning tools like PanPep aim to predict immune responses even when experimental data is scarce. How does this approach simulate scenarios for rare peptides, and what specific steps ensure these models remain accurate when encountering entirely new immune targets?

The beauty of a meta-learning model like PanPep lies in its ability to learn how to learn, which is vital when we are dealing with rare amino acid chains that haven’t been extensively documented in biological databases. To simulate scenarios for these rare peptides, the system analyzes patterns from known interactions and then adapts those insights to predict how an unseen target might bind with a T-cell receptor. The process involves a systematic meta-learning phase where the model is exposed to a variety of small, diverse datasets, allowing it to develop a generalized understanding of binding “rules.” When it encounters a completely new target, it uses a small amount of experimental data—often just a handful of samples—to refine its parameters specifically for that target. This fine-tuning step is what keeps the model from flying blind, ensuring it provides a reliable prediction rather than a generic guess based on unrelated data.
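To make the "learn how to learn" idea concrete, here is a toy sketch of the two phases described above: meta-training across many small tasks, then fine-tuning on a handful of samples from an unseen target. It uses Reptile, a simple first-order meta-learning algorithm, on a logistic-regression model in pure Python. This is a swapped-in illustration, not PanPep's architecture or training procedure; the feature vectors, `SHARED_RULE`, and all function names are hypothetical stand-ins for encoded peptide-TCR pairs.

```python
import math
import random

DIM = 4  # hypothetical size of an encoded (peptide, TCR) feature vector
SHARED_RULE = [1.5, -1.0, 0.5, 2.0]  # hypothetical binding "rule" common to all peptides


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(w, x):
    """Probability that feature vector x binds, under weights w (last entry is the bias)."""
    return sigmoid(sum(w[i] * x[i] for i in range(DIM)) + w[DIM])


def loss(w, data):
    """Mean binary cross-entropy over (x, y) pairs."""
    total = 0.0
    for x, y in data:
        p = min(max(predict(w, x), 1e-12), 1 - 1e-12)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(data)


def gd_steps(w, data, lr=0.5, steps=5):
    """Full-batch gradient descent on the logistic loss; returns new weights."""
    w = list(w)
    n = len(data)
    for _ in range(steps):
        grad = [0.0] * (DIM + 1)
        for x, y in data:
            err = predict(w, x) - y
            for i in range(DIM):
                grad[i] += err * x[i] / n
            grad[DIM] += err / n
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


def sample_task(rng):
    """One task = one peptide: the shared rule plus a peptide-specific perturbation."""
    rule = [s + rng.gauss(0, 0.3) for s in SHARED_RULE]

    def draw(n):
        samples = []
        for _ in range(n):
            x = [rng.uniform(-1, 1) for _ in range(DIM)]
            y = 1 if sum(r * xi for r, xi in zip(rule, x)) > 0 else 0
            samples.append((x, y))
        return samples

    return draw


def reptile(rng, meta_iters=200, inner_steps=5, meta_lr=0.1):
    """Reptile meta-training: nudge the shared init toward each task's adapted weights."""
    theta = [0.0] * (DIM + 1)
    for _ in range(meta_iters):
        draw = sample_task(rng)
        phi = gd_steps(theta, draw(10), steps=inner_steps)
        theta = [t + meta_lr * (p - t) for t, p in zip(theta, phi)]
    return theta


rng = random.Random(0)
theta = reptile(rng)                 # phase 1: learn a starting point from many small tasks
new_target = sample_task(rng)        # an "unseen" peptide
support = new_target(5)              # a handful of labeled samples
query = new_target(200)              # held-out samples for scoring
adapted = gd_steps(theta, support)   # phase 2: few-shot fine-tuning
baseline = gd_steps([0.0] * (DIM + 1), support)
print("query loss from meta-learned init:", round(loss(adapted, query), 3))
print("query loss from zero init:       ", round(loss(baseline, query), 3))
```

The comparison between the two printed losses is the point of interest: both models see only five labeled samples of the new target, and the only difference is the starting point. On toy data like this the size of any advantage depends on the random draw.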

AI tools in drug discovery can produce biased results if they aren’t tested under real-world conditions. What evaluation frameworks are necessary to validate peptide-HLA binding predictions, and how do these safeguards prevent misleading outcomes during the transition from computer simulations to clinical trials?

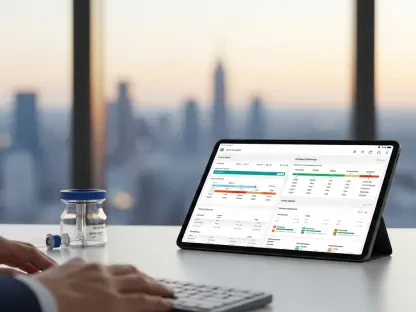

We cannot simply take an AI’s output at face value; we need a rigorous, multi-layered evaluation framework that mirrors the complexity of the human immune system. At the University of South Florida, our team developed a comprehensive testing structure that measures not just basic accuracy, but how well these models generalize across peptide-HLA binding and antigen-driven interactions. We look for metrics that reveal whether a model is overfitting to existing data or has actually learned the physical chemistry of the binding event. By subjecting the AI to “out-of-distribution” scenarios, in which the immune targets are entirely different from those in the training set, we can identify potential biases before they reach the laboratory. These safeguards are essential because a misleading result in a simulation could lead to a failed clinical trial, wasting years of research and millions of dollars on a therapy that doesn’t actually trigger the intended immune response.
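One safeguard mentioned here, testing on targets that never appear in the training set, can be sketched as a peptide-disjoint split. This is a generic illustration under stated assumptions, not the USF team's framework: records are hypothetical `(peptide, tcr, label)` tuples with random labels, and a deliberately overfit "memorizer" shows how the gap between seen-target and unseen-target accuracy exposes a model that has learned its training data rather than the binding event.

```python
import random


def peptide_disjoint_split(records, test_fraction=0.2, seed=0):
    """Split (peptide, tcr, label) records so that no test peptide appears in training.

    Holding out whole peptides (rather than random records) forces the test set
    to contain targets the model has never seen: one simple out-of-distribution check.
    """
    peptides = sorted({pep for pep, _, _ in records})
    rng = random.Random(seed)
    rng.shuffle(peptides)
    n_test = max(1, int(len(peptides) * test_fraction))
    held_out = set(peptides[:n_test])
    train = [r for r in records if r[0] not in held_out]
    test = [r for r in records if r[0] in held_out]
    return train, test


def accuracy(model, records):
    """Fraction of records where model(peptide, tcr) >= 0.5 matches the 0/1 label."""
    hits = sum(1 for pep, tcr, y in records if (model(pep, tcr) >= 0.5) == bool(y))
    return hits / len(records)


def generalization_gap(model, seen, unseen):
    """Accuracy on seen targets minus accuracy on unseen ones; a large gap flags overfitting."""
    return accuracy(model, seen) - accuracy(model, unseen)


# Toy data: hypothetical peptide/TCR IDs with random labels (no real signal at all).
rng = random.Random(1)
records = [(f"PEP{i:02d}", f"TCR{j:03d}", rng.randint(0, 1))
           for i in range(20) for j in range(10)]
train, test = peptide_disjoint_split(records)

# A "model" that simply memorizes training pairs and guesses 0.5 for anything new.
memory = {(pep, tcr): y for pep, tcr, y in train}


def memorizer(pep, tcr):
    return memory.get((pep, tcr), 0.5)


print("seen-target accuracy:  ", accuracy(memorizer, train))
print("unseen-target accuracy:", accuracy(memorizer, test))
print("gap:", generalization_gap(memorizer, train, test))
```

The memorizer scores perfectly on the peptides it was trained on, so its failure to generalize shows up entirely in the gap. A random record-level split would have mixed the same peptides into both sets and hidden much of that failure.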

Using AI to simulate oncology screenings could reduce treatment development timelines from years to just a few days. For a patient with late-stage cancer, what are the practical trade-offs of relying on these rapid simulations, and how should doctors balance speed with the need for biological verification?

The most significant trade-off is the tension between the immediate need for a life-saving intervention and the inherent uncertainty of a digital prediction. For a patient with stage-4 cancer, the ability to compress a screening process that usually takes months into just a few days is a genuine lifeline, offering hope where traditional timelines might fail. However, we must remain grounded; while these simulations are fast, they are not yet a substitute for biological verification. Doctors should use these rapid AI insights to prioritize which treatments to test in the lab first, effectively narrowing the “search space” of possible drugs. The goal is to move at the speed of the algorithm while maintaining a safety net of targeted experimental validation to ensure the treatment is both safe and effective for that specific individual.

Identifying the correct “trigger” peptides is essential for activating T-cells to attack a disease. How do computational models help narrow down candidates for laboratory testing, and what specific criteria determine whether a predicted T-cell receptor interaction is reliable enough to justify expensive biological experiments?

Computational models act as a high-throughput filter, sorting through thousands of potential amino acid combinations to find the few that are most likely to alert the immune system. We look for specific reliability criteria, such as the predicted binding affinity and the stability of the complex formed between the T-cell receptor and the peptide presented on HLA, which are the primary indicators of a successful “trigger.” If the model shows a high probability of a strong, stable interaction, that candidate moves to the top of the list for biological testing. By eliminating the vast majority of non-reactive peptides digitally, we save researchers from the grueling and expensive task of conducting large-scale laboratory assays on candidates that were never going to work. This focused approach ensures that when we finally do invest in a biological experiment, we are doing so with a much higher confidence level.
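The triage logic in this answer (keep only candidates whose predicted binding and complex stability both clear a bar, then rank the survivors) might be sketched as follows. The `Candidate` fields, the 0-to-1 score scales, the thresholds, and the product used for ranking are all hypothetical choices for illustration; the candidate IDs and scores are invented, and a real pipeline would take these numbers from trained predictors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    peptide: str
    binding_prob: float  # hypothetical predicted probability of binding, 0 to 1
    stability: float     # hypothetical normalized complex-stability score, 0 to 1


def shortlist(cands, min_binding=0.8, min_stability=0.6, top_k=3):
    """Drop candidates below either threshold, then rank the rest by a combined score."""
    passing = [c for c in cands
               if c.binding_prob >= min_binding and c.stability >= min_stability]
    # Higher combined score first; ties broken alphabetically so output is deterministic.
    passing.sort(key=lambda c: (-c.binding_prob * c.stability, c.peptide))
    return passing[:top_k]


# Invented scores for placeholder candidates, purely to exercise the function.
cands = [
    Candidate("cand-1", 0.95, 0.90),
    Candidate("cand-2", 0.90, 0.70),
    Candidate("cand-3", 0.85, 0.50),  # fails the stability bar
    Candidate("cand-4", 0.60, 0.90),  # fails the binding bar
    Candidate("cand-5", 0.82, 0.95),
]
for c in shortlist(cands):
    print(c.peptide, round(c.binding_prob * c.stability, 3))
```

With these invented inputs the shortlist is `cand-1`, `cand-5`, `cand-2`, in that order. Multiplying the two scores means a candidate must do reasonably well on both criteria; a weighted sum would be an equally plausible design choice.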

Adaptive immune cells rely on specific receptors to recognize harmful invaders like toxins or allergens. When dealing with complex antigen-driven interactions, how can researchers use AI to better understand how immune cells ingest and present these markers, and what are the primary hurdles in modeling this process digitally?

We use AI to decode the “presentation” side of the immune equation, specifically how cells break down invaders like viruses or tumor proteins and display those fragments on their surface. By modeling the way antigens are processed and presented, we can predict which specific fragments will be most visible to T and B cells, which is the cornerstone of a targeted defense. The primary hurdle, however, is the sheer variability of human biology; every person has a unique set of receptors, and modeling how an allergen or toxin behaves in every possible genetic context is a massive computational challenge. Furthermore, these interactions are dynamic—immune cells are constantly moving and changing—so capturing that temporal aspect in a static digital model requires incredibly sophisticated algorithms that we are still refining today.
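The first step of the presentation problem described here, deciding which fragments of a protein could be displayed at all, can be approximated naively by enumerating every 8- to 11-residue window (the typical length range for HLA class I ligands) and applying a motif filter. The filter below is a deliberately crude heuristic loosely based on the well-known HLA-A*02:01 preference for leucine or methionine at position 2 and valine or leucine at the C-terminus. It ignores proteasomal cleavage, peptide transport, and the person-to-person HLA variability the answer highlights, and the protein sequence is hypothetical.

```python
def candidate_fragments(protein, lengths=range(8, 12)):
    """Every contiguous fragment of the given lengths, as (0-based start, sequence)."""
    protein = protein.upper()
    return [(i, protein[i:i + k])
            for k in lengths
            for i in range(len(protein) - k + 1)]


def crude_a0201_filter(fragments, p2=frozenset("LM"), c_term=frozenset("VL")):
    """Keep fragments whose 2nd residue and last residue fall in simple anchor sets.

    A simplified stand-in for a real presentation predictor, which would learn
    far richer, allele-specific patterns from data.
    """
    return [(i, f) for i, f in fragments if f[1] in p2 and f[-1] in c_term]


protein = "MNLVPMVATVQGQ"  # hypothetical 13-residue sequence
frags = candidate_fragments(protein)
print(len(frags), "fragments;", crude_a0201_filter(frags))
```

For this sequence the window enumeration yields 18 fragments, and the filter keeps only `(1, 'NLVPMVATV')`. Supporting a different person's HLA type would mean swapping in a different motif or, realistically, a different trained model, which is one way to see why genetic variability makes this problem so computationally demanding.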

What is your forecast for the integration of AI-guided personalized cancer therapies and vaccines over the next decade?

Over the next ten years, I expect AI-guided therapy to move from an experimental research tool to a standard pillar of clinical oncology. We will likely see a shift where every late-stage cancer patient receives a personalized “immune map” generated by AI within days of their diagnosis, pinpointing the exact peptides needed to train their own immune system to fight back. As our frameworks for validation become more robust, the transition from computer simulation to personalized vaccine production will become seamless and incredibly fast. While we still need to bridge the gap between digital prediction and biological reality, the integration of tools like PanPep will significantly lower the barriers to entry for custom treatments. Ultimately, the next decade will be defined by our ability to move away from “one-size-fits-all” medicine and toward a future where every patient’s treatment is as unique as their own DNA.