The landscape of modern biotechnology is currently defined by a profound transition from the creation of isolated high-performance instruments to the development of fully unified experimental ecosystems. For many years, the pharmaceutical sector prioritized the acquisition of specialized tools that excelled in narrow tasks, yet these systems often operated as disconnected islands of automation, creating significant data silos and manual bottlenecks. This fragmented approach frequently resulted in a “data-rich but insight-poor” environment, where the speed of data generation far outpaced the ability of researchers to integrate and validate findings across different stages of the drug discovery pipeline. The recent shift toward “connectivity” represents a strategic overhaul of this traditional model, emphasizing a frictionless architecture that links complex biological models, robust data infrastructure, and advanced laboratory robotics into a single, cohesive workflow. By prioritizing this integrated framework, the industry is effectively dismantling the walls between early-stage hypothesis testing and large-scale clinical validation, ensuring that a discovery made at the laboratory bench can be rapidly translated into a scalable therapeutic candidate with minimal operational friction.

As laboratories continue to push the boundaries of high-throughput experimentation, the inherent limitations of manual processes have become a central concern for maintaining the integrity of experimental execution. Historically, a technique that proved successful on a small, controlled scale would often encounter significant reliability issues when expanded to process thousands of samples, primarily because human error becomes a statistical certainty at such high volumes. In this context, automation is no longer viewed merely as a luxury for increasing speed, but as a mandatory safeguard for ensuring experimental consistency and reproducibility. The newest generation of platforms, such as integrated liquid handling systems, now enables the consolidation of complex, multi-step sequences—like genomics library preparation—into entirely “hands-free” workflows that operate with a level of precision unattainable by human operators. This evolution is further accelerated by the rise of “accessible automation,” which features intuitive software interfaces that allow biological researchers to design and manage sophisticated robotic protocols without requiring a background in specialized engineering. This democratization of technology ensures that the intellectual capital of a laboratory is focused on high-level data interpretation and strategic decision-making rather than the repetitive physical tasks that traditionally dominated the research day.

Regulatory Standards and Clinical Integration

The migration of automated workflows from the flexible environment of early-stage research into the highly scrutinized realm of clinical trials introduces demanding requirements for transparency and documentation. In early discovery, scientists often value the ability to make rapid, iterative adjustments to their protocols; once a process enters the clinical biomarker stage, however, the priority shifts to traceability and defensibility. Leading organizations have recognized that for automation to survive regulatory audits, it must function as a “regulated production system” rather than a mere collection of laboratory tools. This means that every microliter of liquid moved and every thermal cycle performed must be digitally recorded and time-stamped, creating an immutable “source of truth” that serves as the foundation for clinical validation. This cultural and technological shift ensures that the automated pipeline is not just a means of generating data, but a verifiable record of scientific integrity that can withstand scrutiny from regulatory bodies worldwide.
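One way to picture a time-stamped, tamper-evident record of instrument actions is a hash-chained log, in which each entry stores a hash of the entry before it. The Python sketch below is illustrative only: the function names, the event fields (`action`, `details`, `volume_ul`, and so on), and the choice of hash chaining are assumptions made for this example, not a description of any vendor's system or of a specific regulatory requirement.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Hash an entry's contents deterministically (sorted keys)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(log, action, details, timestamp=None):
    """Append a time-stamped event that is chained to the previous entry."""
    body = {
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "action": action,
        "details": details,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = dict(body, hash=_digest(body))
    log.append(entry)
    return entry


def verify_log(log):
    """Return True only if every entry is intact and linked to its predecessor."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical events; field names are made up for illustration.
log = []
append_event(log, "aspirate", {"volume_ul": 12.5, "source_well": "A1"})
append_event(log, "thermal_cycle", {"cycle": 1, "temp_c": 95.0})
print(verify_log(log))  # True

log[0]["details"]["volume_ul"] = 15.0  # simulate after-the-fact tampering
print(verify_log(log))  # False
```

Because each entry commits to the hash of the one before it, editing an earlier record invalidates the chain from that point on. A production system would also need access control, secure storage, and protection against truncation, none of which this sketch attempts.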

The tangible benefits of adopting these rigorous, end-to-end automated systems are reflected in improved operational outcomes and success rates. For example, prominent collaborations between major technology providers and biotechnology pioneers have demonstrated that implementing highly regulated automation in clinical settings can lead to a roughly sixteen-fold reduction in experimental failure rates. By driving failure rates down from 6 percent to less than 0.4 percent, organizations are able to drastically reduce the administrative burden associated with investigating deviations and filing corrective action reports. This level of reliability is transformative for the industry, as it allows for a much smoother path to market by providing regulators with high-confidence datasets that are free from the noise and variability typically associated with manual intervention. Furthermore, the ability to maintain such high standards of consistency across global sites ensures that clinical data remains comparable and robust, regardless of where the physical execution takes place.
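As a quick arithmetic check, the two figures quoted above are consistent with each other. The calculation uses only the 6 percent starting rate, the 0.4 percent bound, and the sixteen-fold factor from the text:

```latex
\[
\frac{6\%}{16} = 0.375\% < 0.4\%,
\qquad
\frac{6\%}{0.4\%} = 15
\]
```

A sixteen-fold reduction from 6 percent therefore lands at 0.375 percent, which matches “less than 0.4 percent,” and any final rate below 0.4 percent implies a reduction of more than fifteen-fold.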

Data Quality and the Virtual Cell Initiative

The contemporary drug discovery environment is experiencing a significant shift in perspective regarding the value of data volume, moving away from the “bigger is better” mentality that characterized the early years of the genomic revolution. There is a growing skepticism among data scientists that simply amassing vast, noisy datasets will lead to better predictive models, especially when those datasets lack the biological signal necessary for accurate drug-response modeling. The industry is now pivoting toward a concept known as “scaled volume quality,” which emphasizes the generation of high-signal-to-noise measurements that provide a more granular understanding of cellular behavior. This approach is exemplified by initiatives like the Virtual Cell Pharmacology Initiative, which focuses on using specific techniques such as arrayed sequencing and high-resolution imaging to ensure that every data point can be directly and accurately attributed to a specific drug exposure or genetic perturbation. By prioritizing the quality of the input over the sheer quantity of observations, researchers are building a more reliable foundation for the next generation of predictive algorithms.

This movement toward higher data standards is inextricably linked to a broader commitment to open science and the sharing of standardized datasets across the global research community. Historically, the tendency for pharmaceutical companies to operate in silos led to the repeated discovery of the same errors and the waste of valuable resources on redundant experiments. Today, there is a burgeoning recognition that establishing a common definition of a “virtual cell”—a digital twin that can accurately simulate complex biological responses—requires a collective effort that transcends individual corporate interests. By contributing to shared data repositories and participating in collaborative model development, the industry is moving closer to creating predictive tools that can reliably bypass the traditional, costly cycles of trial-and-error experimentation. This collaborative spirit not only accelerates the pace of innovation but also ensures that the predictive models used to identify new drug targets are based on the most comprehensive and biologically relevant information available to the scientific community.

Bridging Physical Execution and Digital Design

A persistent challenge in modern R&D has been the disconnect between the sophisticated digital tools used to design experiments and the physical infrastructure required to execute them in the real world. While artificial intelligence and machine learning have advanced to the point where they can predict novel molecular structures and optimize complex experimental parameters in seconds, the actual synthesis and testing of these compounds often remain bottlenecked by traditional laboratory methods. To bridge this divide, laboratories are increasingly deploying collaborative robotic platforms that are designed to operate 24/7 alongside human researchers. These systems facilitate a continuous “Design-Make-Test-Analyze” cycle, where the output of a digital design phase is immediately fed into an automated physical system for execution. By ensuring that the speed of physical synthesis, plate handling, and analytical testing matches the pace of digital innovation, companies can dramatically shorten the time it takes to iterate on a promising lead, effectively turning the “virtual world” of AI-driven design into a tangible reality.

Beyond the implementation of robotics, the concept of the “autonomous laboratory” is further expanded by the integration of on-site reagent manufacturing technologies. For years, the speed of discovery was dictated by the lead times of external vendors, particularly in the procurement of custom DNA sequences and oligonucleotides. The advent of enzymatic DNA synthesis technology has changed this dynamic by allowing researchers to “print” their own genetic material directly at the bench. This decentralized manufacturing capability is particularly critical for fast-moving research areas like mRNA therapeutics and synthetic biology, where the ability to move from a sequence design to a physical experiment in a matter of hours provides a massive competitive advantage. By eliminating the logistical delays associated with shipping and external procurement, laboratories are becoming increasingly self-sufficient, allowing for a level of experimental agility that was previously impossible. This integration of on-site production with automated execution represents the final piece of the puzzle in creating a truly autonomous and responsive drug discovery environment.

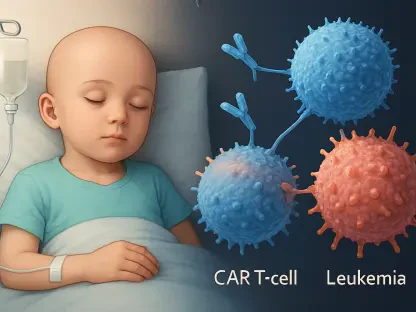

Advancing Human-Relevant Biological Models

The drive to improve the success rate of new drugs is fueling a transition away from traditional animal models toward more sophisticated, human-relevant biological systems. New Approach Methodologies, many of which rely on human induced pluripotent stem cells (iPSCs), offer a way to study disease mechanisms and drug toxicities in a context that more accurately reflects human physiology. Technologies such as 3D brain neurospheres and organ-on-a-chip platforms allow researchers to observe complex cellular interactions and systemic responses in a high-throughput format that was once the exclusive domain of simpler, less predictive models. However, the inherent complexity of these advanced biological systems introduces new challenges regarding experimental variability and the need for rigorous standardization. Unlike standardized cell lines, these stem-cell-derived models require precise environmental controls and specialized media to remain viable and consistent, making their integration into large-scale screening efforts a significant technical undertaking.

Successfully standardizing these complex assays is increasingly viewed as a “team sport” that requires deep cooperation between biological researchers, media developers, and instrument manufacturers. The goal is to ensure that a sophisticated organ-on-a-chip model developed in one laboratory can be reproduced with identical results in another facility halfway across the world. This necessitates the creation of standardized protocols for cell differentiation, maintenance, and data acquisition, as well as the development of specialized automated hardware that can handle the delicate nature of 3D biological structures. While the cost and complexity of managing these human-relevant models are higher than traditional methods, the industry consensus is that their superior predictive power makes them an essential investment. By determining therapeutic safety and efficacy with greater precision before human trials even begin, the industry can significantly reduce the risk of late-stage clinical failures, ultimately leading to a more efficient and ethical drug development process.

Translating Integrated Platforms into Clinical Success

The ultimate validation of these integrated discovery platforms is their ability to successfully transition a biological hypothesis into a clinical reality. A prime example of this success can be seen in recent programs targeting genomic and chromosomal instability, particularly those focusing on proteins like KIF18A. By utilizing a highly coordinated approach that blends deep biological insights with automated chemistry and high-resolution data analysis, researchers have been able to move these complex programs from the discovery stage into Phase I and II clinical trials with remarkable speed. This achievement is not merely the result of a single technological breakthrough, but the product of a unified strategy where every department—from engineering to informatics to wet-lab biology—operates as a single, cohesive unit. This multidisciplinary collaboration allows for the rapid identification of specific cancer vulnerabilities and the development of “best-in-class” therapeutics that are tailored to the molecular profile of the disease, demonstrating the tangible impact of an integrated pipeline on patient outcomes.

As the pharmaceutical industry looks toward the future, the lessons learned from the integration of these technologies provide a clear roadmap for the next generation of medical breakthroughs. The shift from isolated tools to interconnected ecosystems has moved the industry into a “mature phase” of technological adoption, where the primary focus is now on the stability, reliability, and connectivity of the entire research pipeline. To remain competitive, organizations must continue to invest in the infrastructure and talent required to maintain these sophisticated workflows, ensuring that their discovery processes are as consistent as they are innovative. The transition toward a more automated, data-driven, and human-relevant approach to drug discovery has already begun to yield significant dividends in terms of reduced costs and accelerated timelines. Moving forward, the continued refinement of these integrated platforms will be the defining factor in the industry’s ability to address the most challenging and complex diseases facing humanity, turning the promise of precision medicine into a practical reality for patients worldwide.