The modern biological revolution is no longer written solely in the sequences of the genome but in the intricate, shifting patterns of proteins that define every breath and heartbeat we experience. For years, the scientific community struggled with a deluge of raw information produced by high-resolution mass spectrometry, often resulting in isolated “data silos” that hindered collective progress. The ProteomeXchange Consortium emerged as a necessary intervention, transforming these fragmented efforts into a cohesive, global infrastructure. This review examines how the consortium has shifted from a mere storage solution to a sophisticated engine for data stewardship, ensuring that every byte of proteomic information is findable and reusable for the broader benefit of medical science.

Unlike earlier attempts at bioinformatic centralization, this framework does not rely on a single, monolithic entity. Instead, it operates as a federated network of specialized repositories, each catering to different regional or technical requirements while adhering to a unified set of protocols. This evolution reflects a broader trend in the technological landscape toward decentralization, where the value of a system is defined by its interoperability rather than its isolation. By providing a standardized gateway for data submission and discovery, the consortium has effectively democratized access to high-tier proteomics research, allowing small laboratories to contribute to and draw from a global pool of knowledge.

The Evolution of Proteomics Data Stewardship

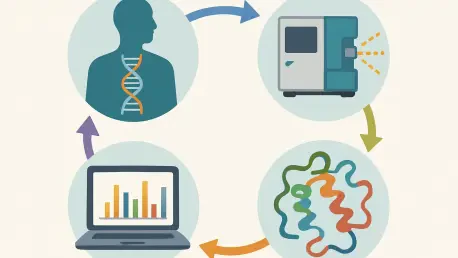

The transition toward a standardized proteomics environment was driven by the realization that raw mass spectrometry data is functionally useless without context. In the early days of the field, researchers often published findings without providing the underlying evidence in a format others could verify. The core principle of ProteomeXchange is the “submission once, spread everywhere” philosophy, which mandates that datasets be accompanied by essential metadata. This context includes everything from the biological source and experimental design to the specific settings of the instruments used, ensuring that results are not just reported but are fundamentally reproducible.

In the current technological landscape, this stewardship is more than a clerical necessity; it is a prerequisite for the survival of the discipline. As datasets grow in size and complexity, the ability to navigate them efficiently has become a competitive advantage for research institutions. The consortium has moved beyond simple archival duties to become a gatekeeper of data quality. This shift ensures that the global research community can rely on a baseline of integrity, preventing the proliferation of “dark data” that remains hidden in proprietary formats or forgotten servers.

Core Infrastructure and Standardization Protocols

The Global Repository Ecosystem

The infrastructure of the consortium is defined by its diverse member repositories, such as PRIDE, MassIVE, and iProX, which serve as the physical homes for the data. Each repository brings a unique strength to the system; for instance, PRIDE acts as the primary hub for general proteomics, while others like jPOST or Panorama Public focus on specific regional needs or targeted experimental workflows. This division of labor prevents any single point of failure and allows for specialized support that a centralized global database could never provide. The performance of this ecosystem is measured by its massive throughput, now handling tens of thousands of datasets with seamless cross-referencing.

What makes this implementation unique is the level of coordination required to keep these independent entities in sync. When a researcher submits a dataset to one repository, a ProteomeXchange identifier (a PXD accession) is issued, which acts as a permanent, citable identifier across all member sites. This ensures that even as repositories update their internal architectures, the link between the scientific publication and the data remains intact. This ecosystem has largely solved the problem of data fragmentation, creating a unified virtual library that spans continents and jurisdictions.
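Because the identifier is what ties a publication to its data, it helps to be able to recognize one in text. The following is a minimal sketch in Python, assuming accessions take the form "PXD" plus six digits, with an optional leading "R" for reprocessed datasets. The pattern and the helper names `parse_px_id` and `find_px_ids` are illustrative assumptions, not part of any consortium tooling.

```python
import re

# Assumed accession shape: "PXD" + six digits, optionally prefixed with "R"
# for reprocessed datasets. Verify against the consortium's documentation.
PX_ID_PATTERN = re.compile(r"^(R?PXD)(\d{6})$")


def parse_px_id(accession: str) -> dict:
    """Split a ProteomeXchange-style accession into its parts (hypothetical helper)."""
    match = PX_ID_PATTERN.match(accession.strip())
    if match is None:
        raise ValueError(f"not a recognised ProteomeXchange accession: {accession!r}")
    prefix, digits = match.groups()
    return {
        "accession": prefix + digits,
        "reprocessed": prefix.startswith("R"),
        "number": int(digits),
    }


def find_px_ids(text: str) -> list[str]:
    """Return accessions mentioned in free text, in order, without duplicates."""
    seen: list[str] = []
    for hit in re.findall(r"\bR?PXD\d{6}\b", text):
        if hit not in seen:
            seen.append(hit)
    return seen
```

A helper like `find_px_ids` could be run over a manuscript's data availability statement to check that every cited dataset carries a well-formed accession.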

Metadata Standards and Technical Frameworks

Standardization is the “invisible labor” that makes high-level proteomics possible. The consortium utilizes the Sample and Data Relationship Format (SDRF)-Proteomics to bridge the gap between biological samples and digital outputs. This framework matters because it allows for machine-readable descriptions of experimental conditions, which is essential for any automated analysis. Without these standards, a computer would see a list of protein names without understanding if they came from a healthy heart or a cancerous lung, rendering large-scale comparisons impossible.
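To make the healthy-heart versus cancerous-lung example concrete, the sketch below reads a small SDRF-style table and groups data files by tissue and disease. It assumes a tab-delimited layout with bracketed column names such as `characteristics[disease]` and `comment[data file]`. The sample names, file names, and values are invented for illustration, and `files_by_condition` is a hypothetical helper.

```python
import csv
import io
from collections import defaultdict

# Hypothetical SDRF-like table: every value below is invented for illustration.
SDRF_TEXT = (
    "source name\tcharacteristics[organism]\tcharacteristics[organism part]\t"
    "characteristics[disease]\tassay name\tcomment[data file]\n"
    "sample 1\tHomo sapiens\theart\tnormal\trun 1\theart_normal_01.raw\n"
    "sample 2\tHomo sapiens\tlung\tlung cancer\trun 2\tlung_tumor_01.raw\n"
    "sample 3\tHomo sapiens\tlung\tlung cancer\trun 3\tlung_tumor_02.raw\n"
)


def files_by_condition(sdrf_text: str) -> dict:
    """Group data files by (organism part, disease) so runs can be compared by condition."""
    reader = csv.DictReader(io.StringIO(sdrf_text), delimiter="\t")
    groups = defaultdict(list)
    for row in reader:
        key = (row["characteristics[organism part]"], row["characteristics[disease]"])
        groups[key].append(row["comment[data file]"])
    return dict(groups)


if __name__ == "__main__":
    for condition, files in files_by_condition(SDRF_TEXT).items():
        print(condition, files)
```

One caveat on this design: `csv.DictReader` keeps only the last value when a header is repeated, so a table with duplicate column names would need a list-based reader instead.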

Furthermore, the introduction of Universal Spectrum Identifiers (USIs) represents a significant technical milestone. A USI functions as a digital fingerprint for a specific mass spectrum, allowing researchers to point to an exact piece of evidence within a massive dataset. This level of granularity facilitates a new kind of transparency in peer review, where an editor can instantly visualize the specific data point that supports an author’s claim. By implementing these technical frameworks, the consortium has moved the field away from “trust-based” science toward a “verification-based” model, where every conclusion is backed by traceable, high-fidelity evidence.
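As a rough illustration of how a USI pins down a single spectrum, the sketch below splits an identifier into its parts. It assumes the colon-delimited layout `mzspec:<collection>:<run>:<index type>:<index>[:<interpretation>]` and a run name that contains no colons, which is a simplification. The example identifier, its run name, and the `parse_usi` helper are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SpectrumReference:
    collection: str                # dataset accession, e.g. a PXD identifier
    ms_run: str                    # name of the run file within the dataset
    index_type: str                # how the spectrum is addressed, e.g. "scan"
    index: str                     # kept as text; some index types are not plain integers
    interpretation: Optional[str]  # optional peptide/charge annotation


def parse_usi(usi: str) -> SpectrumReference:
    """Parse a USI-style string (simplified: assumes no colons in the run name)."""
    # Split at most five times so colons inside the interpretation survive.
    parts = usi.split(":", 5)
    if len(parts) < 5 or parts[0] != "mzspec":
        raise ValueError(f"not a USI-style identifier: {usi!r}")
    _, collection, ms_run, index_type, index = parts[:5]
    interpretation = parts[5] if len(parts) == 6 else None
    return SpectrumReference(collection, ms_run, index_type, index, interpretation)


if __name__ == "__main__":
    # Hypothetical identifier; the run name and peptide are placeholders.
    print(parse_usi("mzspec:PXD000001:example_run:scan:1234:PEPTIDEK/2"))
```

With the parts separated, a reviewer's tool could resolve the collection and run to a repository file and then fetch the one spectrum at the given index.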

Emerging Trends in Data-Driven Discovery

One of the most significant shifts in the industry is the transition from data as a byproduct to data as a primary research asset. We are seeing a move toward “re-analysis-first” workflows, where scientists spend more time mining existing public datasets than they do at the laboratory bench. This trend is driven by the sheer volume of available information; with tens of thousands of datasets online, many biological questions can be answered by looking at existing data through a new lens. This shift is not just a change in behavior but a fundamental evolution in how scientific value is generated.

Moreover, there is an increasing emphasis on the “FAIRness” of data—making it Findable, Accessible, Interoperable, and Reusable. Industry leaders are now treating metadata quality as a metric of institutional prestige. As we look toward the 2026 to 2028 window, the focus is expected to shift even further toward automated data curation. The goal is to reach a state where a dataset can be automatically indexed and integrated into global knowledge graphs without human intervention, allowing for a truly frictionless flow of biological information across different scientific domains.

Real-World Applications and Multi-Omics Integration

The true impact of this technology is visible in the rapid acceleration of cancer research and drug discovery. By integrating proteomic datasets with genomic and transcriptomic information—a process known as multi-omics—researchers can see the full picture of how a disease operates. While the genome provides the blueprint, the proteome reveals the actual machinery in motion. This integrated approach has allowed scientists to identify new biomarkers for early disease detection that were invisible when looking at DNA alone, leading to more targeted and effective therapies.

In clinical settings, this integration is being used to personalize patient care. For example, by comparing a patient’s protein profile against thousands of similar profiles in the ProteomeXchange archives, doctors can predict how a specific tumor might respond to a particular chemotherapy drug. These real-world applications demonstrate that the consortium is not just a technical project for bioinformaticians; it is a vital tool for the medical community. The ability to cross-reference experimental data with clinical outcomes is turning proteomics from a descriptive science into a predictive one.

Technical Barriers and Regulatory Hurdles

Despite its successes, the consortium faces significant hurdles, particularly regarding the privacy of human data. As proteomic signatures can be as unique as a fingerprint, sharing detailed protein profiles of patients raises serious ethical and legal concerns under frameworks like the GDPR. The challenge lies in maintaining the principles of open science while protecting individual anonymity. Current development efforts are focused on creating “controlled-access” tiers, where sensitive data is shielded behind a layer of authorization, allowing verified researchers to work with the data without exposing it to the general public.
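The idea of a controlled-access tier can be sketched as a simple authorization check. This is only an illustration of the concept described above, not how any member repository implements it. The `AccessTier`, `Dataset`, and `can_download` names, and the user identifiers, are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class AccessTier(Enum):
    OPEN = "open"              # anyone may download
    CONTROLLED = "controlled"  # only approved researchers may download


@dataclass
class Dataset:
    accession: str
    tier: AccessTier
    approved_users: set = field(default_factory=set)


def can_download(dataset: Dataset, user_id: Optional[str]) -> bool:
    """Return True if this user may retrieve the dataset's files."""
    if dataset.tier is AccessTier.OPEN:
        return True
    # Controlled tier: anonymous users and unapproved accounts are refused.
    return user_id is not None and user_id in dataset.approved_users


if __name__ == "__main__":
    cohort = Dataset("PXD000002", AccessTier.CONTROLLED, {"researcher-17"})
    print(can_download(cohort, "researcher-17"), can_download(cohort, None))
```

In practice the approval step would sit with a data access committee, and the metadata describing the dataset could remain publicly searchable even when the files themselves are gated.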

Another limitation is the platform’s historical focus on mass spectrometry. Newer technologies, such as affinity-based assays, generate data in entirely different formats that the current ProteomeXchange infrastructure was not originally designed to handle. This creates a risk of a new kind of fragmentation, where non-mass spectrometry data is left out of the global ecosystem. To remain relevant, the consortium must rapidly adapt its standards to include these emerging technologies, ensuring that it remains the central hub for all protein-related research regardless of the experimental method used.

Future Outlook: AI Integration and Clinical Expansion

The future of this technology is inextricably linked to the rise of artificial intelligence. High-quality, standardized datasets are the essential fuel for machine learning models that can predict protein structures and interactions with unprecedented accuracy. We are moving toward a period where AI will be used to “clean” and annotate incoming data automatically, correcting errors in metadata and identifying patterns that human researchers might miss. This integration will likely lead to a breakthrough in “digital twin” technology, where a patient’s entire biological system can be simulated in a computer to test treatments before they are administered in real life.

Looking ahead, we can expect the consortium to expand its reach into the clinical routine. As protein sequencing becomes faster and cheaper, hospitals may eventually upload anonymized patient proteomes directly to secure repositories for real-time analysis. This would create a global “early warning system” for new diseases and allow for the rapid sharing of information during public health crises. The long-term impact of this technology will be a world where the molecular details of life are no longer a mystery but a transparent, searchable, and actionable map of human health.

Assessment of the Consortium’s Impact

The ProteomeXchange Consortium functions as the essential connective tissue for a field that was once defined by its fragmentation. By establishing a culture of transparency and a technical framework for cooperation, the initiative has allowed for a level of global collaboration that was previously unimaginable. The transition from simple data hosting to sophisticated stewardship has been a critical step in making proteomics a pillar of modern medicine. The value of the consortium lies not just in the volume of the data it holds, but in the rigorous standards that make that data meaningful for researchers across different disciplines and borders.

The consortium has shifted the burden of proof from individual researchers to a verifiable, public record of evidence. This work provides the foundation for the next generation of AI-driven biological discovery and multi-omics integration. While challenges regarding data privacy and the inclusion of new technologies remain, the groundwork laid by the consortium has proved resilient. Ultimately, the project demonstrates that when the scientific community prioritizes shared standards over proprietary interests, the pace of discovery accelerates for the benefit of all humanity.