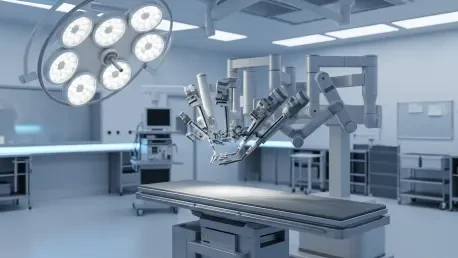

The delicate dance of a robotic scalpel within the human body represents the pinnacle of modern engineering, yet even the most sophisticated systems remain blind to what lies beneath the immediate surface of biological tissue. While the industry has celebrated the transition from invasive open procedures to refined robotic platforms, a persistent “hidden hazard” continues to haunt the operating room. Surgeons currently navigate complex anatomical landscapes using high-definition cameras that provide exceptional surface clarity but offer zero transparency into the subsurface. This limitation often forces medical professionals to rely on intuition and anatomical averages rather than real-time data, leaving critical neurovascular bundles vulnerable to accidental damage. Addressing these surgical blind spots has become the primary focus of research led by Kai Zhang at Worcester Polytechnic Institute. His work, supported by key industry players seeking high-precision instruments, aims to transform how robots perceive the human body, shifting them from mere mechanical assistance toward true cognitive partnership.

The New Frontier of Robot-Assisted Surgery and the Quest for Subsurface Visualization

The current state of minimally invasive surgery is defined by an aggressive shift toward robotic-assisted platforms that promise shorter recovery times and reduced patient trauma. These systems provide a level of dexterity and tremor suppression that far exceeds human capabilities. However, the reliance on laparoscopy introduces a fundamental visual constraint: the camera only sees the “skin” of internal organs. Without visibility into the structures beneath that surface, surgeons often operate near hazards they cannot see. When a surgeon cannot distinguish between a layer of benign fat and a critical artery buried millimeters beneath it, the risk of a catastrophic event remains uncomfortably high.

Kai Zhang’s research at Worcester Polytechnic Institute targets this exact vulnerability by introducing an intraoperative guidance system designed to illuminate what was previously obscured. By focusing on subsurface visualization, the research addresses a leading cause of intraoperative complications in pelvic and abdominal surgeries. Major manufacturers in the robotic space now recognize that the next generation of instruments must do more than cut and suture; they must inform. The push for high-precision, robot-assisted surgical instruments is driving market-wide demand for integrated sensing technologies that can map each patient’s unique internal landscape in real time, greatly reducing the guesswork that has characterized surgery for decades.

Technological Convergence: Merging Photoacoustics with Surgical Robotics

Bridging the Gap Between Optical Clarity and Ultrasonic Depth

The integration of photoacoustic imaging into robotic workflows represents a masterstroke of technological convergence, effectively marrying the contrast of light with the penetration of sound. The process works by delivering nanosecond-duration pulses of laser light through a fiber optic probe to the surgical site. As this light enters the tissue, it is absorbed by specific chromophores, such as hemoglobin in the blood. This absorption triggers a rapid, localized thermoelastic expansion, which generates ultrasonic waves that travel back through the tissue to an acoustic sensor. Because ultrasound waves scatter significantly less than light when passing through dense biological structures, the system can image deeper tissue with remarkable clarity, identifying neurovascular bundles that are otherwise invisible to the standard laparoscopic lens.
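For readers who want the physics in numbers, the signal chain above can be sketched with two textbook relations: the initial pressure rise p0 = Γ·μa·F (Grüneisen parameter × optical absorption coefficient × local fluence), and depth from one-way time of flight, d = c·t. This is a minimal sketch; the Grüneisen value, the 1540 m/s speed of sound, and the absorber numbers are illustrative assumptions, not parameters of the WPI system.

```python
# Back-of-envelope photoacoustic signal model; all numbers are illustrative assumptions.

SPEED_OF_SOUND_M_S = 1540.0  # assumed average speed of sound in soft tissue
GRUNEISEN = 0.2              # assumed Grüneisen parameter for soft tissue


def initial_pressure_pa(mu_a_per_m: float, fluence_j_per_m2: float,
                        gruneisen: float = GRUNEISEN) -> float:
    """Initial pressure rise p0 = Γ · μa · F, valid when the pulse ends before
    pressure can propagate out of the heated region (stress confinement)."""
    return gruneisen * mu_a_per_m * fluence_j_per_m2


def source_depth_m(arrival_time_s: float,
                   speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Depth from one-way time of flight: sound travels only absorber -> sensor."""
    return speed_m_s * arrival_time_s


if __name__ == "__main__":
    # Hypothetical absorber: μa = 4 /cm (400 /m), local fluence 10 mJ/cm² (100 J/m²)
    p0 = initial_pressure_pa(400.0, 100.0)
    print(f"initial pressure ≈ {p0 / 1000:.1f} kPa")   # 8.0 kPa
    # Hypothetical arrival time of 13 µs after the laser pulse
    depth = source_depth_m(13e-6)
    print(f"absorber depth ≈ {depth * 1000:.1f} mm")    # 20.0 mm
```

The one-way timing is the key difference from conventional pulse-echo ultrasound, where the pulse makes a round trip and depth is c·t/2; here the light arrives essentially instantly, so only the return leg counts.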

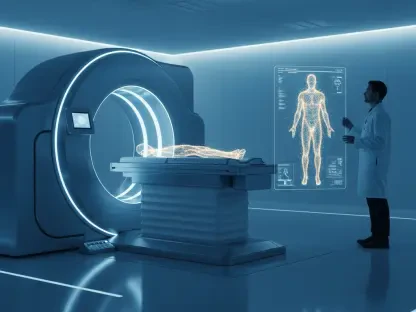

The evolution of miniaturized photoacoustic probes has allowed these sensors to fit within the standard trocars used in robot-assisted surgery. When these probes are paired with augmented reality interfaces, the resulting “augmented vision” allows the surgeon to see a 3D digital overlay of the patient’s vascular and nervous systems directly on the live surgical feed. This creates a navigational map that updates as the surgeon moves, ensuring that every incision is informed by the precise location of underlying structures. This shift in clinician behavior is profound, as surgeons move away from a reactive posture toward a proactive, data-driven approach where every movement is verified by subsurface feedback.
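Placing a vessel map on the live feed comes down to projecting 3D points into the laparoscope image. The sketch below assumes a calibrated, zero-skew pinhole camera with intrinsic matrix K and a known probe-to-camera pose (R, t), for example from robot kinematics or tracking; it ignores lens distortion, and the function name is hypothetical rather than taken from any surgical platform's API.

```python
import numpy as np


def project_to_image(points_probe: np.ndarray, R: np.ndarray, t: np.ndarray,
                     K: np.ndarray) -> np.ndarray:
    """Project 3D points (N×3, probe frame, metres) to pixel coordinates (N×2).

    R (3×3) and t (3,) map probe-frame points into the camera frame;
    K is the 3×3 pinhole intrinsic matrix (zero skew, no lens distortion).
    """
    points_cam = points_probe @ R.T + t               # rigid transform into camera frame
    if np.any(points_cam[:, 2] <= 0):
        raise ValueError("points must lie in front of the camera (Z > 0)")
    homogeneous = points_cam @ K.T                    # apply intrinsics to each point
    return homogeneous[:, :2] / homogeneous[:, 2:3]   # perspective divide by depth


if __name__ == "__main__":
    # Hypothetical intrinsics: 800 px focal length, principal point at (320, 240)
    K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
    # Hypothetical pose: probe frame 5 cm in front of the camera, axes aligned
    vessel_points = np.array([[0.01, 0.0, 0.05], [0.0, -0.005, 0.05]])
    print(project_to_image(vessel_points, np.eye(3), np.array([0.0, 0.0, 0.05]), K))
```

In a real overlay the pose would be refreshed every frame as the robot moves; the projection itself is cheap, so the hard part is keeping R and t accurate.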

Quantitative Impact of Minimizing Surgical Complications and Market Expansion

From both a clinical and a financial perspective, the burden of surgical errors is staggering, with accidental vascular damage occurring in approximately 1% to 2% of laparoscopic cases. While these percentages may seem small, they represent thousands of incidents annually that lead to prolonged hospital stays, secondary surgeries, and significant legal liabilities for healthcare providers. Data from 2026 suggests that the integration of subsurface imaging can reduce these error rates by more than half, drastically improving the economic viability of robotic programs. Market projections for the 2026 to 2030 period indicate that integrated imaging modalities will be the fastest-growing segment of the global surgical robotics market, as hospitals prioritize systems that offer demonstrable safety advantages.
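To make the percentages concrete, the arithmetic below applies the article's figures (a 1% to 2% injury rate and a reduction of more than half) to a hypothetical caseload of 10,000 laparoscopic procedures per year; the caseload is an assumption chosen for round numbers, not data from any hospital.

```python
def expected_injuries(cases: int, rate: float, relative_reduction: float = 0.0) -> float:
    """Expected injuries = cases × baseline rate × (1 − relative reduction)."""
    if not (0.0 <= rate <= 1.0 and 0.0 <= relative_reduction <= 1.0):
        raise ValueError("rate and relative_reduction must be fractions in [0, 1]")
    return cases * rate * (1.0 - relative_reduction)


if __name__ == "__main__":
    CASES = 10_000  # hypothetical annual caseload, not a figure from the article
    for rate in (0.01, 0.02):  # the 1% to 2% range quoted in the text
        baseline = expected_injuries(CASES, rate)
        halved = expected_injuries(CASES, rate, relative_reduction=0.5)
        print(f"{rate:.0%}: {baseline:.0f} injuries/year, {halved:.0f} after a 50% reduction")
```

At that volume the baseline is 100 to 200 injuries per year, and a 50% reduction would avoid 50 to 100 of them.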

Performance indicators suggest that patient recovery times are significantly shorter when subsurface injuries are avoided, primarily because the body does not have to recover from unintended trauma or hemorrhaging. Furthermore, the adoption of hybrid optical-acoustic systems is expected to become the gold standard for radical prostatectomies and complex pelvic surgeries, where the margin for error is particularly slim. As healthcare systems globally move toward value-based care models, the ability to avoid complications through superior visualization becomes a key differentiator for medical institutions seeking to optimize patient outcomes and minimize long-term morbidity costs.

Overcoming Structural Obstacles in High-Stakes Clinical Environments

The path to widespread adoption is not without technological hurdles, particularly regarding the real-time processing of complex signals. The raw data generated by photoacoustic sensors is immense, and converting these signals into a clear 3D model without latency requires significant computational power. Miniaturizing the necessary laser-acoustic hardware so that it does not impede the surgeon’s range of motion remains a secondary challenge. To be effective, the system must integrate seamlessly into the existing surgical workflow, meaning the probe must be easily maneuverable and the data interpretation must be automated so that it does not distract the surgical team from the primary task at hand.
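One reason the processing load is heavy is that every image pixel must be reconstructed from every sensor channel. Delay-and-sum beamforming, a standard baseline reconstruction (not necessarily the method used in Zhang's system), illustrates this: for each pixel, each channel is sampled at the one-way travel time from that pixel to the element, and the samples are summed. The sketch assumes a linear array lying along z = 0, a uniform speed of sound, and time zero at the laser pulse.

```python
import numpy as np


def delay_and_sum(rf: np.ndarray, element_x: np.ndarray, fs: float,
                  grid_x: np.ndarray, grid_z: np.ndarray,
                  c: float = 1540.0) -> np.ndarray:
    """Naive photoacoustic delay-and-sum reconstruction on a 2D grid.

    rf:        (n_elements, n_samples) channel data, sample 0 at the laser pulse
    element_x: (n_elements,) lateral element positions in metres; array lies on z = 0
    fs:        sampling rate in Hz
    grid_x, grid_z: 1D pixel coordinates in metres
    c:         assumed uniform speed of sound in m/s
    Returns an image of shape (len(grid_z), len(grid_x)).
    """
    n_elements, n_samples = rf.shape
    xx, zz = np.meshgrid(grid_x, grid_z)          # pixel coordinates, shape (nz, nx)
    image = np.zeros(xx.shape)
    for i in range(n_elements):
        # One-way path pixel -> element (no transmit leg, unlike pulse-echo ultrasound)
        dist = np.hypot(xx - element_x[i], zz)
        idx = np.rint(dist / c * fs).astype(int)  # nearest time sample
        valid = idx < n_samples                   # ignore delays beyond the record
        image[valid] += rf[i, idx[valid]]
    return image
```

The cost scales with pixels × channels for every frame, which is why real-time systems typically vectorise this loop or move it onto a GPU.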

Strategically, the industry is moving toward digital landmarks and automated modeling to simplify the user interface. By using artificial intelligence to interpret raw data and highlight “no-go zones,” the system reduces the cognitive load on the surgeon. This automation is vital for maintaining efficiency in high-stakes environments where every second counts. Moreover, real-time subsurface mapping acts as a safety net, reducing the risk of unplanned conversion to open surgery after an accidental vessel injury. When a surgeon knows exactly where a major vessel lies, they can navigate around it with confidence, preserving the minimally invasive nature of the procedure and ensuring a higher standard of care.
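Once vessels have been segmented (by AI or otherwise), a “no-go zone” check can be as simple as measuring the tool tip's clearance to the nearest mapped vessel point. The sketch below assumes the tip and the vessel point cloud share one coordinate frame, and its 5 mm warning and 2 mm stop margins are hypothetical values, not clinical thresholds.

```python
from __future__ import annotations

import numpy as np

WARN_MARGIN_M = 0.005  # hypothetical 5 mm warning margin
STOP_MARGIN_M = 0.002  # hypothetical 2 mm hard-stop margin


def proximity_alert(tool_tip: np.ndarray, vessel_points: np.ndarray,
                    warn: float = WARN_MARGIN_M,
                    stop: float = STOP_MARGIN_M) -> tuple[str, float]:
    """Classify the tool tip's clearance (metres) from the nearest vessel point.

    tool_tip: (3,) position; vessel_points: (N, 3) segmented vessel map.
    Returns ("stop" | "warn" | "clear", clearance).
    """
    # Brute-force Euclidean distance from the tip to every mapped vessel point
    clearance = float(np.min(np.linalg.norm(vessel_points - tool_tip, axis=1)))
    if clearance < stop:
        return "stop", clearance
    if clearance < warn:
        return "warn", clearance
    return "clear", clearance
```

The brute-force distance computation is fine for a few thousand points; for dense maps, a spatial index such as SciPy's `cKDTree` would help keep the check within a control-loop budget.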

Navigating the Regulatory Landscape for Next-Generation Medical Devices

Ensuring compliance with international standards for laser safety and ultrasonic imaging is a critical step in the commercialization of these augmented vision tools. Regulatory bodies such as the FDA have established rigorous protocols for intraoperative monitoring, particularly when high-energy lasers are involved in close proximity to sensitive tissue. Manufacturers must demonstrate that the heat generated by the photoacoustic process is negligible and poses no risk of thermal injury to the patient. Additionally, as these robotic systems become more connected, data security and the protection of patient-specific anatomical maps have become central themes in the regulatory discourse.
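Why the heating is expected to be negligible can be checked with a one-line estimate: if all absorbed energy turns into heat before it can diffuse, a single pulse raises the local temperature by ΔT = μa·F/(ρ·c_p). The sketch assumes water-like density and specific heat. The example uses a surface fluence of 20 mJ/cm², the frequently cited ANSI Z136.1 skin limit for nanosecond visible pulses, together with an assumed absorption coefficient of 4 cm⁻¹.

```python
def temperature_rise_k(mu_a_per_m: float, fluence_j_per_m2: float,
                       density_kg_m3: float = 1000.0,
                       specific_heat_j_kg_k: float = 4180.0) -> float:
    """Peak local temperature rise from one pulse: ΔT = μa·F / (ρ·c_p).

    Assumes all absorbed energy becomes heat and none diffuses away during
    the pulse (thermal confinement); density and specific heat default to
    water-like values as an assumption.
    """
    return mu_a_per_m * fluence_j_per_m2 / (density_kg_m3 * specific_heat_j_kg_k)


if __name__ == "__main__":
    # Assumed: 20 mJ/cm² (200 J/m²) fluence on an absorber with μa = 4 /cm (400 /m)
    dT = temperature_rise_k(mu_a_per_m=400.0, fluence_j_per_m2=200.0)
    print(f"per-pulse temperature rise ≈ {dT * 1000:.1f} mK")  # 19.1 mK
```

That works out to roughly 0.02 K per pulse. Using the surface fluence for a buried absorber overstates the result, since light attenuates with depth; cumulative heating from the pulse repetition rate is a separate check that depends on average power.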

Clinical validation, beginning with peer-reviewed proof-of-concept studies, remains essential for gaining regulatory approval and professional trust. The role of standardized safety regulations cannot be overstated, as they provide a framework for the consistent manufacturing and deployment of these complex tools. By adhering to these protocols, developers ensure that the technology is not only effective but also consistently safe across different surgical environments. The impact of these regulations will be seen in the streamlined adoption of hybrid systems, as clear safety benchmarks allow hospital boards to make informed investment decisions regarding the next generation of “intelligent” surgical suites.

The Roadmap Toward Fully Informed Precision Medicine

The trajectory of surgical robotics is moving clearly from “blind” mechanical precision toward a model of “informed” anatomical intelligence. The integration of multi-modal data, where photoacoustic imaging is combined with preoperative CT or MRI scans, represents the next frontier in surgical planning. This convergence allows for a degree of precision medicine that was previously theoretical. Potential disruptors in this space include the use of artificial intelligence to not only map the tissue but to predict the most efficient surgical path based on the patient’s specific anatomy. This evolution will likely expand the use of photoacoustic imaging into abdominal, thoracic, and even complex neurological procedures where subsurface clarity is paramount.
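Fusing intraoperative photoacoustic data with a preoperative CT or MRI model starts with registration: finding the rotation and translation that best align the two datasets. The Kabsch (SVD) method below is a standard least-squares solution, shown as an illustration rather than the approach used in the WPI work. It assumes matched point pairs (for example, landmarks identified in both datasets) and treats tissue as rigid.

```python
from __future__ import annotations

import numpy as np


def rigid_register(source: np.ndarray, target: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Least-squares rigid transform (Kabsch / SVD) with R @ source_i + t ≈ target_i.

    source, target: (N, 3) arrays of corresponding points, N >= 3, not collinear.
    """
    src_mean, tgt_mean = source.mean(axis=0), target.mean(axis=0)
    H = (source - src_mean).T @ (target - tgt_mean)  # 3×3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))           # -1 would indicate a reflection
    D = np.diag([1.0, 1.0, d])                        # force a proper rotation
    R = Vt.T @ D @ U.T
    t = tgt_mean - R @ src_mean                       # align the centroids
    return R, t
```

When correspondences are unknown, iterative closest point (ICP) repeats this step with nearest-neighbour matches; soft-tissue deformation generally calls for non-rigid methods layered on top.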

Future growth areas will be influenced by global economic conditions and the ongoing modernization of healthcare infrastructure. As high-tech surgical suites become more accessible, the demand for precision tools that reduce the risk of human error will increase. The transition to informed precision will likely redefine the role of the surgeon from a manual operator to a director of an intelligent system. This paradigm shift ensures that surgery is no longer a battle against the unknown but a controlled execution of a highly detailed, data-rich plan.

Redefining the Safety Paradigm Through Augmented Surgical Intelligence

The integration of photoacoustic imaging into the robotic surgical landscape promises to end the era of intraoperative surprises. By providing a clear window into subsurface anatomy, this technology turns the surgical console from a simple viewing screen into a comprehensive navigational hub. The research conducted at Worcester Polytechnic Institute makes the case that the transition from traditional 2D visualization to 3D navigational maps is not only possible but essential for the advancement of patient safety. The industry is moving toward a standard where surgeons no longer have to choose between the precision of a robot and the sensory feedback of open surgery; they can finally have both.

Healthcare providers who invest in these hybrid imaging technologies stand to see a marked reduction in long-term patient morbidity and surgical complications. The outlook for the intelligent operating room is one of far greater transparency, where the instruments themselves act as the first line of defense against accidental injury. As these systems are refined, they set the stage for a future where surgical outcomes are dictated by data and clarity rather than the limitations of human sight. The successful deployment of augmented vision tools would show that the best way to ensure safety is to make the invisible visible, permanently changing the expectations for robotic precision in modern medicine.