The current pharmaceutical landscape is defined by a paradox where clinical breakthroughs are stifled by an economic model that demands billions of dollars for every successful market entry. While the global population faces emerging health threats and aging-related chronic conditions, the traditional pipeline for developing new medicines remains bogged down by a slow, iterative process of trial and error that lacks the precision required for modern molecular science. This persistent inefficiency has pushed the industry toward a critical juncture, where the integration of advanced artificial intelligence and burgeoning quantum computing technologies is no longer seen as a luxury but as a survival mechanism. By transitioning from physical experimentation to a predictive digital framework, researchers are attempting to map the vast complexity of chemical space with unprecedented accuracy. This fundamental shift promises to dismantle the barriers that have long prevented the discovery of effective treatments, signaling the start of an era where digital simulations precede and refine every laboratory action.

The Economic Strain: Bridging the Predictive Gap

The financial reality of drug development has reached a point of near-unsustainability: commonly cited estimates put the average cost of moving a single molecule from the laboratory to the pharmacy shelf at well over a billion dollars once the cost of failed candidates is included. This investment is paired with a timeline that often spans more than a decade, leaving patients and healthcare systems waiting for solutions that may never arrive due to the inherent risks involved. A central component of this crisis is the roughly ninety percent failure rate among candidates that enter human clinical trials, where promising compounds frequently fail to demonstrate efficacy or safety. Many of these setbacks occur because scientists struggle to anticipate how a synthetic compound will behave once it is introduced into the intricate biological environment of a living organism. This predictive gap highlights a fundamental limitation in current methodologies, which rely on approximations rather than a granular understanding of molecular interactions at the most basic level.

Traditional computing architectures, while powerful, are poorly suited to simulating the quantum mechanics of a drug binding to its target. The cost of an exact classical description of interacting electrons grows exponentially with the size of the system, so classical methods must rely on simplified models of the electrons and atoms within a protein, and the resulting inaccuracies often become apparent only late in the development cycle. This lack of simulation fidelity forces pharmaceutical companies to invest heavily in physical synthesis and wet-lab testing for thousands of candidates that are destined to fail. By the time a molecule reaches Phase II or Phase III trials, hundreds of millions of dollars have already been committed, making every late-stage failure a significant blow to the research budget. Addressing this gap requires a move toward technologies that can natively represent the quantum mechanical behavior of molecules, allowing for a more rigorous and reliable screening process before any physical compounds are manufactured.

Computational Evolution: From Pattern Recognition to Quantum Logic

Artificial intelligence has already reshaped several aspects of early-stage research by identifying hidden patterns within massive datasets and predicting the three-dimensional shapes of proteins with tools like AlphaFold. Generative models, in turn, allow scientists to scan vast libraries of chemical structures and propose novel candidates at a pace that would be impossible for human researchers to achieve alone. However, even sophisticated neural networks run into limits when they attempt to describe the electronic forces at play as a molecule binds to its target. AI is exceptional at making predictions based on historical data and observed patterns, but it does not solve the underlying physical equations, so its predictions become less reliable for interactions unlike anything in its training data. This limitation means that even if an AI proposes a high-potential molecule, the actual binding affinity and stability of that compound remain uncertain until they are verified through physical experiments or more advanced computational means.

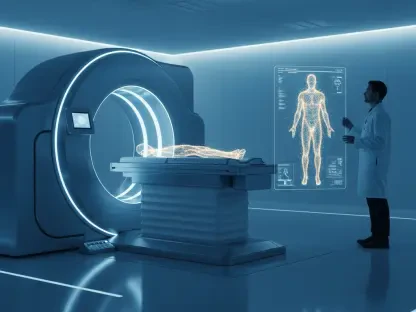

This is where quantum systems become attractive, as these machines use the principles of superposition and entanglement to represent information in a way that mirrors the quantum mechanical behavior of molecules. Unlike classical bits, quantum bits can exist in superpositions of 0 and 1, and a register of entangled qubits can encode a molecular wavefunction whose classical description would grow exponentially with the size of the system. In principle, this lets researchers compute electronic configurations and energy levels with a precision that data-driven AI models cannot match on their own, although today's hardware is still limited to small molecules by noise and qubit counts. As the hardware matures, the industry aims to replace the “black box” of molecular behavior with a physically grounded digital twin. This evolution represents a departure from the heuristic-based approach of the past, paving the way for a methodology built on first principles.
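To make the scaling argument concrete, the short Python sketch below estimates how much classical memory a full state vector of n qubits would need. It assumes one double-precision complex amplitude (16 bytes) per basis state; the function names `statevector_bytes` and `human_readable` are illustrative and do not come from any particular library.

```python
# Illustrative sketch: memory needed to store an n-qubit state vector classically.
# Assumption: each of the 2**n amplitudes is stored as one complex128 value.

BYTES_PER_AMPLITUDE = 16  # 8 bytes real part + 8 bytes imaginary part


def statevector_bytes(num_qubits: int) -> int:
    """Return the bytes needed to hold all 2**num_qubits amplitudes."""
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return BYTES_PER_AMPLITUDE * 2 ** num_qubits


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary units (KiB, MiB, ...)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    size = float(num_bytes)
    for unit in units:
        if size < 1024 or unit == units[-1]:
            return f"{size:.1f} {unit}"
        size /= 1024
    return f"{size:.1f} {units[-1]}"  # not reached; kept for completeness


if __name__ == "__main__":
    for n in (10, 20, 30, 40, 50):
        print(f"{n:>2} qubits -> {human_readable(statevector_bytes(n))}")
```

Each added qubit doubles the requirement: 30 qubits already need 16 GiB under this assumption, and 50 qubits would need 16 PiB. This is the exponential growth that pushes classical methods toward approximations.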

Practical Applications: Implementing the Hybrid Discovery Pipeline

The most promising current strategy involves a tightly integrated hybrid pipeline where AI and quantum processors work in a symbiotic relationship to maximize efficiency. In this modernized workflow, classical AI handles the initial heavy lifting by analyzing genomic data to identify disease targets and generating a wide array of potential drug structures that might interact with those targets. Once this broad field is narrowed down, quantum algorithms, such as the Variational Quantum Eigensolver (VQE), are deployed to perform deep-dive simulations on the top-tier candidates. VQE estimates the minimum energy state (the ground state) of a molecular system, which gives a physics-based estimate of how strongly a candidate is likely to bind to its target protein rather than a definitive verdict on efficacy or side effects. By filtering out low-probability molecules in a virtual environment, organizations can focus their physical laboratory resources on the most viable options, significantly reducing the overhead associated with failed synthesis.
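The variational idea behind VQE can be sketched on a toy problem. The Python example below is a classical emulation, not a run on quantum hardware: it assumes a hypothetical one-qubit Hamiltonian H = aZ + bX standing in for a molecular Hamiltonian, a single-parameter rotation as the trial state, and plain gradient descent using the parameter-shift rule. All names (`toy_hamiltonian`, `ansatz_state`, `run_vqe`) and the coefficients are illustrative assumptions.

```python
# Toy VQE sketch, emulated classically with NumPy.
# Assumptions: a one-qubit Hamiltonian H = a*Z + b*X (not a real molecule),
# an Ry(theta)|0> trial state, and gradient descent as the classical optimizer.
import numpy as np

Z = np.array([[1.0, 0.0], [0.0, -1.0]])
X = np.array([[0.0, 1.0], [1.0, 0.0]])


def toy_hamiltonian(a: float = 1.0, b: float = 0.5) -> np.ndarray:
    """Build the illustrative Hamiltonian a*Z + b*X."""
    return a * Z + b * X


def ansatz_state(theta: float) -> np.ndarray:
    """Trial state Ry(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2.0), np.sin(theta / 2.0)])


def energy(theta: float, hamiltonian: np.ndarray) -> float:
    """Expectation value <psi|H|psi>; on hardware this would be measured."""
    psi = ansatz_state(theta)
    return float(psi @ hamiltonian @ psi)


def run_vqe(hamiltonian, theta=0.1, learning_rate=0.4, steps=200):
    """Minimize the energy over theta with parameter-shift gradients."""
    for _ in range(steps):
        # Parameter-shift rule: exact gradient from two shifted energy evaluations.
        grad = 0.5 * (
            energy(theta + np.pi / 2, hamiltonian)
            - energy(theta - np.pi / 2, hamiltonian)
        )
        theta -= learning_rate * grad
    return theta, energy(theta, hamiltonian)


H = toy_hamiltonian()
theta_opt, vqe_energy = run_vqe(H)
exact_energy = float(np.linalg.eigvalsh(H)[0])  # lowest eigenvalue, for reference

if __name__ == "__main__":
    print(f"VQE estimate: {vqe_energy:.6f}")
    print(f"Exact ground-state energy: {exact_energy:.6f}")
```

In a real workflow the `energy` function would be replaced by repeated measurements on a quantum processor and the Hamiltonian would come from a quantum chemistry package, but the loop has the same shape: prepare a parameterized state, estimate its energy, and let a classical optimizer adjust the parameters.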

The combination of these technologies points to a path toward reducing discovery timelines, potentially by nearly half, which would save billions in development costs and expedite patient access to medicine. Industry leaders are focusing on securing strategic partnerships with quantum hardware providers and investing in specialized talent to bridge the gap between software engineering and molecular biology. Policymakers are also recognizing the importance of this shift by establishing national quantum centers and working to keep high-quality health data accessible to researchers while maintaining strict privacy standards. Pharmaceutical companies that embrace this digital evolution early stand to establish a significant competitive advantage in the global market, securing patent positions that could redefine the landscape of personalized medicine. Moving forward, the focus is shifting toward scaling quantum hardware to fault-tolerant systems and integrating real-world clinical data back into the simulation loop to further refine predictive accuracy.