Ivan Kairatov is a distinguished biopharma expert with a career defined by bridging the gap between cutting-edge technology and clinical application. With extensive experience in research and development, he has spent years investigating how artificial intelligence can move beyond simple automation to become a true collaborative partner for medical professionals. His deep understanding of the intricacies involved in diagnostic imaging and therapeutic innovation makes him a leading voice in the evolution of modern healthcare.

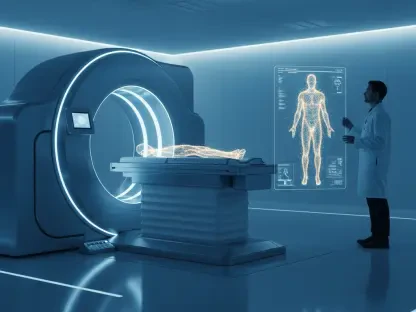

The following discussion explores a revolutionary shift in lung cancer diagnostics, moving away from rigid binary classifications toward a more fluid, interactive visual question-answering framework. This conversation delves into the technical complexities of converting clinical data into natural language, the role of explainability in building physician trust, and the profound impact these tools have on patient safety, particularly for those unable to undergo invasive procedures.

Many diagnostic AI tools focus solely on classifying lung nodules as either benign or malignant. How does shifting toward a visual question-answering framework change the daily workflow for a radiologist, and which specific morphological features, such as margin or spiculation, become easier to track?

The shift from a “black box” binary output to a visual question-answering (VQA) framework is transformative because it mirrors the natural cognitive process of a radiologist. Instead of simply receiving a high-risk or low-risk notification, a clinician can now engage in a dialogue with the system to probe specific morphological characteristics. Features such as the sharpness of a nodule’s margin or the presence of spiculation (the tiny, needle-like extensions that often signal malignancy) become much easier to track because the AI reports them explicitly as part of a descriptive finding. By focusing on these granular details, the workflow moves from passive acceptance of an AI’s score to active, evidence-based verification. This transparency lets a physician see exactly why the model is flagging a concern, which reduces the mental fatigue of second-guessing a machine’s hidden logic.
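To make the contrast with a single benign/malignant score concrete, here is a minimal sketch of question-driven access to nodule morphology. It is not the model discussed in this interview: a real VQA system answers from the CT image with a vision-language model, whereas this toy looks answers up in a structured record. The `Nodule` record, the keyword routing in `answer`, and the 1-to-5 label wording (loosely modelled on LIDC-IDRI radiologist ratings) are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical label wording for 1-5 ratings, loosely modelled on LIDC-IDRI characteristics.
MARGIN = {1: "poorly defined", 2: "near poorly defined", 3: "moderately defined",
          4: "near sharp", 5: "sharp"}
SPICULATION = {1: "no spiculation", 2: "nearly no spiculation", 3: "medium spiculation",
               4: "near marked spiculation", 5: "marked spiculation"}


@dataclass
class Nodule:
    """Structured ratings standing in for what a vision-language model would read from the CT."""
    nodule_id: str
    margin: int       # 1 = poorly defined ... 5 = sharp
    spiculation: int  # 1 = none ... 5 = marked


def answer(nodule: Nodule, question: str) -> str:
    """Route a free-text question to one attribute using naive keyword matching."""
    q = question.lower()
    if "margin" in q or "border" in q:
        return f"Nodule {nodule.nodule_id}: margin is {MARGIN[nodule.margin]} ({nodule.margin}/5)."
    if "spicul" in q:
        return f"Nodule {nodule.nodule_id}: {SPICULATION[nodule.spiculation]} ({nodule.spiculation}/5)."
    return "This sketch only answers questions about margin or spiculation."


if __name__ == "__main__":
    n = Nodule("N1", margin=2, spiculation=4)
    for question in ["How well defined is the margin?", "Is there any spiculation?"]:
        print(question, "->", answer(n, question))
```

The point of the design is that each answer names the feature and its rating, so the clinician can check that specific claim against the image rather than accepting an opaque overall score.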

Converting structured clinical annotations into natural language descriptions presents unique technical hurdles. What were the primary challenges in training a model to maintain consistency across features like sphericity and texture, and how do metrics like the CIDEr score reflect the clinical utility of these generated findings?

One of the most significant challenges in this research was ensuring that the model could translate rigid, structured annotations from datasets such as LIDC-IDRI into a narrative that reads as if it were written by a human expert. To achieve this, the team fine-tuned a vision-language model that could maintain strict consistency across diverse features such as sphericity, texture, and calcification without losing the nuance of clinical language. The success of this approach is reflected in a CIDEr score of 3.896, an exceptionally high mark for a specialized medical domain. CIDEr measures how closely generated text agrees with a set of reference descriptions, giving more weight to informative phrases than to common ones, so a high score means the model’s findings consistently use the same clinically meaningful wording as the references. This score isn’t just a technical benchmark; it indicates that the model can provide findings reliable enough for a doctor to incorporate into a patient’s medical record. When the AI can consistently describe the internal structure of a nodule with such high agreement with reference descriptions, it builds the foundation of trust necessary for clinical adoption.
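For readers unfamiliar with the metric, here is a simplified sketch of CIDEr-D, the variant implemented in the widely used COCO caption evaluation code. Each generated finding is compared with the reference findings for the same item using TF-IDF-weighted n-grams (n = 1 to 4). Candidate weights are clipped by the reference weights, a Gaussian length penalty (sigma = 6) is applied, and the result is scaled by 10. That scaling is why values above 1 are possible in this variant. This is an illustrative reimplementation under those assumptions, not the study’s evaluation code. It uses plain whitespace tokenization and will not reproduce reference implementations digit for digit.

```python
import math
from collections import Counter


def ngram_counts(sentence: str, n: int = 4) -> Counter:
    """Count n-grams of every order 1..n in a lower-cased, whitespace-tokenized sentence."""
    words = sentence.lower().split()
    counts = Counter()
    for k in range(1, n + 1):
        for i in range(len(words) - k + 1):
            counts[tuple(words[i:i + k])] += 1
    return counts


def cider_d(candidates, references, n=4, sigma=6.0):
    """Corpus-level CIDEr-D sketch.

    candidates[i] is the generated finding for item i; references[i] is a list of
    reference findings for the same item. Returns (corpus_score, per_item_scores).
    """
    # Document frequency: number of items whose reference set contains each n-gram.
    doc_freq = Counter()
    for refs in references:
        doc_freq.update(set().union(*(ngram_counts(r, n) for r in refs)))
    log_items = math.log(len(references))

    def tfidf(sentence):
        """Per-order TF-IDF vectors, their L2 norms, and the sentence length in words."""
        vecs = [{} for _ in range(n)]
        norms = [0.0] * n
        for gram, tf in ngram_counts(sentence, n).items():
            weight = tf * (log_items - math.log(max(1.0, doc_freq[gram])))
            vecs[len(gram) - 1][gram] = weight
            norms[len(gram) - 1] += weight ** 2
        return vecs, [math.sqrt(x) for x in norms], len(sentence.split())

    def similarity(hyp, ref):
        """Mean over n-gram orders of the clipped, length-penalized cosine similarity."""
        (h_vec, h_norm, h_len), (r_vec, r_norm, r_len) = hyp, ref
        penalty = math.exp(-((h_len - r_len) ** 2) / (2 * sigma ** 2))
        sims = []
        for k in range(n):
            if h_norm[k] == 0 or r_norm[k] == 0:
                sims.append(0.0)
                continue
            # Clip candidate weights by reference weights so repeated n-grams are not over-rewarded.
            dot = sum(min(w, r_vec[k].get(g, 0.0)) * r_vec[k].get(g, 0.0)
                      for g, w in h_vec[k].items())
            sims.append(dot / (h_norm[k] * r_norm[k]) * penalty)
        return sum(sims) / n  # uniform weights over n-gram orders

    per_item = []
    for cand, refs in zip(candidates, references):
        hyp = tfidf(cand)
        score = sum(similarity(hyp, tfidf(r)) for r in refs) / len(refs)
        per_item.append(10.0 * score)
    return sum(per_item) / len(per_item), per_item
```

Because the IDF term is computed over the whole evaluation corpus, the score is only meaningful across many items; with a single item every weight is zero. Formulaic, template-like reference text also tends to push CIDEr higher than free-form captions do, which is worth keeping in mind when comparing scores across domains.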

Clinical diagnosis often involves significant variability depending on a physician’s specific level of expertise. In what ways can interactive, AI-generated findings serve as a training resource for junior doctors, and how does the ability to ask targeted questions about a nodule’s internal structure improve the speed of report writing?

This interactive capability serves as a powerful pedagogical tool, acting as a digital mentor for junior doctors and residents who are still honing their diagnostic “eye.” By allowing a trainee to ask targeted questions about a lesion’s lobulation or internal density, the system reinforces the specific visual cues they should look for in a complex CT scan. This real-time feedback loop helps standardize the diagnostic process so that a less experienced physician can reach the same high-quality conclusion as a veteran radiologist. Furthermore, generating findings in natural language substantially accelerates report writing, which is often a bottleneck in hospital operations. Instead of starting from scratch, the clinician can use the AI-generated findings as a robust draft, refining the nuances rather than typing out every morphological detail, which saves precious time during a busy shift.
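As a rough illustration of the draft-first, refine-second workflow, the sketch below turns structured ratings into a single draft findings sentence that a clinician would then edit. It is a hand-written template, not the fine-tuned vision-language model described earlier. The `NoduleRatings` fields and the label wording are hypothetical and only loosely modelled on LIDC-IDRI characteristics. Margin and calcification, which LIDC-IDRI rates on multi-point scales, are simplified here to yes/no flags.

```python
from dataclasses import dataclass

# Hypothetical wording for 1-5 ratings; not an official LIDC-IDRI label set.
SPHERICITY = {1: "linear", 2: "ovoid-linear", 3: "ovoid", 4: "ovoid-round", 5: "round"}
TEXTURE = {1: "non-solid (ground-glass)", 2: "non-solid to part-solid", 3: "part-solid",
           4: "part-solid to solid", 5: "solid"}
DEGREE = {1: "no", 2: "minimal", 3: "moderate", 4: "near-marked", 5: "marked"}


@dataclass
class NoduleRatings:
    location: str
    diameter_mm: float
    sphericity: int
    texture: int
    margin_sharp: bool  # simplification of a 1-5 margin scale
    lobulation: int
    spiculation: int
    calcified: bool     # simplification of a multi-category calcification scale


def draft_finding(r: NoduleRatings) -> str:
    """Render one editable draft sentence for the clinician to review and refine."""
    margin = "well-defined" if r.margin_sharp else "ill-defined"
    calc = "with calcification" if r.calcified else "without calcification"
    return (f"{r.location.capitalize()}: {r.diameter_mm:.0f} mm {TEXTURE[r.texture]} "
            f"{SPHERICITY[r.sphericity]} nodule with {margin} margins, "
            f"{DEGREE[r.lobulation]} lobulation and {DEGREE[r.spiculation]} spiculation, {calc}.")


if __name__ == "__main__":
    ratings = NoduleRatings("right upper lobe", 14, sphericity=3, texture=5, margin_sharp=False,
                            lobulation=3, spiculation=4, calcified=False)
    print(draft_finding(ratings))
```

A template like this guarantees the same feature is always phrased the same way, which is the consistency a learned model has to reach while still sounding natural; the clinician’s edits then focus on nuance rather than on retyping every attribute.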

Invasive procedures like biopsies are often high-risk for patients with certain underlying medical conditions. How could this visual question-answering technology bridge the gap for those unable to undergo surgery, and what practical steps must be taken to ensure these AI interpretations are reliable enough for high-stakes decision-making?

For many patients, particularly the elderly or those with severe respiratory disease, the “gold standard” of a biopsy is simply too dangerous because of the risk of complications such as a collapsed lung (pneumothorax). This technology offers a non-invasive lifeline by extracting a level of detail from CT images that was previously difficult to document without surgical intervention. By making the AI’s reasoning transparent through a question-and-answer format, we give clinicians the confidence to make high-stakes management decisions based on imaging alone. To ensure this reliability, it is vital to keep validating these models against large, diverse datasets to detect and reduce bias in how the AI interprets rare nodule types. We must also implement strict oversight protocols in which the AI’s descriptive findings are treated as a “second opinion” that a certified specialist must formally sign off before any treatment plan is altered.
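One way to encode the second-opinion rule in software is to make AI findings unusable for treatment planning until a credentialed specialist has signed them. The sketch below is a hypothetical data model, not a description of any existing hospital system. The class names, the `board_certified_radiologist` flag and the choice of exceptions are all assumptions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class Clinician:
    name: str
    board_certified_radiologist: bool


@dataclass
class AIFinding:
    nodule_id: str
    text: str
    signed_by: Optional[Clinician] = None
    signed_at: Optional[datetime] = None

    def sign_off(self, reviewer: Clinician, edited_text: Optional[str] = None) -> None:
        """Record specialist sign-off, optionally replacing the AI text with the reviewer's wording."""
        if not reviewer.board_certified_radiologist:
            raise PermissionError(f"{reviewer.name} is not authorised to sign AI findings")
        if edited_text is not None:
            self.text = edited_text
        self.signed_by = reviewer
        self.signed_at = datetime.now(timezone.utc)


@dataclass
class TreatmentPlan:
    patient_id: str
    evidence: List[AIFinding] = field(default_factory=list)

    def add_evidence(self, finding: AIFinding) -> None:
        """Refuse to change the plan on the basis of an unsigned AI finding."""
        if finding.signed_by is None:
            raise ValueError(f"Finding for nodule {finding.nodule_id} has not been signed off")
        self.evidence.append(finding)
```

Putting the check inside `add_evidence` means the rule cannot be skipped by a busy user interface: an unsigned finding can be read and discussed, but it cannot alter the plan.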

Moving toward a data-driven healthcare system requires the seamless integration of advanced diagnostic tools into existing hospital workflows. What infrastructure is necessary to support a collaborative environment between AI and clinicians, and how might this approach eventually standardize diagnostic practices across different medical networks?

To truly integrate these VQA systems, hospitals need a robust digital infrastructure that can handle the high computational load of vision-language models without introducing latency into the radiologist’s viewer. This involves more than just hardware; it requires interoperable software standards that allow the AI to pull from and push to various electronic health record systems seamlessly. When these tools are deployed across different medical networks, they act as a “common denominator” for diagnosis, ensuring that a patient in a rural clinic receives the same depth of analysis as one in a major urban research hospital. Over time, this creates a standardized data-driven ecosystem where diagnostic terminology and criteria are applied consistently, regardless of where the scan was performed. This level of uniformity is the ultimate goal for improving global patient outcomes and making healthcare more equitable.
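The interview does not name a specific interoperability standard; HL7 FHIR is one widely used option. Assuming FHIR R4, the sketch below packages a VQA finding as a minimal `DiagnosticReport` resource that an EHR could receive. Only core elements are used (`status`, `code`, `subject`, `conclusion`). The patient ID, the plain-text code and the mapping of unsigned output to `preliminary` and signed output to `final` are illustrative assumptions. A production system would use coded terminology (for example LOINC) and send the resource to a FHIR server.

```python
import json


def to_fhir_diagnostic_report(patient_id: str, finding_text: str, signed: bool) -> dict:
    """Wrap a free-text nodule finding in a minimal FHIR R4 DiagnosticReport (as a plain dict)."""
    return {
        "resourceType": "DiagnosticReport",
        # Assumption: unsigned AI output stays 'preliminary' until a specialist signs it off.
        "status": "final" if signed else "preliminary",
        # Plain-text code for brevity; a real deployment would use a coded concept.
        "code": {"text": "CT chest: pulmonary nodule findings"},
        "subject": {"reference": f"Patient/{patient_id}"},
        "conclusion": finding_text,
    }


if __name__ == "__main__":
    report = to_fhir_diagnostic_report(
        "example-123", "Right upper lobe: 14 mm solid nodule with marked spiculation.", signed=False)
    print(json.dumps(report, indent=2))
```

Keeping the AI output in a standard resource, rather than a vendor-specific format, is what lets the same finding travel between a rural clinic’s system and a research hospital’s system unchanged.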

What is your forecast for the future of lung cancer diagnostics?

My forecast for lung cancer diagnostics is a move away from isolated snapshots and toward a continuous, multi-modal diagnostic journey where AI acts as a persistent co-pilot. Within the next decade, I expect we will see these visual question-answering systems integrated with genomic data and liquid biopsy results, providing a 360-degree view of a patient’s health in a single interactive dashboard. We will transition from merely detecting nodules to predicting their growth trajectories with incredible precision, allowing for “watchful waiting” periods that are guided by data rather than anxiety. Ultimately, the future lies in a truly collaborative environment where the machine handles the complex pattern recognition and the physician focuses on the human element of care, leading to a much more personalized and effective treatment paradigm.