Imagine a future where robots can master new skills simply by watching a short clip recorded on a smartphone, without the need for complex coding or high-end equipment. This isn’t science fiction but a reality being shaped by a new framework. Developed by researchers at the University of Illinois Urbana-Champaign, in collaboration with experts from Columbia University and UT Austin, this “Tool-as-Interface” approach allows robots to learn intricate tool-use tasks by observing humans in everyday videos, much like how children pick up behaviors by watching adults. This development holds the promise of revolutionizing robotics, making machines far more adaptable to the unpredictable nature of real-world environments. By breaking free from the constraints of rigid programming, this method paves the way for robots to tackle dynamic challenges, potentially transforming industries and households alike with their newfound flexibility and learning capacity.

Breaking the Mold of Traditional Robotics

The field of robotics has long grappled with a significant limitation: most machines are confined to repetitive tasks for which they are explicitly programmed, rendering them inflexible when faced with new or unexpected scenarios. Traditional approaches to teaching robots often involve labor-intensive reprogramming or the use of specialized hardware, both of which consume substantial time and resources. The “Tool-as-Interface” framework shatters these barriers by introducing a learning mechanism that relies on visual observation. By analyzing ordinary video footage captured with basic devices like smartphones, robots can acquire new skills without the need for elaborate setups. This shift not only streamlines the training process but also addresses a critical gap in robotic adaptability, enabling machines to respond to changing conditions in a way that was previously unattainable through conventional methods.

Moreover, this framework redefines the way robotic learning is approached by prioritizing accessibility. Unlike older systems that depend on expert intervention or costly motion capture technology, this method leverages widely available video content to impart complex abilities. The implications are profound, as it reduces the technical barriers that have historically limited robotic deployment in varied settings. Tasks that once required weeks of programming can now potentially be taught in a fraction of the time, allowing robots to integrate more seamlessly into environments where flexibility is key. This breakthrough marks a turning point, suggesting that the future of robotics may lie in mimicking human observational learning rather than relying solely on rigid, predefined instructions.

Unpacking the Technology Behind the Innovation

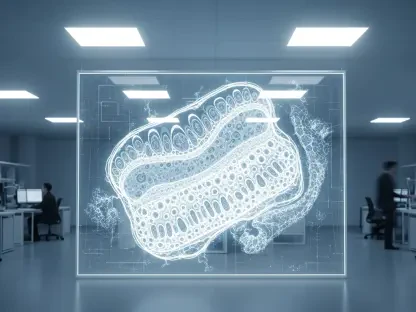

At the core of the “Tool-as-Interface” framework lies a sophisticated process that begins with capturing a scene from two distinct camera angles to construct a detailed three-dimensional (3D) model, utilizing a vision system called MASt3R. This model is then enhanced through a rendering technique known as 3D Gaussian splatting, which generates additional perspectives of the task, ensuring a comprehensive understanding of the action. What sets this approach apart is its focus on the tool itself—using a system called Grounded-SAM, the human presence is digitally removed from the footage, allowing the robot to concentrate solely on the tool’s trajectory and orientation. This method ensures that the learning process isn’t tied to human-specific movements, making it broadly applicable across different robotic forms.
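To make the human-removal step concrete, here is a minimal sketch in plain Python. It assumes a segmentation model such as Grounded-SAM has already produced a per-pixel mask marking where the human appears. The function name `remove_human`, the toy grayscale frame, and the choice to overwrite removed pixels with a constant value are illustrative assumptions, not the researchers' implementation.

```python
def remove_human(frame, human_mask, fill=0):
    """Return a copy of `frame` with every masked pixel replaced by `fill`.

    frame:      list of rows, each a list of pixel values (toy grayscale image)
    human_mask: list of rows of booleans, True where the human was detected
                (assumed to come from a segmentation model)
    """
    same_shape = len(frame) == len(human_mask) and all(
        len(row) == len(mask_row) for row, mask_row in zip(frame, human_mask)
    )
    if not same_shape:
        raise ValueError("frame and human_mask must have the same shape")
    return [
        [fill if is_human else pixel for pixel, is_human in zip(row, mask_row)]
        for row, mask_row in zip(frame, human_mask)
    ]


# Hypothetical 3x4 frame: the hand occupies three pixels near the top.
frame = [
    [10, 20, 30, 40],
    [50, 60, 70, 80],
    [90, 100, 110, 120],
]
human_mask = [
    [False, True, True, False],
    [False, True, False, False],
    [False, False, False, False],
]

cleaned = remove_human(frame, human_mask)
for row in cleaned:
    print(row)
```

Only the pixels flagged by the mask change, so whatever remains in view, including the tool, is what a downstream policy would see.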

This tool-centric perspective is a game-changer for robotic versatility. By isolating the tool’s role in a task, the framework enables skills to be transferred to robots regardless of their physical design, whether they operate with arms, grippers, or other mechanisms. Such adaptability overcomes a major hurdle in robotics, where skills learned on one machine often fail to translate to another due to differences in structure. The technology’s reliance on minimal equipment further amplifies its potential, as it eliminates the need for advanced hardware that might not be accessible to all developers. As a result, this system not only enhances learning efficiency but also broadens the scope of robotic applications, setting a new standard for how machines can be taught to interact with their surroundings.
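One way to picture why a tool-centric representation transfers across robot bodies: if a skill is stored as a sequence of tool poses, each robot only needs to know how the tool sits in its own gripper to work out where the gripper must go. The sketch below illustrates that geometry with plain Python and standard rigid-transform algebra. The helper names, the example grasp offset, and the single-pose trajectory are all hypothetical and are not taken from the researchers' code.

```python
import math


def rot_z(theta):
    """3x3 rotation about the z axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def mat_mul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def mat_vec(a, v):
    return [sum(a[i][k] * v[k] for k in range(3)) for i in range(3)]


def compose(pose_ab, pose_bc):
    """Chain two rigid transforms (R, t): returns frame c expressed in frame a."""
    r_ab, t_ab = pose_ab
    r_bc, t_bc = pose_bc
    t_ac = [x + y for x, y in zip(mat_vec(r_ab, t_bc), t_ab)]
    return mat_mul(r_ab, r_bc), t_ac


def invert(pose):
    """Inverse of a rigid transform: (R^T, -R^T t)."""
    r, t = pose
    r_inv = [list(col) for col in zip(*r)]
    return r_inv, [-x for x in mat_vec(r_inv, t)]


def gripper_targets(tool_trajectory, gripper_to_tool):
    """Convert world-frame tool poses into world-frame gripper poses.

    world_T_gripper = world_T_tool * inverse(gripper_T_tool)
    """
    tool_to_gripper = invert(gripper_to_tool)
    return [compose(world_to_tool, tool_to_gripper)
            for world_to_tool in tool_trajectory]


# Hypothetical grasp: the tool frame sits 10 cm along the gripper's x axis.
gripper_to_tool = (rot_z(0.0), [0.10, 0.0, 0.0])
# Hypothetical learned trajectory with one tool pose in the world frame.
tool_trajectory = [(rot_z(math.pi / 2), [1.0, 2.0, 0.5])]

targets = gripper_targets(tool_trajectory, gripper_to_tool)
print([round(x, 6) for x in targets[0][1]])
```

A robot with a different gripper would reuse the same `tool_trajectory` and supply only its own `gripper_to_tool` transform.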

Demonstrating Success Through Complex Tasks

To validate the effectiveness of this framework, researchers tested it on a series of demanding tasks that require precision, speed, and adaptability, qualities often beyond the reach of traditional robotic systems. These challenges included hammering a nail, scooping a meatball, flipping food in a pan, balancing a wine bottle, and kicking a soccer ball into a goal. Each task presented unique difficulties, yet the robots demonstrated remarkable competence, achieving a 71% higher average success rate than policies trained on data collected through conventional teleoperation. Data collection time also fell by 77%, underscoring the efficiency of learning through observation rather than manual programming or direct control.

Beyond the impressive statistics, these tests highlight the practical implications of the technology. The ability to handle dynamic tasks, such as adjusting to unexpected changes mid-action, shows that robots equipped with this framework can operate in real-world scenarios where conditions are rarely static. For instance, a robot that keeps scooping when additional items are tossed into the pan mid-task demonstrates a level of responsiveness that traditional systems struggle to match. This performance not only validates the framework’s design but also suggests that robots could soon take on roles requiring nuanced interaction with their environment, pushing the boundaries of what automated systems are capable of achieving in everyday settings.

Drawing Inspiration from Human Learning

A striking aspect of this research is its foundation in human behavior, specifically how children learn to use tools by observing adults without needing identical equipment or explicit guidance. In a similar vein, the “Tool-as-Interface” framework enables robots to grasp the core principles of a task by watching human actions, adapting those principles to their own capabilities. This observational learning bypasses the need for step-by-step instructions or tailored tools, mirroring the intuitive process through which humans develop skills naturally over time. It’s a powerful analogy that underscores the potential for robots to evolve beyond mechanical repetition into more organic, flexible learners.

This human-inspired approach also speaks to a deeper goal of reducing the engineering burden in robotic development. By allowing machines to learn in a manner akin to a child picking up a new skill, the framework minimizes the need for constant human oversight or intricate programming. The result is a system where robots can independently build their skill set, adapting to new tools or environments with far less intervention. Such a paradigm not only enhances autonomy but also aligns robotic learning with natural processes, offering a glimpse into a future where machines might integrate into human spaces with an unprecedented level of ease and understanding.

Envisioning a New Era of Robot Training

Looking forward, the implications of this framework extend far beyond academic research, pointing to a transformative shift in how robotic skills are developed. With the ability to learn from widely accessible video content—think smartphone recordings or online tutorials—robot training could become a democratized process, no longer confined to specialized labs or expert teams. This accessibility could empower small businesses, educators, and even individuals to teach robots for specific needs, from assisting in homes to supporting industrial operations. The potential to tap into vast online resources for learning materials further amplifies this vision, making advanced robotics a more inclusive field.

Additionally, the scalability of this approach offers exciting possibilities for widespread adoption. As video content continues to grow exponentially, robots could continuously expand their capabilities by accessing an ever-growing library of human knowledge. This could lead to a future where machines evolve alongside human innovation, learning new techniques as they emerge without requiring costly updates or redesigns. While still in its early stages, the framework lays the groundwork for a robotic landscape where adaptability and learning are as commonplace as they are in human society, reshaping how technology integrates into daily life.

Navigating the Roadblocks Ahead

Despite its promise, the framework isn’t without challenges that must be addressed to realize its full potential. Current limitations include difficulties in handling non-rigid tools, such as ropes or fabrics, where flexibility complicates trajectory analysis. Errors in 6D pose estimation, which determines a tool’s precise position and orientation in space, also cause problems, as do inaccuracies in rendering realistic views from extreme camera angles. These issues can impact the reliability of learned skills, particularly in complex or unconventional tasks. Researchers acknowledge these gaps and are committed to refining the system for greater robustness.
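A 6D pose error is commonly split into two numbers: how far the estimated position is from the true one, and how many degrees the estimated orientation is rotated away from the true one. The sketch below computes both with standard formulas in plain Python. The function names and the example values are illustrative assumptions, and nothing here reflects error figures reported by the researchers.

```python
import math


def rot_z(theta):
    """3x3 rotation about the z axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def translation_error(t_est, t_true):
    """Euclidean distance between estimated and true positions."""
    return math.dist(t_est, t_true)


def rotation_error_deg(r_est, r_true):
    """Geodesic angle between two rotation matrices, in degrees.

    Uses angle = acos((trace(R_est^T R_true) - 1) / 2), where the trace
    equals the sum of elementwise products of the two matrices.
    """
    trace = sum(r_est[i][j] * r_true[i][j] for i in range(3) for j in range(3))
    # Clamp to guard against values slightly outside [-1, 1] from rounding.
    cos_angle = max(-1.0, min(1.0, (trace - 1.0) / 2.0))
    return math.degrees(math.acos(cos_angle))


# Hypothetical estimate: 2 cm off in position, 10 degrees off about z.
t_true, t_est = [0.50, 0.20, 0.30], [0.50, 0.22, 0.30]
r_true, r_est = rot_z(0.0), rot_z(math.radians(10.0))

print(round(translation_error(t_est, t_true), 4), "m")
print(round(rotation_error_deg(r_est, r_true), 2), "degrees")
```

Reporting the two errors separately is useful because a tool such as a hammer may tolerate a small position offset far better than a tilted striking face, or the reverse for other tools.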

Tackling these obstacles will require innovative solutions, such as improving vision algorithms to better account for deformable objects or enhancing rendering techniques for more accurate perspectives. The ongoing work in this area reflects a pragmatic approach, balancing enthusiasm for the framework’s capabilities with a clear-eyed view of its current shortcomings. Addressing these technical challenges is crucial for scaling the technology to handle a wider range of real-world applications, ensuring that robots can operate with precision and reliability in diverse, unpredictable settings. The path forward, though complex, holds immense potential for further breakthroughs.

Pioneering a Path to Adaptive Machines

Reflecting on this research, it’s evident that a significant shift has occurred in the field of robotics with the introduction of observation-based learning. The recognition of the “Tool-as-Interface” framework through prestigious accolades at a major robotics workshop underscores its impact, affirming a growing consensus that mimicking human learning processes is a vital step toward overcoming longstanding limitations. Robots that once struggled with dynamic environments now show remarkable adaptability, thanks to their ability to learn from human actions captured in simple videos.

Looking ahead, the next steps involve not only refining the technical aspects but also exploring how this technology can be integrated into practical, everyday solutions. Stakeholders in robotics are encouraged to invest in scaling this approach, ensuring that challenges like non-rigid tool handling are addressed through collaborative innovation. The ultimate goal remains clear: to develop machines that can learn and adapt as fluidly as humans, transforming them into true partners in navigating the complexities of the modern world.