The High Stakes of the Alzheimer’s Waiting Game

A diagnosis of Alzheimer’s disease often feels like being handed a map with the ink still wet, leaving families to navigate a landscape where the landmarks of memory and independence could vanish tomorrow or remain for a decade. This uncertainty triggers a period of waiting and watching that can be as taxing as the symptoms themselves. While some patients maintain their cognitive faculties and social identities for years, others decline severely in a matter of months. Historically, clinicians have lacked tools to distinguish between these very different trajectories at the point of initial diagnosis.

Traditional medical models have long relied on “average” progression rates, yet these statistical generalizations fail to capture the unique, lived reality of the individual patient. Because every brain is different and every life story unique, a one-size-fits-all prognosis provides little comfort or practical utility. The quest for a precise, 12-month crystal ball has therefore become one of the most urgent frontiers in modern neurology, as understanding the immediate future allows for a shift from reactive crisis management toward proactive, personalized care.

The Crisis of Unpredictability in Dementia Care

With nearly 60 million people currently living with dementia, a figure projected to roughly double by 2050, the inability to forecast disease progression is evolving into a significant public health crisis. At this scale, the standard medical approach must become more efficient and accessible. Today, an accurate prognosis often requires expensive neuroimaging or an invasive spinal tap to measure specific proteins. These methods remain out of reach for many patients in standard clinical settings, creating a “prognostic gap” that leaves millions in limbo.

This gap has tangible consequences beyond the clinic walls, as families find themselves unable to plan for essential transitions such as financial guardianship, professional home care, or end-of-life decisions. When a family cannot know whether a patient will remain stable for the next year, they often delay difficult but necessary conversations. There is a desperate need for scalable tools that utilize the routine data already being collected in neighborhood clinics, turning the information found in standard checkups into a roadmap for the months ahead.

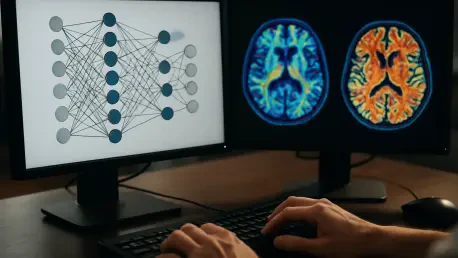

Decoding the Trajectory: How Algorithms Analyze Decline

Machine learning is currently transforming “messy” clinical data into actionable insights by identifying subtle patterns that the human eye might overlook during a standard cognitive checkup. Rather than relying on high-tech, expensive biomarkers, new digital models utilize demographic information and standard cognitive assessments like the Mini-Mental State Examination. These algorithms are designed to look past the final score of a test to see the nuanced performance of the patient, finding predictive value in the specific ways a person interacts with the world.

Researchers have found that performance on specific sub-tasks, such as recalling a particular word or planning a complex physical task, is more predictive of future decline than a patient’s total test score. By analyzing these granular details, machine learning can detect early signals of a coming “drop” in function before it shows up in the overall score. Models trained on patient data from the United Kingdom have also predicted decline in cohorts from the United States. This cross-border validation suggests that the tools can remain robust across different healthcare systems and populations, although it does not yet show that they generalize worldwide.
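To see why a summed score can hide what item-level data reveals, consider a minimal sketch in Python. The domain names, maximum scores, and weights below are illustrative assumptions loosely modeled on a 30-point cognitive test; they are not taken from any published model.

```python
# Hypothetical maximum score per domain (assumption: a 30-point test).
MAX_SCORES = {"orientation": 10, "registration": 3, "attention": 5,
              "recall": 3, "language_praxis": 9}

# Hypothetical weights: how much each lost point counts toward risk.
# Recall and praxis are weighted more heavily purely for illustration.
RISK_WEIGHTS = {"orientation": 1, "registration": 1, "attention": 1,
                "recall": 4, "language_praxis": 2}

def total_score(items):
    """Classic summary: add up every item and discard the profile."""
    return sum(items.values())

def weighted_risk(items):
    """Item-level summary: weight the points lost in each domain."""
    return sum(RISK_WEIGHTS[name] * (MAX_SCORES[name] - score)
               for name, score in items.items())

# Two invented patients with the same total but different profiles.
patient_a = {"orientation": 10, "registration": 3, "attention": 5,
             "recall": 0, "language_praxis": 6}
patient_b = {"orientation": 7, "registration": 3, "attention": 5,
             "recall": 3, "language_praxis": 6}

for name, patient in (("A", patient_a), ("B", patient_b)):
    print(name, total_score(patient), weighted_risk(patient))
```

Both invented patients score 24 out of 30, yet the item-level view separates them. A real model would learn such weights from data rather than have them set by hand.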

The accuracy metrics of these systems are promising for the field of neurology. Recent studies indicate that machine learning can explain up to 74% of the variance in cognitive decline over a 12-month period, which corresponds to a coefficient of determination (R²) of about 0.74. This level of precision goes well beyond the general rules of thumb that have guided medical practice for decades. By quantifying the likelihood of decline at this resolution, the technology gives doctors an evidence base for speaking with more confidence about a patient’s near-term outlook.
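“Variance explained” is the coefficient of determination, R²: one minus the ratio of the model’s squared prediction errors to the squared deviations of the outcomes from their mean. The sketch below computes it on a handful of made-up 12-month score changes; the numbers are invented for illustration and are not study data.

```python
def r_squared(observed, predicted):
    """Fraction of the variance in `observed` accounted for by `predicted`."""
    if len(observed) != len(predicted):
        raise ValueError("observed and predicted must have the same length")
    mean = sum(observed) / len(observed)
    ss_total = sum((y - mean) ** 2 for y in observed)
    if ss_total == 0:
        raise ValueError("observed values have no variance")
    ss_residual = sum((y - p) ** 2 for y, p in zip(observed, predicted))
    return 1 - ss_residual / ss_total

# Invented example: change in test score over 12 months for six patients.
observed_change = [-1, -4, 0, -6, -2, -3]
predicted_change = [-2, -3, -1, -5, -2, -4]

print(round(r_squared(observed_change, predicted_change), 2))  # 0.79
```

An R² of 1 would mean perfect prediction, while 0 would mean the model does no better than guessing the average change for every patient.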

From Black Box to Bedside: Expert Insights and Interpretability

The successful integration of artificial intelligence into the delicate doctor-patient relationship depends on transparency and on a clinician’s ability to explain the reasoning behind a prediction. If a model acts as a “black box,” it offers little to a medical professional who must justify care decisions to a grieving family. Tools such as “Theia” use SHapley Additive exPlanations (SHAP) to show how much each factor, such as a struggle with personal finances or a failure in word recognition, contributes to the 12-month forecast.
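The idea behind Shapley-based explanations can be shown with a toy example. The sketch below computes exact Shapley values by averaging each feature’s marginal contribution over every order in which features could be “switched on” from a baseline. The model, feature names, and values are hypothetical, and this brute-force approach is not how Theia or the SHAP library is implemented; it only illustrates the attribution principle.

```python
from itertools import permutations

def shapley_values(predict, instance, baseline):
    """Exact Shapley attribution of predict(instance) - predict(baseline)."""
    features = list(instance)
    totals = {name: 0.0 for name in features}
    orderings = list(permutations(features))
    for order in orderings:
        current = dict(baseline)           # start from the reference patient
        previous = predict(current)
        for name in order:
            current[name] = instance[name]  # reveal one feature at a time
            value = predict(current)
            totals[name] += value - previous
            previous = value
    return {name: total / len(orderings) for name, total in totals.items()}

def toy_model(x):
    """Hypothetical predicted 12-month point drop (not a real clinical model)."""
    return (1.0
            + 2.0 * x["finance_difficulty"]
            + 1.5 * x["word_recognition_errors"]
            + 0.5 * x["finance_difficulty"] * x["word_recognition_errors"]
            + 0.5 * x["years_since_diagnosis"])

patient = {"finance_difficulty": 1, "word_recognition_errors": 2,
           "years_since_diagnosis": 3}
reference = {"finance_difficulty": 0, "word_recognition_errors": 0,
             "years_since_diagnosis": 0}

contributions = shapley_values(toy_model, patient, reference)
for name, value in contributions.items():
    print(f"{name}: {value:+.2f}")
```

The contributions always add up to the gap between the patient’s forecast and the reference forecast, which is what lets a clinician say how much of a prediction is attributable to each factor. Enumerating every ordering is only feasible for a few features; practical tools rely on faster approximations.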

Experts in the field argue that moving from total scores to item-level data allows for a more nuanced understanding of a patient’s unique vulnerabilities. This shift emphasizes individual symptoms over broad labels. While medical history and comorbidities such as heart disease or diabetes matter for general health, research suggests that a patient’s current cognitive performance on specific tasks is the strongest predictor of near-term decline. This insight allows doctors to focus their attention on the most critical indicators of immediate risk.

Bridging the Gap: Strategies for Precision Forecasting

Implementing machine learning in a clinical setting requires a structured framework to ensure that raw data is translated into better patient outcomes. The first step is for clinicians to record the detailed results of cognitive tasks rather than just the final summed score, so that predictive models receive more accurate data. This meticulous documentation provides the “fuel” the algorithms need to perform well. Capturing the nuances of a patient’s performance is the foundation of a more data-driven approach to care.

Healthcare providers can then use the model’s findings to monitor “red-flag” indicators of rapid decline, such as a sudden loss of ideational praxis or increasing difficulty in preparing food. These markers serve as early warning signs and support the development of personalized care timelines. The forecasts can trigger specific interventions, such as early referrals for home care or adjustments in financial management support, before a crisis occurs. This proactive stance is intended to improve the quality of life for both patients and their caregivers.
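In software terms, turning a forecast into action is a small rule layer on top of the model’s output. The following sketch is purely illustrative: the threshold, flag names, and the mapping from flags to interventions are assumptions made for this example, not clinical guidance or part of any described tool.

```python
from dataclasses import dataclass

# Hypothetical cut-off: predicted 12-month point drop treated as "rapid".
RAPID_DECLINE_THRESHOLD = 4.0

# Hypothetical mapping from item-level red flags to follow-up actions.
FLAG_ACTIONS = {
    "ideational_praxis": "early home care referral",
    "food_preparation": "early home care referral",
    "managing_finances": "financial management support",
}

@dataclass
class Forecast:
    patient_id: str
    predicted_drop: float   # predicted points lost over 12 months
    red_flags: tuple = ()   # item-level warning signs raised by the model

def plan_interventions(forecast):
    """Return an ordered, de-duplicated list of suggested actions."""
    actions = []
    if forecast.predicted_drop >= RAPID_DECLINE_THRESHOLD:
        actions.append("care-planning conversation")
    for flag in forecast.red_flags:
        action = FLAG_ACTIONS.get(flag)
        if action and action not in actions:
            actions.append(action)
    return actions

example = Forecast("patient-001", 5.0,
                   ("food_preparation", "ideational_praxis", "managing_finances"))
print(plan_interventions(example))
```

Keeping the rules in a plain lookup table makes them easy for a care team to review and adjust without touching the predictive model itself.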

The final strategy for scaling these advances is to deploy software-based tools that run on existing electronic health record systems. This lets even under-resourced clinics offer a precision prognosis without investing in new, expensive hardware. By focusing on low-cost solutions that maximize the utility of existing data, the medical field can bridge the gap between advanced technology and the everyday needs of the patient. Taken together, these steps could transform Alzheimer’s care, replacing uncertainty with a clearer view of the path ahead.