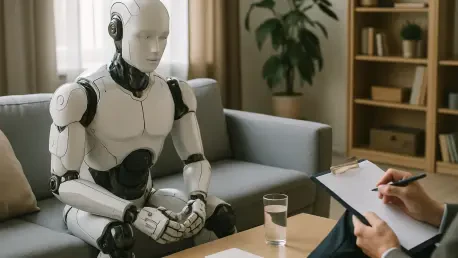

The quiet rustle of a notepad and the empathetic gaze of a clinician have long defined the sanctuary of the therapy room, yet this intimate space is now witnessing a profound technological shift as artificial intelligence enters the clinical conversation. While the sacred bond between therapist and patient was once considered the final frontier for automation—a realm where human nuance and emotional resonance reigned supreme—the rapid evolution of large language models is challenging that assumption. A multidisciplinary team at the University of Utah is now moving past the polarizing debate of “human versus machine” to introduce a sophisticated organizational structure that redefines how mental health professionals and AI can coexist. This framework serves as a critical guide for an industry grappling with an unprecedented surge in demand and a chronic shortage of qualified providers.

The introduction of high-level machine learning into clinical practice is not about replacing human interaction but about creating a sustainable infrastructure for a field under immense pressure. By categorizing the levels of autonomy and involvement, the researchers provide a roadmap that balances the promise of innovation with the necessity of patient safety. This effort recognizes that while technology can process data at superhuman speed, the delivery of psychological care requires a delicate touch that machines are only beginning to simulate. The story of AI in psychotherapy is shifting from a futuristic concept into a practical, tiered reality that aims to augment the clinician’s capability rather than erase their role entirely.

The Digital Shift in the Therapist’s Chair

The transition toward digital integration in mental health care marks a pivotal moment where linguistic nuance meets algorithmic precision. For decades, the therapeutic process remained largely insulated from the waves of automation that transformed other sectors of healthcare, primarily due to the belief that empathy was a uniquely human trait. However, the current landscape of large language models has dissolved this boundary, forcing a reconsideration of what it means to be “present” in a session. The University of Utah’s team, led by experts in engineering and psychiatry, suggests that the focus should not be on whether a machine can feel, but on how a machine can facilitate a more effective healing process between two humans.

This shift does not imply a future of “robot therapists” operating in isolation; instead, it proposes a middle ground that preserves the human touch while leveraging digital efficiency. The framework identifies the nuances of the therapeutic relationship, acknowledging that while an AI can track patterns in speech or suggest interventions, the weight of a shared human experience remains the bedrock of traditional practice. By moving toward this integrated model, health systems can begin to address the systemic failures of the past, using technology to bridge the gap between clinical theory and actual patient outcomes.

Bridging the Gap Between Mental Health Demand and Provider Capacity

The global mental health infrastructure is currently facing a breaking point, driven by a surge in demand that far outstrips the number of available clinicians in the workforce. This scalability crisis is exacerbated by the fact that traditional methods for training and supervising therapists remain manual, labor-intensive, and notoriously slow. In a typical training scenario, an expert might spend weeks reviewing a single recorded session to provide feedback, a pace that is fundamentally incompatible with the urgent needs of modern society. Without a more efficient way to oversee quality, the growth of the mental health workforce will continue to lag behind the population’s requirements.

Beyond the sheer volume of patients, health systems struggle with the consistency problem, where the quality of care can vary significantly depending on the individual provider’s adherence to evidence-based protocols. AI-driven tools offer a solution by identifying where automation can handle administrative or evaluative burdens, such as tracking patient progress or documenting session notes. By offloading these time-consuming tasks to intelligent systems, organizations can redirect human expertise toward high-acuity cases and the complex emotional support that requires a deep level of intuition. This strategic resource allocation is essential for maintaining a high standard of care in an era of scarcity.

The Continuum of Automation: A Four-Tiered Model

Borrowing logic from the automotive industry’s levels of self-driving technology, the researchers have established a spectrum that categorizes AI’s involvement in the clinical process. This four-tiered model allows practitioners to distinguish between minor assistance and full autonomy, providing a clear vocabulary for risk assessment. At the foundational level, Category A focuses on scripted systems and targeted outreach. These systems act as intelligent delivery mechanisms for content prewritten by human experts, functioning as chatbots that follow rigid decision trees to provide coping strategies. This ensures high safety and eliminates the risk of “hallucinations,” though it sacrifices conversational flexibility for clinical reliability.
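To make the idea of a rigid decision tree concrete, here is a minimal Python sketch of a scripted Category A style system. It is an illustration only, not the researchers' implementation: the `Node` class, the `SCRIPT` contents, and the helper names are all hypothetical, and the bracketed messages stand in for content that human experts would write and review.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One step in a scripted conversation; every message is prewritten."""
    message: str
    # Maps an allowed user choice to the id of the next node.
    choices: dict[str, str] = field(default_factory=dict)


# Hypothetical script with placeholder text (an assumption for illustration).
SCRIPT = {
    "start": Node(
        "[prewritten check-in question]",
        {"anxious": "breathing", "low": "activity"},
    ),
    "breathing": Node("[prewritten coping strategy: paced breathing]"),
    "activity": Node("[prewritten coping strategy: small activity]"),
    "fallback": Node("[prewritten message: option not recognized, contact your care team]"),
}


def next_node(current_id: str, user_choice: str) -> str:
    """Return the id of the next node, or 'fallback' for unrecognized input."""
    node = SCRIPT[current_id]
    return node.choices.get(user_choice.strip().lower(), "fallback")


def reply(current_id: str, user_choice: str) -> str:
    """Look up the prewritten message for the next step; nothing is generated."""
    return SCRIPT[next_node(current_id, user_choice)].message
```

Because `reply` can only return strings already present in `SCRIPT`, the system cannot produce text nobody wrote, which is the trade-off the paragraph describes: no invented content, but also no ability to respond to input outside the listed choices.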

As the involvement deepens, Category B positions AI as a clinical evaluator, or a “silent observer” that reviews transcripts after the fact to provide instant feedback on a therapist’s performance. Category C introduces the “AI Co-Pilot,” which offers real-time support during sessions by suggesting interventions or summarizing histories while the human clinician retains full control. The most advanced and controversial tier, Category D, involves direct autonomous therapeutic engagement where the AI generates its own responses in a generative, real-time conversation. While this offers the highest level of accessibility, it also introduces the greatest risk, as the machine may deviate from established evidence-based practices without immediate human oversight.
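The four tiers can be sketched as a small ordered data structure, which is one way an organization might label its tools for risk review. This is a hedged illustration under stated assumptions: the class name, the `RECIPIENT` mapping, and the helper functions are hypothetical, and the mapping reflects one reading of the descriptions above rather than an official specification of the framework.

```python
from enum import IntEnum


class Category(IntEnum):
    """Tiers ordered by depth of AI involvement, as described in the text."""
    A = 1  # scripted systems delivering content prewritten by human experts
    B = 2  # evaluator that reviews transcripts after the session
    C = 3  # co-pilot offering real-time suggestions; clinician retains control
    D = 4  # autonomous, generative conversation directly with the patient


# Who receives the AI's output in each tier (an illustrative assumption).
RECIPIENT = {
    Category.A: "patient",
    Category.B: "clinician",
    Category.C: "clinician",
    Category.D: "patient",
}


def generates_unreviewed_patient_text(category: Category) -> bool:
    """Only Category D generates its own responses to the patient in real time."""
    return category is Category.D


def deepest_involvement(tools: dict[str, Category]) -> str:
    """Return the name of the tool with the deepest AI involvement."""
    return max(tools, key=tools.get)
```

For example, given a hypothetical inventory such as `{"reminder_bot": Category.A, "session_scorer": Category.B, "chat_agent": Category.D}`, `deepest_involvement` picks out `"chat_agent"` as the tool that warrants the closest scrutiny.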

Expert Insights into High-Stakes Environments and Ethics

The research highlights how AI is already being rigorously tested in “no-fail” scenarios, such as the SafeUT initiative, which monitors Utah’s statewide crisis line. In these environments, every word can impact a life-saving outcome, and researchers are developing tools to evaluate text-based sessions to ensure counselors use the most effective techniques during critical “talk turns.” This high-stakes application demonstrates that AI can be a powerful ally in risk mitigation, provided the models are specifically trained for clinical contexts. However, experts warn against the use of general-purpose models like ChatGPT for therapy, noting that these systems are designed for engagement rather than clinical efficacy and may reflect societal biases.

The ethical considerations of this transition are immense, particularly regarding the hazard of misinformation and the redefinition of professional responsibility. As machines move from taking notes to offering direct clinical suggestions, the traditional boundaries of informed consent and liability become blurred. Researchers emphasize that the primary goal of integrating these tools must be to sharpen human skills rather than replace them. Ensuring that any AI-generated advice is vetted by a trained professional before it reaches the patient remains a cornerstone of the framework’s ethical guidelines, maintaining the clinician as the primary guardian of the therapeutic relationship and the safety of the individual in care.
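The vetting principle described above can be expressed as a simple gate in software: nothing AI-generated is released to a patient until a clinician has approved it. The sketch below is a minimal illustration, not part of the published framework; `Suggestion`, `approve`, and `release_to_patient` are hypothetical names, and a real system would also need authentication and audit logging.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Suggestion:
    """An AI-generated suggestion awaiting clinician review."""
    text: str
    approved_by: Optional[str] = None  # clinician identifier once vetted


def approve(suggestion: Suggestion, clinician_id: str) -> Suggestion:
    """Return a copy of the suggestion marked as vetted by the given clinician."""
    return Suggestion(text=suggestion.text, approved_by=clinician_id)


def release_to_patient(suggestion: Suggestion) -> str:
    """Release the text only if a clinician has approved it."""
    if suggestion.approved_by is None:
        raise PermissionError("AI-generated suggestion has not been vetted by a clinician")
    return suggestion.text
```

Making `Suggestion` immutable means an unapproved draft cannot be quietly flipped to approved in place; approval always produces a new record tied to a named reviewer.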

Implementing a Responsible Roadmap for Integration

For healthcare organizations and practitioners looking to adopt these technologies, the researchers propose a pragmatic approach that prioritizes safety over novelty. They recommend that systems start with “light” automation, focusing on Category B and Category C applications like automated note-taking and feedback tools. These initial steps allow clinicians to experience the benefits of machine assistance without introducing the volatility of autonomous interaction. By maintaining a “human-in-the-loop” philosophy, the framework keeps the clinician as the primary delivery vehicle for care, with AI-generated data serving to refine the clinician’s own empathetic interventions and professional judgment.

The implementation strategy also emphasizes using AI specifically to enhance evidence-based training, drawing on the speed of machine processing to give therapists the data they need to improve. The framework establishes a clear vocabulary that allows regulatory bodies and patients to distinguish between low-risk scripted tools and high-risk autonomous agents. Ultimately, it is intended as a foundational document for a new era of mental health care, in which the integration of technology is managed through rigorous testing and ethical oversight. The focus is on empowering the human therapist so that the future of the field remains rooted in professional expertise and patient-centered safety.