The silent patterns buried within a patient’s medical history often tell a story the patient is not yet ready to voice, creating a critical lag between the onset of abuse and professional intervention. For decades, the medical community has operated reactively, waiting for physical evidence or a direct disclosure before triggering support systems. The emergence of specialized artificial intelligence, developed through a partnership between Mass General Brigham and MIT, is now rewriting this clinical script. Rather than relying on simple keyword matching, the technology performs deep, longitudinal analysis of Electronic Medical Records (EMRs) to identify the risk of Intimate Partner Violence (IPV) well before a patient discloses it through traditional channels. By turning fragmented data into a coherent risk profile, it opens a “window of opportunity” that had previously been invisible to even the most observant clinicians.

Identifying Risk Through Machine Learning: An Introduction

This AI-driven approach represents a departure from the “wait-and-see” model of social welfare and clinical diagnostics. Rather than acting as a diagnostic aid for a specific ailment, the system functions as a preventative sentinel. It analyzes years of historical data to find the subtle, compounding indicators of abuse that are easily dismissed as isolated incidents when each visit is viewed on its own. This shift is significant because it treats behavioral and social crises with the same technical rigor as chronic diseases like diabetes or heart failure. By identifying patterns up to four years before a formal disclosure, the technology provides a lead time that can mean the difference between life and death for vulnerable individuals.

The innovation lies in its ability to bridge the gap between a patient’s silent reality and a provider’s clinical awareness. In a busy emergency department or primary care setting, physicians rarely have the luxury of reviewing a decade’s worth of notes to spot a trend in unexplained bruising or frequent “accidents.” This AI automates that synthesis, flagging high-risk cases for trauma-informed conversations. It acknowledges that IPV is not a single event but a progressive cycle, and by identifying the early stages of that cycle, it empowers healthcare systems to become active participants in domestic violence prevention rather than just treating the resulting trauma.

The Technological Framework: Multi-Model Architectures

The Tabular Model for Structured Data Analysis

At the foundation of this system is the Tabular Model, which processes the structured data points routinely recorded in a medical file. This model does not look for “smoking guns”; instead, it aggregates diagnostic codes, medication history, and the Social Deprivation Index (SDI), an area-level measure of socioeconomic disadvantage. The inclusion of the SDI is particularly clever, as it allows the AI to contextualize a patient’s health within their socioeconomic environment, acknowledging that factors like housing instability or financial stress often intersect with domestic abuse. By analyzing how certain health conditions correlate with these socioeconomic hurdles, the model flags combinations of risk factors that would look statistically insignificant individually but become highly predictive in aggregate.
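The aggregation step can be pictured with a short Python sketch that turns one patient’s structured history into a feature dictionary. The `PatientRecord` class, the `PAIN_MEDS` set, the choice of ICD-10 chapters, and the 0 to 100 SDI scale are assumptions made for illustration; the source does not list the features the Tabular Model actually uses.

```python
from dataclasses import dataclass

# Hypothetical medication list used only for illustration.
PAIN_MEDS = {"oxycodone", "hydrocodone", "tramadol"}


@dataclass
class PatientRecord:
    diagnosis_codes: list[str]  # ICD-10 codes accumulated across all visits
    medications: list[str]      # medication names accumulated across all visits
    sdi_score: float            # area-level Social Deprivation Index (assumed 0-100)


def tabular_features(record: PatientRecord) -> dict[str, float]:
    """Aggregate a longitudinal record into simple counts plus the SDI score."""
    # ICD-10 codes S00-T88 cover injury and poisoning; F codes cover mental and behavioral disorders.
    injuries = sum(1 for code in record.diagnosis_codes if code[:1] in ("S", "T"))
    mental_health = sum(1 for code in record.diagnosis_codes if code.startswith("F"))
    pain_meds = sum(1 for med in record.medications if med.lower() in PAIN_MEDS)
    return {
        "injury_dx_count": float(injuries),
        "mental_health_dx_count": float(mental_health),
        "pain_med_count": float(pain_meds),
        "sdi_score": record.sdi_score,
    }


record = PatientRecord(
    diagnosis_codes=["S60.1", "F41.1", "T14.8", "J06.9"],
    medications=["Tramadol", "amoxicillin"],
    sdi_score=82.0,
)
print(tabular_features(record))
```

Each dictionary like this would become one input row per patient for whatever classifier sits on top.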

This structured analysis is vital for establishing a baseline of objective risk. It can surface, for instance, a higher-than-expected frequency of emergency department visits or a reliance on specific pain management medications that may suggest an underlying, unaddressed trauma. Unlike human intuition, which varies with personal biases and levels of experience, the Tabular Model applies the same criteria to every record. It provides a standardized layer of screening that ensures every patient, regardless of their background or the physician they see, is evaluated against the same rigorous set of historical metrics.
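Evaluating every patient against the same historical metrics can be sketched as standardizing a rate, here emergency department visits per year, against the whole cohort. The function name, the cohort data, and the 1.5 cut-off below are hypothetical, and a population z-score is a stand-in rather than the model’s documented method.

```python
from statistics import mean, pstdev


def ed_visit_zscores(visits_per_year: dict[str, float]) -> dict[str, float]:
    """Standardize each patient's ED visit rate against the whole cohort."""
    values = list(visits_per_year.values())
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation of the cohort
    if sigma == 0:
        # Everyone has the same rate, so no patient stands out.
        return {pid: 0.0 for pid in visits_per_year}
    return {pid: (v - mu) / sigma for pid, v in visits_per_year.items()}


cohort = {"p1": 1.0, "p2": 1.0, "p3": 1.0, "p4": 5.0}
scores = ed_visit_zscores(cohort)
flagged = [pid for pid, z in scores.items() if z > 1.5]  # hypothetical cut-off
print(flagged)
```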

The Notes Model for Natural Language Processing

While structured data provides the “what,” the Notes Model provides the “why” and “how.” This component utilizes advanced natural language processing to navigate the unstructured narratives found in radiology summaries, physician observations, and nursing reports. In many IPV cases, the most telling information is hidden in the prose of a clinical note—a patient’s hesitant demeanor, an inconsistent explanation for an injury, or a partner’s refusal to leave the room. The Notes Model is designed to detect these linguistic nuances and behavioral red flags that are rarely captured in a standardized check-box or a diagnostic code.
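As a deliberately simplified illustration of how prose can become a risk signal, the sketch below scores a note with a bag-of-words logistic model. The word list, weights, and bias are invented; the source describes the Notes Model only as advanced natural language processing, and a real system would learn its parameters from labeled notes and capture context (such as negation) that single words miss.

```python
import math
import re
from collections import Counter

# Hypothetical word weights. A real notes model learns its parameters from
# labeled data and usually relies on richer representations than single words.
WEIGHTS = {"partner": 0.8, "fell": 0.5, "inconsistent": 1.2, "anxious": 0.6, "bruising": 0.9}
BIAS = -3.0


def tokenize(note: str) -> list[str]:
    """Lowercase the note and keep alphabetic tokens only."""
    return re.findall(r"[a-z]+", note.lower())


def note_risk(note: str) -> float:
    """Logistic score over word counts (a bag-of-words linear model)."""
    counts = Counter(tokenize(note))
    z = BIAS + sum(WEIGHTS.get(tok, 0.0) * n for tok, n in counts.items())
    return 1.0 / (1.0 + math.exp(-z))


flagged_note = ("Patient states she fell; explanation inconsistent with bruising "
                "pattern. Partner remained in room.")
routine_note = "Routine follow-up for seasonal allergies."
print(round(note_risk(flagged_note), 3), round(note_risk(routine_note), 3))
```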

This capability is essential because clinical narratives are often the only places where the social complexity of a patient’s life is documented. By scanning for specific descriptors or recurring themes of “unspecified trauma” or “recurrent domestic accidents,” the model can identify hidden indicators of abuse that would otherwise be lost in the vast sea of digital paperwork. It effectively gives the AI “ears,” allowing it to listen to the subtext of a medical visit and alert providers when the documented story doesn’t quite align with the physical evidence or the patient’s history.
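Spotting a theme that recurs across visits, rather than within one note, can be sketched as counting the distinct visit dates on which each phrase appears. The `THEMES` phrases and the two-visit threshold are hypothetical, and literal phrase matching is used only to keep the example short.

```python
from datetime import date

# Hypothetical theme phrases chosen for illustration.
THEMES = ("unspecified trauma", "accidental fall", "domestic accident")


def recurring_themes(notes: list[tuple[date, str]], min_visits: int = 2) -> dict[str, list[date]]:
    """Return each theme that appears in at least `min_visits` distinct visits."""
    visits_by_theme = {theme: set() for theme in THEMES}
    for visit_date, text in notes:
        lowered = text.lower()
        for theme in THEMES:
            if theme in lowered:
                visits_by_theme[theme].add(visit_date)
    return {theme: sorted(dates) for theme, dates in visits_by_theme.items()
            if len(dates) >= min_visits}


notes = [
    (date(2019, 3, 2), "Wrist sprain after accidental fall at home."),
    (date(2020, 7, 15), "Follow-up visit; reports another accidental fall."),
    (date(2021, 1, 9), "Headache following unspecified trauma to scalp."),
]
print(recurring_themes(notes))
```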

The Holistic AI in Medicine Fusion Model

The HAIM Fusion Model is where the true technological leap occurs, as it synthesizes the findings from both structured and unstructured data streams. This multi-model architecture creates a three-dimensional profile of the patient, mirroring the logic of a veteran clinician who considers both lab results and the patient’s personal story. By fusing the Tabular and Notes models, HAIM achieves a level of accuracy—approximately 88%—that neither could reach in isolation. This synergy is crucial because it reduces the noise inherent in clinical data, ensuring that a high-risk flag is based on a comprehensive view of the patient’s life rather than a single outlier.
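The source does not say how HAIM combines its two streams. One common approach, assumed here, is early fusion: concatenate the tabular features and a numeric embedding of the notes into one vector and train a single classifier on it. All values, weights, and function names below are hypothetical.

```python
import math


def fuse(tabular_vec: list[float], notes_vec: list[float]) -> list[float]:
    """Early fusion: concatenate both modalities into one feature vector."""
    return list(tabular_vec) + list(notes_vec)


def predict(fused: list[float], weights: list[float], bias: float) -> float:
    """Logistic model over the fused vector (hypothetical stand-in for the real classifier)."""
    if len(fused) != len(weights):
        raise ValueError("weight vector does not match fused feature length")
    z = bias + sum(w * x for w, x in zip(weights, fused))
    return 1.0 / (1.0 + math.exp(-z))


tabular = [2.0, 1.0, 0.82]           # e.g. injury count, mental-health count, SDI / 100
notes = [0.4, -0.1]                  # e.g. a 2-d embedding produced by a notes encoder
weights = [0.6, 0.3, 1.0, 1.5, 0.2]  # hypothetical learned weights
risk = predict(fuse(tabular, notes), weights, bias=-2.5)
print(round(risk, 3))
```

A linear model is used only for brevity. It cannot capture interactions between modalities, which is one reason nonlinear classifiers such as gradient-boosted trees are often trained on fused vectors.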

A key strength of HAIM is its ability to handle “data incompleteness,” a common issue in medical records where some files lack detailed notes while others are missing specific codes. The fusion approach allows the system to remain effective even when some information is absent, relying more heavily on whatever data is available to reach a conclusion. This robustness makes it a practical tool for real-world hospital environments where data is often messy, fragmented, or entered by multiple providers across different departments over several years.
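A widely used way to keep a fused model working when a modality is missing, assumed here rather than taken from the source, is to substitute a zero placeholder and append a binary missingness flag. The flag lets the downstream classifier learn to lean on the tabular features when no notes exist. The helper names are hypothetical.

```python
from typing import Optional


def notes_block(embedding: Optional[list[float]], dim: int) -> list[float]:
    """Notes features plus a flag telling the model whether any notes existed."""
    if embedding is None:
        # No notes on file: zero placeholder and missing-flag = 1.
        return [0.0] * dim + [1.0]
    if len(embedding) != dim:
        raise ValueError(f"expected a {dim}-d embedding, got {len(embedding)}")
    return list(embedding) + [0.0]


def fused_features(tabular_vec: list[float], notes_embedding: Optional[list[float]],
                   notes_dim: int) -> list[float]:
    """Concatenate tabular features with the (possibly missing) notes block."""
    return list(tabular_vec) + notes_block(notes_embedding, notes_dim)


with_notes = fused_features([2.0, 1.0], [0.4, -0.1], notes_dim=2)
without_notes = fused_features([2.0, 1.0], None, notes_dim=2)
print(with_notes)     # [2.0, 1.0, 0.4, -0.1, 0.0]
print(without_notes)  # [2.0, 1.0, 0.0, 0.0, 1.0]
```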

Emerging Trends in Clinical Predictive Analytics

The current trajectory of medical AI is moving away from snapshot diagnostics toward longitudinal predictive modeling. We are seeing a transition where the focus is no longer just on what is happening now, but on the historical trajectory of the patient. This “holistic diagnostics” trend integrates social determinants of health directly into the clinical risk assessment, acknowledging that a patient’s zip code or employment status can be as predictive of their health outcomes as their genetic markers. This AI is a prime example of this shift, as it bridges the gap between social services and clinical medicine.
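A “historical trajectory” can be reduced to a single longitudinal feature, for example the least-squares slope of yearly encounter counts. The sketch below is a hypothetical illustration of that idea; the source does not say which trajectory features the model uses.

```python
def trend_slope(yearly_counts: list[float]) -> float:
    """Ordinary least-squares slope of yearly counts against year index 0, 1, 2, ..."""
    n = len(yearly_counts)
    if n < 2:
        raise ValueError("need at least two years of history")
    x_mean = (n - 1) / 2
    y_mean = sum(yearly_counts) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(yearly_counts))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


print(trend_slope([1, 1, 2, 4, 6]))  # encounters rising by about 1.3 per year
print(trend_slope([3, 3, 3]))        # flat history
```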

Moreover, there is a growing emphasis on “interpretability” in AI—the ability for a system not just to give a result, but to explain why it reached that conclusion. In the context of IPV, this means the AI doesn’t just flag a patient; it points the clinician toward the specific patterns or notes that triggered the alert. This trend toward transparent AI is vital for building trust within the medical community, ensuring that technology acts as a partner to the physician rather than a “black box” that replaces human judgment.
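For a linear risk score, pointing the clinician toward what triggered an alert is straightforward: each feature contributes exactly its weight times its value. The sketch below ranks those contributions; the feature names and weights are hypothetical. For nonlinear models, attribution methods such as SHAP values generalize the same idea.

```python
def top_contributions(features: dict[str, float], weights: dict[str, float],
                      k: int = 3) -> list[tuple[str, float]]:
    """Rank features by weight * value, which is exactly each feature's
    additive contribution to the score of a linear model."""
    contributions = {name: weights.get(name, 0.0) * value for name, value in features.items()}
    return sorted(contributions.items(), key=lambda item: item[1], reverse=True)[:k]


features = {"injury_dx_count": 3.0, "ed_visits_last_year": 4.0,
            "sdi_score_pct": 0.9, "pain_med_count": 0.0}
weights = {"injury_dx_count": 0.7, "ed_visits_last_year": 0.4,
           "sdi_score_pct": 0.5, "pain_med_count": 0.6}
for name, contribution in top_contributions(features, weights, k=2):
    print(f"{name}: {contribution:+.2f}")
```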

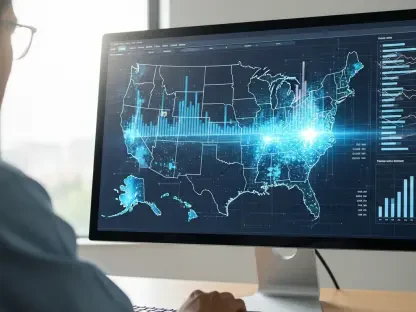

Real-World Applications and Collaborative Implementations

The most impactful application of this technology is currently within emergency departments and trauma centers, where the pressure for quick decisions often leads to missed social cues. By integrating these AI alerts directly into existing EMR systems, hospitals can automate the screening process for every patient who walks through the door. This has led to the identification of victims who were presenting with secondary symptoms—such as chronic migraines or unexplained abdominal pain—rather than acute injuries. Identifying these patients allows for a more comprehensive treatment plan that addresses the root cause of their distress rather than just the physical symptoms.
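Automated screening needs an alert threshold. One way to choose it, assumed here and not described in the source, is to pick the highest score threshold that still catches a target share of known cases (sensitivity) on a validation set, then apply it to every incoming patient. The function names and data are hypothetical.

```python
import math


def threshold_for_sensitivity(scores: list[float], labels: list[int], target: float = 0.9) -> float:
    """Highest score threshold whose sensitivity on validation data meets `target`.

    Patients are flagged when score >= threshold.
    """
    if not 0.0 < target <= 1.0:
        raise ValueError("target must be in (0, 1]")
    positives = sorted((s for s, y in zip(scores, labels) if y == 1), reverse=True)
    if not positives:
        raise ValueError("validation set has no positive cases")
    # Number of true cases that must score at or above the threshold; the small
    # epsilon guards against floating-point error in target * len(positives).
    needed = math.ceil(target * len(positives) - 1e-9)
    return positives[needed - 1]


def screen(scores_by_patient: dict[str, float], threshold: float) -> list[str]:
    """Return the IDs of patients whose score meets the alert threshold."""
    return [pid for pid, s in scores_by_patient.items() if s >= threshold]


val_scores = [0.95, 0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1, 0.05]
val_labels = [1, 1, 0, 1, 0, 1, 0, 0, 0, 0]
t = threshold_for_sensitivity(val_scores, val_labels, target=0.75)
print(t, screen({"a": 0.72, "b": 0.31, "c": 0.88}, t))
```

Favoring sensitivity accepts more false alarms in exchange for fewer missed cases; where that trade-off sits is a clinical and ethical decision as much as a technical one.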

Collaboration between technologists and social workers has been a hallmark of successful implementations. When the AI flags a patient, it does not simply issue a report; it prompts a specific clinical pathway that includes a trauma-informed interview and a consult with a domestic violence advocate. This collaborative framework ensures that the technology is not an end in itself but a catalyst for a broader, human-centered response. Notable hospital systems have reported that this proactive identification significantly reduces the time between the first “red flag” visit and the patient receiving the resources they need to find safety.

Technical Obstacles and Regulatory Challenges

Despite its success, the technology faces significant hurdles, primarily regarding data integrity and the risk of bias. Because IPV is historically underreported, the “control groups” used to train these models likely contain individuals who were experiencing abuse but never disclosed it. Labeled as unaffected during training, these patients can teach the AI to treat genuine patterns of abuse as “normal” patient behavior, producing “false negatives” when similar patients are screened later. Furthermore, there is the persistent challenge of ensuring the model remains effective across diverse demographic and geographic populations, as the presentation of IPV can vary significantly with cultural factors and socioeconomic environments.
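One standard way to reason about undisclosed cases hiding among the controls, swapped in here as an illustration rather than a method the source attributes to the researchers, is positive-unlabeled learning. Under the Elkan and Noto approach, the scores of a model trained to predict disclosure are rescaled by c, the estimated probability that a true case is disclosed. The approach assumes disclosed cases are a random sample of all true cases, an assumption IPV data may well violate. Function names and numbers are hypothetical.

```python
def estimate_label_frequency(scores_on_disclosed: list[float]) -> float:
    """Elkan-Noto estimate of c = P(disclosed | truly affected).

    `scores_on_disclosed` are the model's scores on held-out patients with a
    documented disclosure; the model itself was trained to predict disclosure.
    """
    if not scores_on_disclosed:
        raise ValueError("need at least one held-out disclosed case")
    return sum(scores_on_disclosed) / len(scores_on_disclosed)


def adjusted_probability(score: float, c: float) -> float:
    """Rescale P(disclosed | x) to an estimate of P(affected | x), capped at 1."""
    if not 0.0 < c <= 1.0:
        raise ValueError("c must be in (0, 1]")
    return min(1.0, score / c)


c = estimate_label_frequency([0.4, 0.6, 0.5])
print(c, adjusted_probability(0.3, c))
```

Dividing by a constant does not change how patients are ranked; what it corrects is the probability scale, which matters whenever a fixed probability threshold triggers an alert.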

Ethical and regulatory concerns also loom large. Handling such sensitive data requires rigorous privacy protections to ensure that the AI’s findings are never accessible to anyone outside the authorized clinical team—especially the aggressor. There is also the delicate balance of patient autonomy; a physician must navigate the ethics of using an AI to “out” a situation that the patient may not be ready to address. Ongoing development is focusing on refining these ethical guardrails and expanding datasets to include a wider variety of patient backgrounds to minimize algorithmic bias.
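Checking for algorithmic bias can start with a simple audit: compute the alert’s sensitivity separately for each demographic group and compare. The sketch below uses hypothetical labels, predictions, and group names; a real audit would also examine false-positive rates and sample sizes before drawing conclusions.

```python
from collections import defaultdict


def recall_by_group(labels: list[int], predictions: list[int], groups: list[str]) -> dict[str, float]:
    """Sensitivity (share of true cases flagged) within each group."""
    true_pos = defaultdict(int)
    positives = defaultdict(int)
    for y, flagged, group in zip(labels, predictions, groups):
        if y == 1:
            positives[group] += 1
            if flagged == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


labels = [1, 1, 1, 1, 0, 1, 1, 0]
preds = [1, 1, 0, 1, 0, 0, 0, 1]
groups = ["A", "A", "A", "B", "B", "B", "B", "A"]
recalls = recall_by_group(labels, preds, groups)
gap = max(recalls.values()) - min(recalls.values())
print(recalls, round(gap, 3))
```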

The Future of Proactive Intervention Technology

The evolution of IPV AI suggests a future where early-warning systems are standard across all branches of medicine. We may soon see real-time analysis during patient intake, where the AI processes information as it is being entered, providing immediate guidance to the triage nurse. There is also potential for integrating data from wearable devices, which could monitor physiological markers of chronic stress or trauma, providing a more continuous stream of data than occasional hospital visits. These advancements would move the medical community closer to a truly preventative model of care.
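Real-time analysis during intake can be sketched as a scorer that updates its estimate each time a triage field is entered or corrected. The field names, weights, and `IntakeScorer` class are hypothetical, and a linear model is assumed so that each update only needs the change in one field’s contribution.

```python
import math


class IntakeScorer:
    """Update a linear risk estimate each time a triage field is entered or corrected."""

    def __init__(self, weights: dict[str, float], bias: float) -> None:
        self.weights = weights
        self.logit = bias
        self.values: dict[str, float] = {}

    def update(self, field: str, value: float) -> float:
        previous = self.values.get(field, 0.0)
        # Apply only the change in this field's contribution, so each update is O(1).
        self.logit += self.weights.get(field, 0.0) * (value - previous)
        self.values[field] = value
        return 1.0 / (1.0 + math.exp(-self.logit))


scorer = IntakeScorer({"injury_today": 1.5, "prior_ed_visits_12mo": 0.3}, bias=-3.0)
p1 = scorer.update("injury_today", 1.0)
p2 = scorer.update("prior_ed_visits_12mo", 5.0)
p3 = scorer.update("prior_ed_visits_12mo", 2.0)  # nurse corrects the count
print(round(p1, 3), round(p2, 3), round(p3, 3))
```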

Long-term, this technology could expand to identify other forms of “silent” trauma, such as elder abuse or child neglect, using similar multi-model architectures. As the datasets grow and the algorithms become more sophisticated, the focus will likely shift from identification to personalized intervention strategies. The ultimate goal is to create a healthcare environment where no patient’s suffering goes unnoticed, and where the “cycle of silence” is broken by the intelligent application of data.

Assessment of the Current State of IPV AI

The development of the HAIM model and its predecessors was a watershed moment for the intersection of machine learning and social welfare. By predicting risk years in advance of traditional disclosure, the researchers demonstrated that the medical record contains a wealth of untapped, life-saving intelligence. The work showed that identifying IPV is not just about spotting an injury but about understanding a complex, longitudinal pattern of medical and social interactions. It shifted the clinical perspective, suggesting that seemingly unrelated issues like chronic pain and mental health struggles are often early markers of domestic trauma.

Future efforts will likely focus on implementing these models in smaller community clinics and rural healthcare settings where specialized resources are scarce. The success of this AI in a high-density clinical environment provides a proof of concept that supports broader deployment. Moving forward, the priority must remain on refining the ethical implementation of these tools, ensuring that they empower patients rather than simply labeling them. The transition from reactive treatment to proactive intervention is a necessary evolution in modern medicine, and this technology is one of its most promising catalysts.