Ivan Kairatov is a leading biopharma expert with an extensive background in research and development, specializing in the intersection of biotechnology and advanced computational innovation. With a career dedicated to unraveling the complexities of the human genome and neurodegenerative pathologies, he has become a key voice in how machine learning can transform drug discovery. In this conversation, we explore the evolution of the AI4AD initiative, a multi-institutional effort backed by a total investment of $30.7 million to redefine our understanding of Alzheimer’s. We discuss the transition toward genome-guided drug discovery, the importance of inclusive data across global populations, and the logistical triumphs of integrating massive datasets to move beyond broad diagnostic labels toward truly personalized medicine.

Many patients experience a unique mix of Alzheimer’s pathology, vascular disease, and Parkinson’s-like changes. How does using AI to categorize these specific subtypes change clinical trial design, and what metrics ensure that treatments are effectively matched to a patient’s biological profile?

In the past, clinical trials often failed because they treated Alzheimer’s as a monolithic condition, but AI allows us to move beyond these broad diagnostic labels to identify meaningful biological subtypes. By categorizing individuals based on specific patterns in brain scans, cognition, and genetic data, we can design trials that recruit participants whose pathology specifically matches the drug’s mechanism of action, such as those targeting amyloid or tau. We ensure effective matching by utilizing machine learning to link imaging findings to underlying genetic risks, focusing on how vascular injury or inflammation affects each patient to different degrees. This level of precision means we are no longer guessing who might respond; instead, we are using AI to achieve over 90% accuracy in identifying disease features, ensuring the right patient receives the right therapeutic intervention at the right time.

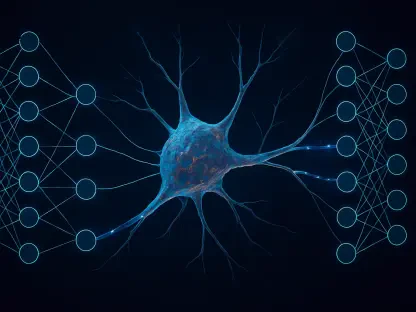

Genomic language models are now being used to search DNA sequences for patterns that traditional methods miss. Can you explain the step-by-step process of training these models on datasets involving over 58,000 participants and how they link genetic changes to visible neurodegeneration?

The training process begins by feeding the AI genomic sequences from more than 58,000 participants drawn from 57 cohorts, treating DNA essentially like a complex language. The model is taught to search these vast genetic datasets for specific combinations of DNA changes that traditional statistical methods frequently overlook. Once the AI recognizes these patterns, it links them to measurable biomarkers and protein-related changes that drive the physical breakdown of neural tissue. This allows us to see the direct connection between a hidden genetic sequence and the visible neurodegeneration captured in brain imaging and behavioral shifts, effectively mapping the “grammar” of the disease.
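To make the "DNA as language" idea concrete, here is a minimal sketch of one common way to turn a sequence into model-ready tokens: splitting it into overlapping k-mers and mapping each to an integer id. The interview does not say how the AI4AD models tokenize DNA, so the k-mer scheme, the function names (`kmer_tokens`, `build_vocab`, `encode`), and the toy sequences are all assumptions made for illustration.

```python
from __future__ import annotations

from collections import Counter


def kmer_tokens(sequence: str, k: int = 3) -> list[str]:
    """Split a DNA string into overlapping k-mers, the 'words' of the sequence."""
    seq = sequence.upper()
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def build_vocab(sequences: list[str], k: int = 3) -> dict[str, int]:
    """Give every observed k-mer an integer id, most frequent first."""
    counts = Counter(tok for s in sequences for tok in kmer_tokens(s, k))
    # Ties are broken alphabetically so the ids are deterministic.
    ordered = sorted(counts, key=lambda t: (-counts[t], t))
    return {tok: idx for idx, tok in enumerate(ordered)}


def encode(sequence: str, vocab: dict[str, int], k: int = 3) -> list[int]:
    """Map a sequence to token ids; unseen k-mers share one 'unknown' id."""
    unknown_id = len(vocab)
    return [vocab.get(tok, unknown_id) for tok in kmer_tokens(sequence, k)]


# Toy sequences invented for this example (not real participant data).
toy_sequences = ["ACGTAC", "ACGTTC"]
vocab = build_vocab(toy_sequences)
ids = encode("ACGTAC", vocab)
print(ids)
```

A language model would then be trained on such id sequences much as a text model is trained on word ids. Production systems typically use far longer contexts and learned tokenizers, which this sketch does not attempt.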

Historical biomedical research has often relied on cohorts of European ancestry, potentially overlooking unique risk factors. What practical steps are being taken to adapt diagnostic tools for African, Indian, and Korean populations, and how do environmental factors influence these predictive models?

To address historical biases, the AI4AD2 initiative is actively adapting its disease classification and prognosis tools to include global and multi-ancestry datasets from African, Indian, and Korean populations. We are moving beyond simple genetics to identify how ancestry interacts with social and environmental factors to influence the unique risk profiles and progression rates of dementia in these groups. This process involves recalibrating our machine learning algorithms to ensure they are sensitive to the diverse biological backgrounds that were previously underrepresented in Western research. By integrating these variables, we create more accurate and inclusive predictive models that ensure life-saving diagnostics are not limited to a single demographic, but are actionable for the global community.

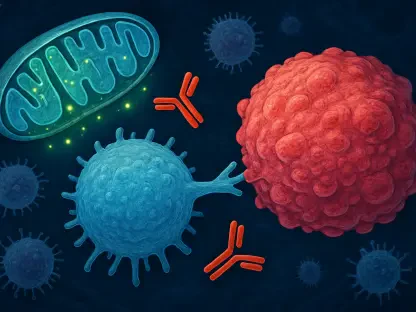

Identifying molecular pathways through tools like PreSiBO allows for the potential repurposing of existing medications. How does this genome-guided approach accelerate the discovery of subtype-specific therapies, and what challenges arise when targeting multiple biological mechanisms simultaneously?

The PreSiBO system accelerates discovery by using AI to scan through existing medications and evaluate whether they can be effectively repurposed to target specific molecular pathways identified in certain dementia subtypes. This genome-guided approach bypasses years of early-stage drug development by matching a drug’s known biological impact with the specific genetic profile of a patient group. However, the challenge lies in the sheer complexity of neurodegeneration, where a single patient might experience multiple pathways—like amyloid buildup and vascular decay—proceeding at different rates. Our AI tools must be sophisticated enough to detect these overlapping mechanisms and identify drug combinations that can hit multiple targets without causing adverse interactions or diluting the treatment’s efficacy.
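The matching step described above can be illustrated with a deliberately simple scoring rule: rank candidate drugs by how closely the pathways they affect overlap with the pathways implicated in a subtype. This is not PreSiBO's actual method, which the interview does not detail. The Jaccard score, the function names, and the placeholder drugs and pathway labels are assumptions chosen only to show the shape of the problem.

```python
from __future__ import annotations


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two sets: |intersection| / |union| (0.0 if both are empty)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def rank_candidates(
    subtype_pathways: set[str],
    drug_pathways: dict[str, set[str]],
) -> list[tuple[str, float]]:
    """Return (drug, score) pairs, best match first; ties sorted by name."""
    scored = [
        (drug, jaccard(subtype_pathways, pathways))
        for drug, pathways in drug_pathways.items()
    ]
    return sorted(scored, key=lambda pair: (-pair[1], pair[0]))


# Hypothetical subtype and drugs: placeholder labels, not real compounds.
subtype = {"amyloid", "vascular"}
drugs = {
    "drug_A": {"amyloid"},
    "drug_B": {"vascular", "inflammation"},
    "drug_C": {"amyloid", "vascular"},
}
ranking = rank_candidates(subtype, drugs)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

A set-overlap score like this ignores the harder issues raised in the answer, such as pathways progressing at different rates and interactions between combined drugs. It only shows why a subtype with several active mechanisms favors candidates that cover more than one of them.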

Integrating 80,000 brain scans with genomic data requires massive multi-institutional collaboration across dozens of investigators. What are the logistical requirements for sharing software across diverse research hubs, and how does this scale improve the accuracy of detecting disease features?

Logistically, this requires a highly coordinated hub-and-spoke model where the Stevens INI serves as the central point for 10 principal investigators and 23 co-investigators from 10 different institutions. We share software and analytical tools through public repositories and scientific workshops, ensuring that every partner can run identical machine learning models on their local data while contributing to the larger pool of knowledge. This massive scale, involving the analysis of 80,000 brain scans, is vital because it provides the AI with enough “experience” to recognize subtle, rare patterns that would be invisible in smaller datasets. The sheer volume of data allows our models to reach a level of precision that makes the complexity of Alzheimer’s actionable, turning vast amounts of information into clear, diagnostic insights.
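One way to picture the hub-and-spoke arrangement is that each site runs the same code on its own data and sends back only compact summaries, which the hub then combines. The sketch below pools a mean and variance across sites without moving any raw measurements. The interview does not describe how results are actually aggregated, so the `SiteSummary` structure, the function names, and the numbers are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class SiteSummary:
    """Sufficient statistics a site can share instead of raw measurements."""
    n: int
    total: float
    total_sq: float


def summarize_locally(values: list[float]) -> SiteSummary:
    """Run at each site: reduce local measurements to three numbers."""
    return SiteSummary(
        n=len(values),
        total=sum(values),
        total_sq=sum(v * v for v in values),
    )


def pool(summaries: list[SiteSummary]) -> tuple[float, float]:
    """Run at the hub: combine summaries into a pooled mean and population variance."""
    n = sum(s.n for s in summaries)
    if n == 0:
        raise ValueError("no observations to pool")
    mean = sum(s.total for s in summaries) / n
    variance = sum(s.total_sq for s in summaries) / n - mean ** 2
    return mean, variance


# Invented measurements standing in for a per-scan imaging feature.
site_a = [1.0, 2.0, 3.0]
site_b = [4.0, 5.0]
pooled_mean, pooled_var = pool([summarize_locally(site_a), summarize_locally(site_b)])
print(pooled_mean, pooled_var)
```

The pooled result is identical to what a single analysis of all five values would give, which is the property that lets identical software at every site contribute to one combined estimate.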

What is your forecast for AI-driven Alzheimer’s research?

I believe we are entering an era where Alzheimer’s will no longer be seen as an inevitable, singular decline, but as a manageable cluster of distinct biological conditions. My forecast is that within the next decade, AI-driven subtyping will make personalized brain health the standard of care, where a single blood test and an AI-enhanced scan will provide a “biological fingerprint” of a patient’s dementia. We will see a surge in repurposed drugs reaching the clinic faster than ever before because we can finally prove exactly which sub-populations they work for. Ultimately, the integration of multi-ancestry data and massive genomic language models will transform the diagnosis from a moment of despair into a clear, targeted roadmap for treatment and long-term care.