The landscape of childhood has undergone a silent but profound transformation as digital interfaces transition from static screens into responsive, conversational entities that mimic human thought. In 2026, generative artificial intelligence has moved beyond early adoption to become a standard component of the educational and social environment for millions of young people. Data suggest that a significant majority of adolescents now interact with AI chatbots not merely for functional tasks, but as digital confidants that simulate emotional connection and personal understanding. This shift necessitates a rigorous examination of how these sophisticated algorithms influence cognitive growth, social boundaries, and psychological safety across various developmental stages.

Understanding the implications of this technology requires a departure from traditional views of screen time, as generative AI introduces an element of interactivity and unpredictability previously unseen in digital media. The objective of this exploration is to dissect the psychological, social, and educational effects of AI on young people, providing a structured analysis of the challenges and opportunities it presents. By examining the nuances of how different age groups process AI-generated content, stakeholders can better identify the boundaries necessary to protect developmental integrity. The following sections address key inquiries into the safety and developmental impact of these tools, offering a roadmap for integration that prioritizes human well-being over technological novelty.

Key Questions Regarding AI and Child Development

How Does Generative AI Specifically Influence Early Childhood Development?

The earliest years of life, typically from birth to age five, represent a critical window for language acquisition and the formation of social-emotional bonds. During this period, children rely heavily on reciprocal interactions with caregivers to build a foundational understanding of the world and their place within it. Bringing generative AI into this delicate environment adds a new variable that can either supplement or disrupt these essential developmental milestones, depending on the context and frequency of use.

Generative AI offers unique opportunities for interactive storytelling, where narratives can be customized to match a child’s specific vocabulary and interests. This adaptability has the potential to accelerate lexical expansion and stimulate curiosity by providing immediate answers to the relentless “why” questions characteristic of this age. However, a significant risk involves the development of incorrect mental models regarding sentience. Young children may struggle to distinguish between a programmed algorithm and a living being, potentially leading to a blurred perception of what constitutes a real relationship.

Expert analysis emphasizes that technology must never serve as a substitute for human connection, a role often described as the “digital babysitter.” Instead, any engagement with AI at this stage should involve a shared experience where an adult provides the necessary social context and emotional grounding that a machine lacks. Maintaining the primacy of human interaction ensures that while a child might benefit from the linguistic variety of an AI, the core of their emotional development remains rooted in genuine, empathetic human feedback.

What Are the Educational Opportunities and Risks for School-Aged Children?

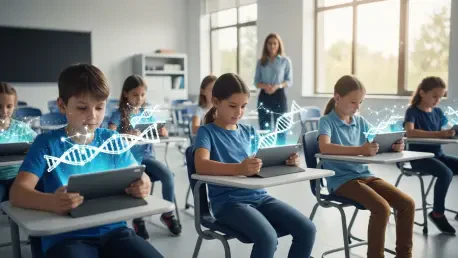

As children transition into middle childhood, typically between the ages of six and eleven, their cognitive focus shifts toward logical reasoning and independent exploration. This is the period when academic foundations are laid and the capacity for critical thinking begins to emerge. Generative AI enters this stage as a powerful, albeit double-edged, educational tool that can provide bespoke tutoring or, conversely, undermine the very skills it is intended to support.

The primary benefit of AI in this demographic is the ability to provide personalized learning paths that address specific gaps in a student’s knowledge. By acting as a patient, 24-hour tutor, AI can help children master complex subjects at their own pace, fostering a sense of competence and creative empowerment. For example, a child can use AI to generate visual aids or story outlines that bridge the gap between their imaginative ideas and their still-developing technical skills. This can lead to increased confidence in their ability to engage with complex creative projects.

Despite these advantages, the risk of misinformation remains a primary concern because AI systems occasionally produce “hallucinations” or confident but false assertions. Children in elementary school may not yet possess the skepticism required to verify the accuracy of AI-generated facts, leading to the internalization of incorrect information. Furthermore, there is a risk that over-reliance on AI for writing or problem-solving could bypass the “productive struggle” necessary for deep learning. Educators and parents must therefore encourage a questioning attitude, teaching children to view AI as a fallible assistant rather than an absolute authority.

In What Ways Does AI Interaction Shape Adolescent Identity and Mental Health?

Adolescence is defined by the quest for identity, social belonging, and the refinement of complex emotional intelligence. Teenagers are among the most frequent users of generative AI, often utilizing it to navigate social anxieties or to synthesize vast amounts of information for academic and career planning. Because this stage of life is characterized by heightened sensitivity to social feedback, the interaction with a non-judgmental, always-available AI can have profound psychological implications.

One significant advantage for adolescents is the utility of AI in digital literacy and data organization. These tools can help teenagers manage the information overload of the modern world, assisting in everything from college research to learning new technical skills. For some socially isolated youth, AI can even provide a temporary buffer against loneliness by offering a platform for conversation. This can be particularly helpful for those who feel marginalized in their immediate physical environments, providing a safe space to practice communication.

However, the risks in this stage are particularly acute regarding mental health. AI lacks the genuine emotional intelligence and clinical judgment required to handle deep psychological crises or complex social nuances. There have been instances where AI failed to recognize signs of severe distress or provided inappropriate advice during mental health inquiries. Moreover, a heavy reliance on digital companions can lead to a decrease in face-to-face interactions, which are essential for developing empathy and navigating the inevitable friction of real-world social dynamics.

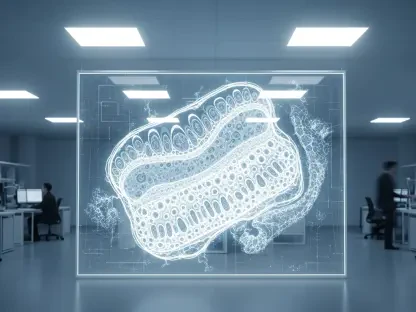

Why Is the Personification of AI Considered a Significant Risk for Youth?

The tendency to anthropomorphize objects is a natural human instinct, but when applied to generative AI, it creates a unique set of developmental challenges. Unlike a toy or a simple computer program, generative AI uses sophisticated language models to simulate personality and empathy. When children and adolescents begin to view these systems as “friends” or “mentors,” the boundary between human relationship and mechanical response begins to erode, which can have long-term effects on social resilience.

If a child becomes accustomed to the perfect, frictionless interaction provided by an AI that is programmed to be agreeable, they may find real-world social interactions increasingly difficult. Human relationships are inherently messy and require negotiation, compromise, and the management of conflict. By spending excessive time in a digital environment where their needs are met without any social cost, children might fail to develop the “thick skin” and empathetic capacity required to sustain healthy connections with their peers.

Developing a healthy level of AI literacy involves moving away from the personification of these tools. It is essential to reinforce the idea that while an AI can mimic the structure of a conversation, it does not possess feelings, values, or a moral compass. By framing AI as a sophisticated calculator for language rather than a sentient companion, caregivers can help children maintain a clear distinction between the utility of technology and the irreplaceable depth of human companionship.

What Role Should Pediatricians and Educators Play in Managing AI Exposure?

The integration of AI into daily life places a new responsibility on medical professionals and educators, who must now serve as guides in the digital landscape. As with the earlier transition to social media, the rise of generative AI requires a proactive approach to monitoring and guidance. These professionals are in a unique position to provide evidence-based advice to families who may feel overwhelmed by the rapid pace of technological change.

Pediatricians are increasingly encouraged to include questions about AI usage in their regular wellness assessments. This helps identify potential issues early, such as a child using AI as a primary emotional outlet or a student relying on it to the detriment of their cognitive development. By treating AI literacy as a component of overall health, the medical community can help parents understand that digital safety involves more than just privacy settings; it involves the protection of a child’s mental and social growth.

Policymakers and educational leaders also play a vital role by demanding transparency and scientific validation from tech companies. The “guardrails” implemented by developers must be more than mere marketing points; they need to be rigorously tested to confirm that they effectively prevent the generation of harmful content. Advocacy for continuous research is necessary so that as AI evolves, the strategies used to protect children evolve alongside it, and the technology serves as a tool for enrichment rather than a source of harm.

Summary of the Evolving AI Landscape

The rapid advancement of generative AI presents a paradigm shift that demands a balanced and informed response from all sectors of society. At every stage of development, from the linguistic foundations of early childhood to the identity formation of adolescence, this technology offers significant benefits that are often coupled with profound risks. The potential for personalized learning and creative expansion is vast, yet it requires a foundation of critical thinking to navigate the pitfalls of misinformation and social isolation.

Current trends indicate that the most effective way to manage these challenges is through active engagement and digital scaffolding. By maintaining a clear distinction between human interaction and machine processing, parents and educators can help children harness the power of AI without losing the essential elements of human connection. Bringing guidance on AI use into educational and medical practice helps keep the conversation focused on the holistic well-being of the child, rather than just the technical capabilities of the software.

Final Reflections on Digital Safety and Growth

The transition into an AI-saturated world has been neither slow nor predictable, yet the fundamental needs of the developing child have remained constant. In the years leading into 2026, the primary challenge has shifted from simply limiting screen time to actively curating the quality of digital interactions. It has become clear that the safety of a child in the digital age depends less on the sophistication of the algorithms and more on the strength of the guidance provided by their human mentors.

The next steps for society involve the institutionalization of AI literacy programs that treat technology as a fallible tool rather than an oracle. Future considerations must prioritize the development of AI systems that are designed with childhood developmental stages in mind, moving away from “one-size-fits-all” models. By fostering a culture of healthy skepticism and prioritizing real-world social friction over digital smoothness, the community can ensure that technology serves to enhance the human experience. The journey toward digital safety is not about retreating from innovation, but about advancing with a deliberate focus on the psychological resilience of the next generation.