The rapid evolution of molecular diagnostics has placed the promise of truly personalized healthcare within our reach, yet a significant disconnect remains between laboratory breakthroughs and the actual data available to bedside clinicians. Precision medicine relies on the seamless integration of an individual’s genetic profile into their long-term treatment plan, but recent findings by Pankhuri Gupta, a research genetic counselor at the University of Washington, suggest that the digital infrastructure supporting this vision is fundamentally fractured. When genetic data is recorded, it often remains static within an electronic health record, even as the scientific understanding of that data shifts globally. This creates a state of “imprecision medicine” where clinical decisions are guided by obsolete interpretations, potentially leading to missed diagnoses or unnecessary medical interventions. As the healthcare industry moves deeper into 2026, the challenge is no longer just about sequencing DNA, but about ensuring that the resulting information remains accurate and actionable over the course of a patient’s entire life.

The Ambiguity of Variants: Navigating Clinical Uncertainty

The most pervasive hurdle in modern genomic reporting is the prevalence of Variants of Uncertain Significance, commonly referred to as VUS. A VUS represents a genetic alteration where the current body of scientific evidence is insufficient to determine whether the change is benign or disease-causing. For a clinician, receiving a VUS result is often more complicated than receiving a definitive positive or negative finding, as it provides no clear direction for medical management. Patients may find themselves in a state of clinical limbo, knowing they possess a genetic “typo” but having no way to know if that typo increases their risk for cancer, heart disease, or rare neurological conditions. This ambiguity effectively stalls the diagnostic process and can lead to significant psychological distress for families who were hoping that genetic testing would finally provide the answers they have been seeking for years.

Furthermore, the management of these uncertain variants is complicated by the fact that scientific knowledge is not a fixed destination but a constantly moving target. A variant that is labeled as uncertain today might be clarified tomorrow as researchers aggregate more data from diverse global populations. However, the mechanism for translating these global updates into individual patient care is largely manual and highly inconsistent. Because there is no automated system to alert a doctor when a VUS in a patient’s file has been reclassified by the broader scientific community, many individuals continue to live with an “uncertain” label long after the mystery has been solved in the research world. This gap underscores a systemic failure to treat genomic data as a living document, highlighting a need for a more dynamic relationship between the diagnostic laboratory, the clinical repository, and the healthcare provider.

Systemic Lags: The Discrepancy in Medical Documentation

Recent investigations into large-scale genomic databases, such as the Brotman Baty Institute Clinical Variant Database, have exposed a concerning “systemic lag” in how genetic information is updated within Electronic Health Records. Research comparing patient files against centralized authoritative archives like the National Institutes of Health’s ClinVar found that at least 1.6% of variant classifications in clinical use were outdated. While this percentage may appear statistically minor, its real-world impact is massive when extrapolated across the millions of individuals currently undergoing genomic screening. For a patient whose variant has been reclassified from “uncertain” to “pathogenic,” a delay in reporting can mean the difference between early detection of a life-threatening condition and a late-stage diagnosis. Conversely, failing to downgrade a variant to “benign” can lead to invasive, expensive, and entirely unnecessary surgeries that do nothing to improve the patient’s health outcomes.
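To make the scale of that extrapolation concrete, the short sketch below applies the reported 1.6% figure to a few cohort sizes. The cohort sizes are hypothetical and chosen only for illustration; they do not come from the study. The rate also applies to variant classifications rather than to patients, so the outputs should be read as rough orders of magnitude.

```python
# The "at least 1.6%" share of outdated classifications reported above.
OUTDATED_RATE = 0.016


def estimate_outdated(total_classifications: int, rate: float = OUTDATED_RATE) -> int:
    """Return the expected number of outdated classifications, rounded to a whole count."""
    return round(total_classifications * rate)


# Hypothetical numbers of stored variant classifications (illustrative only).
for total in (10_000, 1_000_000, 5_000_000):
    print(f"{total:>9,} classifications -> about {estimate_outdated(total):,} outdated")
```

Because the study describes 1.6% as a lower bound, these counts are best treated as a floor rather than a forecast.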

The persistence of these reporting gaps is largely due to the way current healthcare software is architected, as most systems treat a genetic test result as a PDF-style snapshot rather than a data-rich, queryable field. Once a report is uploaded to a patient’s chart, it rarely undergoes a proactive review unless a clinician specifically orders a re-interpretation, which is a time-consuming and often uncompensated process. This creates a scenario where the laboratory may have the updated information, and the scientific community may have reached a consensus, but the primary care physician or specialist remains entirely unaware of the change. To resolve this, the medical community must transition toward a model of interoperability where EHRs are digitally linked to live genomic databases, allowing for automated flags and notifications whenever a variant classification changes, thereby ensuring that medical records reflect the most current state of science.
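The kind of automated flag described above can be sketched in a few lines once results are stored as discrete fields rather than PDFs. The example below is a minimal illustration under stated assumptions: the `StoredResult` schema, the variant identifiers, and the `reference_snapshot` dictionary are all hypothetical stand-ins, and no real EHR or ClinVar interface is being called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StoredResult:
    """One discrete genetic result as it might sit in a chart (hypothetical schema)."""
    patient_id: str
    variant_id: str
    classification: str


def find_outdated(results, reference):
    """Return (result, current_classification) pairs where the chart and reference disagree."""
    flagged = []
    for result in results:
        current = reference.get(result.variant_id)
        # Skip variants the reference does not cover; absence is not a reclassification.
        if current is not None and current != result.classification:
            flagged.append((result, current))
    return flagged


# Hypothetical chart entries and reference snapshot, for illustration only.
chart = [
    StoredResult("patient-A", "VAR-0001", "Uncertain significance"),
    StoredResult("patient-B", "VAR-0002", "Likely benign"),
    StoredResult("patient-C", "VAR-0003", "Uncertain significance"),
]
reference_snapshot = {
    "VAR-0001": "Pathogenic",
    "VAR-0002": "Likely benign",
}

for result, current in find_outdated(chart, reference_snapshot):
    print(
        f"{result.patient_id}: {result.variant_id} is now '{current}' "
        f"(chart says '{result.classification}')"
    )
```

The comparison is deliberately simple. A production system would also need to reconcile variant nomenclature and record when each classification was last reviewed, neither of which is attempted here.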

Technological Solutions: Long-Read Sequencing and Functional Data

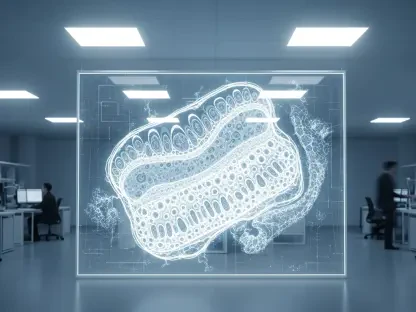

To overcome the inherent limitations of traditional genomic analysis, researchers are increasingly adopting long-read sequencing technologies that offer a far more comprehensive view of the human genome. Traditional short-read sequencing, while cost-effective and widely available, often fails to accurately map complex, repetitive, or structurally diverse regions of DNA. These “blind spots” are frequently where pathogenic variants hide, contributing to the high volume of VUS results. Long-read sequencing bypasses these hurdles by capturing much longer stretches of DNA in a single pass, allowing scientists to see the full context of a genetic alteration. This technological leap is particularly vital for diagnosing rare pediatric disorders, where families often endure years-long “diagnostic odysseys” because standard testing methods simply lack the resolution to identify the underlying cause of a child’s symptoms.

In addition to longer reads, the integration of functional datasets is changing how variants are interpreted and eventually reclassified. Instead of relying solely on statistical correlations or predictive algorithms to infer what a variant does, functional studies use laboratory experiments that test the biological impact of a specific mutation on actual cell function. By observing how a variant affects protein production or cellular signaling in a controlled environment, researchers can provide direct biological evidence of whether a mutation is harmful or harmless. This move toward evidence-based variant interpretation reduces the reliance on “uncertain” labels and provides clinicians with the concrete data needed to make high-stakes medical decisions. As these functional experiments become more standardized and integrated into diagnostic pipelines, the speed and accuracy of variant reclassification should increase.

Redefining Infrastructure: The Shift to Dynamic Genomic Repositories

The architectural design of the modern healthcare ecosystem remains one of the most significant barriers to the effective implementation of precision medicine. Most hospital systems operate using siloed databases that do not communicate effectively with external research archives, creating a one-way flow of information that ends once a test result is delivered. For genomic medicine to be truly successful, the infrastructure must evolve into a dynamic repository that allows for two-way communication between the clinical environment and the research laboratory. This requires a fundamental shift in how health information technology is developed, prioritizing the creation of standardized data formats that can be easily updated as new scientific evidence emerges. Without this digital evolution, the “last mile” of precision medicine will remain a bottleneck that prevents life-saving data from reaching the patients who need it.

Beyond the technical requirements, addressing genomic reporting gaps also necessitates the establishment of clear professional protocols regarding the long-term management of genetic data. Currently, there is significant ambiguity regarding who is responsible for re-contacting a patient when a variant is reclassified years after the initial test was performed. Should the burden fall on the diagnostic laboratory, the ordering physician, or the genetic counselor? This lack of clarity often results in no one taking action, leaving the patient in the dark. Developing institutional policies that define these responsibilities, coupled with legal and ethical frameworks to support them, is essential for maintaining patient safety. Only by aligning technological capabilities with clear procedural accountability can the healthcare industry ensure that every patient receives the full benefit of their genomic information over their entire lifespan.

Professional Evolution: Training for a Data-Driven Future

The successful integration of these advanced genomic workflows depends heavily on the evolution of the genetic counseling profession and the training of the next generation of healthcare providers. As genetic testing becomes more complex, the role of the genetic counselor is expanding from a purely communicative role to one that requires deep proficiency in computational genomics and bioinformatics. Practitioners must now be able to navigate sophisticated databases, interpret complex functional data, and explain the nuances of variant reclassification to both patients and other medical professionals. Educational programs are currently being overhauled to include more robust training in these technical areas, ensuring that the workforce is prepared to handle the massive influx of data generated by 2026-era sequencing technologies and the subsequent analytical challenges.

This professional shift is not just about mastering new software; it is about fostering a culture of continuous learning and data vigilance within the medical community. By leading workshops and developing educational modules on the application of functional evidence, experts in the field are empowering clinicians to move beyond the “snapshot” model of genetics. This proactive approach ensures that the medical workforce remains agile and capable of translating rapid-fire scientific discoveries into tangible improvements in patient care. As these highly trained professionals enter the clinic, they act as the essential bridge between the laboratory and the bedside, ensuring that the promise of precision medicine is not lost in a sea of static data. The focus is shifting toward a fluid model of care where the patient’s genetic story is constantly being updated, refined, and used to optimize their health.

Actionable Steps: Modernizing the Clinical Workflow

The transition toward a fully realized model of precision medicine requires immediate and concrete actions from healthcare administrators, software developers, and clinical practitioners. To begin, health systems should prioritize the implementation of “live” Electronic Health Record interfaces that can subscribe to updates from centralized variant databases like ClinVar. By moving away from static PDF reports and toward discrete, queryable genetic data fields, hospitals can enable automated alerts that flag outdated variant classifications for clinician review. This technological shift must be supported by the development of clear institutional policies that define the “duty to re-contact,” ensuring that there is a documented process for reaching out to patients when new information changes their clinical risk profile.
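A “duty to re-contact” policy could be encoded as a simple triage rule that sits behind such alerts. The sketch below is one hypothetical policy, not an established standard: it uses the familiar five-tier classification labels and treats any change that moves a variant into or out of the pathogenic or likely pathogenic tiers as urgent, with every other change queued for routine review. The function name and the priority labels are assumptions made for illustration.

```python
# Five-tier classification labels, ordered from least to most concerning.
TIERS = (
    "Benign",
    "Likely benign",
    "Uncertain significance",
    "Likely pathogenic",
    "Pathogenic",
)

# Tiers assumed (for this sketch) to change clinical management.
ACTIONABLE = {"Likely pathogenic", "Pathogenic"}


def recontact_priority(old: str, new: str) -> str:
    """Return 'none', 'routine', or 'urgent' for a reclassification (hypothetical policy)."""
    for label in (old, new):
        if label not in TIERS:
            raise ValueError(f"Unknown classification: {label!r}")
    if old == new:
        return "none"
    # Crossing the actionable boundary in either direction changes the patient's risk profile.
    if (old in ACTIONABLE) != (new in ACTIONABLE):
        return "urgent"
    return "routine"


print(recontact_priority("Uncertain significance", "Pathogenic"))
print(recontact_priority("Pathogenic", "Benign"))
print(recontact_priority("Uncertain significance", "Likely benign"))
```

Treating downgrades as urgent is a deliberate choice in this sketch, since an outdated pathogenic label can drive unnecessary interventions. An institution could reasonably draw the boundary elsewhere.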

Furthermore, medical institutions must invest in the ongoing education of their staff to ensure that every provider involved in genetic care understands how to use functional data and long-read sequencing results. This includes allocating time and resources for genetic counselors to perform periodic reviews of existing patient files, effectively auditing the “genomic health” of their patient population. For patients, the takeaway is the importance of maintaining a long-term relationship with a genetics team and proactively inquiring about re-interpretations of past test results. As the industry advances, the goal is to create a healthcare environment where genetic data is never considered “finished,” but is instead treated as a vital, evolving asset that grows more valuable and more precise over time. These steps will help ensure that the current gaps in genomic reporting are addressed and that the infrastructure is finally aligned with the science.