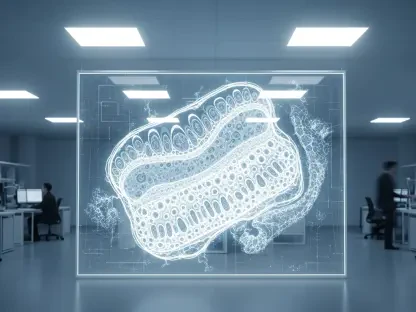

The human gut is no longer viewed as a mere processing plant for nutrients but as a sophisticated biochemical engine that influences the longevity and resilience of the human brain. Recent medical research suggests that the communication network between the digestive tract and the central nervous system, often called the gut-brain axis, may hold the key to early detection of neurodegeneration. As the global population ages and the prevalence of dementia rises, the demand for non-invasive, accessible, and accurate diagnostic tools has never been more urgent. This review examines the shift from traditional, reactive neurology to a proactive, gut-centric approach that uses the chemical signatures of the gut microbiome to predict cognitive decline years before the first signs of memory loss appear.

The Evolution of Gut-Brain Axis Diagnostic Technology

The emergence of gut-brain axis diagnostics represents a radical departure from the localized focus of 20th-century medicine. Historically, neurology and gastroenterology operated as silos, with the brain and the gut treated as independent systems. However, the discovery of the enteric nervous system—a massive network of neurons lining the digestive tract—revealed a bidirectional highway of information. Early research into this axis focused primarily on how stress or mood influenced digestion. It was not until the mid-2020s that the focus shifted toward how the gut could influence the brain, specifically in the context of neurodegeneration. This evolution was driven by the realization that pathological changes in the brain are often preceded by systemic inflammation and metabolic shifts that originate in the digestive system.

Improvements in high-resolution analytical chemistry and computational biology have turned this theoretical framework into a precision diagnostic discipline. As of 2026, it is possible to map an individual’s “metabolomic fingerprint.” The shift was necessary because traditional dementia biomarkers, such as amyloid-beta and tau proteins, are typically detected only after significant brain damage has occurred. By examining the gut-brain axis, scientists have found a way to identify the “silent” phase of the disease, when the body still functions normally but the chemical precursors of decline are already circulating in the bloodstream.

Core Components and Performance Metrics

Metabolomic Profiling of Gut-Derived Chemicals

The primary engine of this diagnostic technology is the high-throughput metabolomic profiling of chemicals produced by gut microbiota. Unlike genetic sequencing, which identifies which bacteria are present, metabolomics focuses on what those bacteria are actually doing. These microorganisms break down dietary components and produce molecules known as metabolites, which can cross the blood-brain barrier and directly influence neuronal health. The current diagnostic system analyzes a specific panel of approximately 33 molecules that have been identified as key indicators of cognitive status. This approach is unique because it captures the dynamic, real-time interaction between the patient’s diet, their microbiome, and their systemic health, providing a much more nuanced picture than a static genetic test ever could.

Performance is judged on two standard measures: sensitivity, the share of affected individuals the test correctly flags, and specificity, the share of unaffected individuals it correctly clears. The value of this component lies in its ability to detect “Subjective Memory Lapses” (SML), a state in which patients feel a decline that standard clinical tests cannot yet measure. By identifying shifts in specific short-chain fatty acids or amino acid derivatives, the technology can flag an individual’s risk long before cognitive impairment becomes measurable. This is the critical differentiator: while other approaches focus on structural damage in the brain, this technology focuses on the body’s chemical environment. It treats the brain not as an island but as the recipient of the gut’s biochemical output, which opens a much earlier window for intervention.
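
To make the two performance measures concrete, the short Python sketch below computes sensitivity and specificity from the four possible outcomes of a screening test. The class name, function names, and counts are invented for illustration and are not figures from any study discussed in this review.

```python
from dataclasses import dataclass


@dataclass
class ScreeningCounts:
    """Outcome counts for a binary screening test (illustrative numbers only)."""
    true_positives: int   # affected and flagged
    false_negatives: int  # affected but missed
    true_negatives: int   # unaffected and cleared
    false_positives: int  # unaffected but flagged


def sensitivity(c: ScreeningCounts) -> float:
    """Share of affected individuals that the test flags."""
    return c.true_positives / (c.true_positives + c.false_negatives)


def specificity(c: ScreeningCounts) -> float:
    """Share of unaffected individuals that the test clears."""
    return c.true_negatives / (c.true_negatives + c.false_positives)


# Made-up counts for 100 hypothetical patients; not results from any trial.
counts = ScreeningCounts(true_positives=40, false_negatives=10,
                         true_negatives=45, false_positives=5)
print(f"sensitivity = {sensitivity(counts):.2f}")  # 40 / 50
print(f"specificity = {specificity(counts):.2f}")  # 45 / 50
```

A test tuned for early screening usually favors sensitivity, accepting some false alarms in exchange for missing fewer people in the earliest stages.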

AI-Powered Machine Learning for Pattern Recognition

The sheer volume of data generated by metabolomic profiling would be overwhelming for human analysts, which is why AI-powered machine learning is an indispensable component of the system. These algorithms are trained to recognize complex, non-linear relationships between various metabolite concentrations and cognitive health. Instead of looking for a single “smoking gun” molecule, the AI identifies a signature—a specific combination of several markers that together indicate a high probability of Mild Cognitive Impairment (MCI). This implementation is unique because it uses a refined model that can achieve an accuracy rate of nearly 80 percent by focusing on as few as six key metabolites. This streamlining of data makes the diagnostic process faster, more cost-effective, and easier to integrate into standard laboratory workflows.
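
The idea of shrinking a 33-molecule panel to six markers can be illustrated with a deliberately simple stand-in. The sketch below ranks each metabolite by how strongly its levels differ between two groups and keeps the top six. This univariate ranking is an assumption made for illustration, not the non-linear model described above, and the metabolite names (`m01` to `m33`) and all measurements are synthetic placeholders.

```python
import random
import statistics


def effect_size(group_a, group_b):
    """Absolute difference in means, scaled by the pooled standard deviation."""
    pooled_sd = ((statistics.pvariance(group_a) + statistics.pvariance(group_b)) / 2) ** 0.5
    return abs(statistics.fmean(group_a) - statistics.fmean(group_b)) / pooled_sd


def select_panel(healthy, impaired, k=6):
    """Return the k metabolite names whose levels separate the two groups most."""
    scores = {name: effect_size(healthy[name], impaired[name]) for name in healthy}
    return sorted(scores, key=scores.get, reverse=True)[:k]


# Synthetic data only: 33 placeholder metabolites, six of which are made to differ.
rng = random.Random(0)
names = [f"m{i:02d}" for i in range(1, 34)]
informative = set(names[:6])


def simulate(shifted):
    """Draw 200 readings per metabolite; the shifted group moves the informative six."""
    return {
        name: [rng.gauss(2.0 if shifted and name in informative else 0.0, 1.0)
               for _ in range(200)]
        for name in names
    }


healthy = simulate(shifted=False)
impaired = simulate(shifted=True)
panel = select_panel(healthy, impaired)
print(sorted(panel))
```

On this synthetic data the ranking should recover the six shifted metabolites. Real metabolite panels are far noisier, which is one reason a production system would rely on a trained model and cross-validation rather than a single ranking pass.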

From a performance standpoint, the AI’s ability to categorize individuals into distinct groups—healthy, MCI, or SML—represents a massive leap in clinical precision. The machine learning models are designed to minimize false positives while maintaining a high level of sensitivity for those in the earliest stages of concern. This matters for the industry because it provides a scalable way to screen large populations without the need for expensive imaging equipment like PET or MRI scanners. Moreover, the AI continues to learn and refine its predictive capabilities as more data points are added, ensuring that the diagnostic accuracy improves over time. The integration of advanced computer modeling has effectively bridged the gap between raw biological data and actionable clinical insights.
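
Sorting individuals into healthy, SML, and MCI groups is a multi-class classification problem. As a minimal sketch, the Python below uses a nearest-centroid rule: each group is summarized by its average profile, and a new sample is assigned to the closest average. This classifier is chosen for brevity and is an assumption, not the model used in the research, and the two-feature training values are invented.

```python
import math


def centroid(rows):
    """Component-wise mean of equally long feature vectors."""
    return [sum(column) / len(rows) for column in zip(*rows)]


def fit(training):
    """Map each group label to the centroid of its training vectors."""
    return {label: centroid(rows) for label, rows in training.items()}


def predict(centroids, sample):
    """Return the label whose centroid is nearest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(centroids[label], sample))


# Invented toy data with two features per person; a real profile would
# carry one value per metabolite in the panel.
training = {
    "healthy": [[1.0, 1.0], [1.2, 0.8], [0.8, 1.2]],
    "SML":     [[2.0, 2.0], [2.2, 1.8], [1.8, 2.2]],
    "MCI":     [[3.0, 3.0], [3.2, 2.8], [2.8, 3.2]],
}
model = fit(training)
print(predict(model, [2.9, 3.1]))  # prints MCI
```

The same interface extends to any number of features, so a six-metabolite signature would simply use six-element vectors.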

Current Trends in Proactive Neurological Screening

The landscape of neurological care is undergoing a significant transition from a “diagnose and treat” model toward a “predict and prevent” paradigm. One of the most influential trends is the increasing acceptance of non-invasive screening methods among the aging population. People are far more likely to participate in regular health screenings if the process involves a simple blood draw rather than invasive lumbar punctures or lengthy, stressful cognitive exams. This consumer-driven shift is pushing healthcare providers to adopt gut-brain diagnostics as a first-line screening tool. Furthermore, there is a growing trend of “biomarker-led” clinical trials, where pharmaceutical companies use these gut-derived signatures to identify suitable candidates for early-intervention therapies, significantly increasing the likelihood of clinical success.

Another emerging development is the integration of digital health tracking with biochemical diagnostics. Patients in 2026 are increasingly using wearable devices and mobile apps to track their diet and lifestyle, and there is a movement toward syncing this data with their gut-brain profiles. This trend allows clinicians to see how specific dietary changes or probiotic treatments directly affect the metabolite levels associated with cognitive health. This shift in industry behavior is creating a more holistic approach to geriatric medicine, where the focus is not just on the brain, but on the entire ecosystem of the body. The convergence of AI, metabolomics, and consumer health tech is accelerating the trajectory toward a future where dementia is managed long before it becomes a crisis.

Real-World Applications in Clinical Settings

In clinical settings, gut-brain axis diagnostics are being deployed as a powerful triage tool within primary care and geriatric clinics. For instance, in the United Kingdom and parts of Europe, these tests are being integrated into routine annual check-ups for individuals over the age of 50. By establishing a biochemical baseline for each patient, doctors can monitor shifts in the gut-brain axis over several years. If a patient’s profile begins to trend toward a “dementia signature,” they can be prioritized for more intensive monitoring or lifestyle interventions. This application is particularly valuable in rural or underserved areas where access to specialized neurologists and expensive imaging technology is limited, as the samples can be collected locally and sent to centralized labs for AI analysis.
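
Monitoring against a personal baseline can be sketched as a simple drift check: compare the latest reading of a metabolite with the mean and spread of the same patient's earlier readings. The function names, the threshold of two standard deviations, and the readings below are all assumptions for illustration; a clinical system would track many metabolites together and use validated cut-offs.

```python
import statistics


def drift_z_score(baseline_values, latest):
    """Baseline standard deviations separating the latest value from the baseline mean.

    Needs at least two baseline readings that are not all identical.
    """
    mean = statistics.fmean(baseline_values)
    spread = statistics.stdev(baseline_values)
    return (latest - mean) / spread


def flag_for_follow_up(baseline_values, latest, threshold=2.0):
    """True when the latest reading drifts beyond the (assumed) threshold."""
    return abs(drift_z_score(baseline_values, latest)) > threshold


# Hypothetical annual readings of one metabolite, in arbitrary units.
baseline = [10.0, 11.0, 9.0, 10.0, 10.0]
print(flag_for_follow_up(baseline, 12.0))  # prints True
print(flag_for_follow_up(baseline, 10.5))  # prints False
```

Because each patient is compared with their own history, this kind of check sidesteps some of the person-to-person variability that makes a universal reference range hard to define.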

Beyond general screening, this technology is finding unique use cases in personalized nutrition and preventive therapy. Some advanced clinics are now using these diagnostic profiles to prescribe “precision diets” designed to boost the population of beneficial gut bacteria that produce neuroprotective chemicals. For example, if a test reveals a deficiency in specific anti-inflammatory metabolites, the patient can be prescribed a targeted regimen of prebiotics and dietary adjustments to correct the imbalance. This represents a paradigm shift in how we treat the aging brain; instead of waiting for symptoms to appear and prescribing drugs, we are using the gut as a biological factory to produce the very chemicals the brain needs to stay healthy. This proactive implementation is proving to be both more effective and more palatable for patients than traditional pharmacological approaches.

Challenges and Barriers to Widespread Adoption

Despite the impressive performance of gut-brain axis diagnostics, several technical and regulatory hurdles remain. One of the primary technical challenges is the inherent variability of the human microbiome. Diet, geography, ethnicity, and even local water supplies can significantly alter the composition of gut bacteria, making it difficult to establish a single “universal” baseline for a healthy gut-brain axis. Researchers are working to mitigate this by developing localized AI models that account for regional dietary habits, but the complexity of human biology remains a formidable obstacle. Furthermore, the sensitivity of laboratory equipment must be maintained at an extremely high level to detect minute changes in metabolite concentrations, which can be affected by the quality of the sample and the conditions of its transport.

Regulatory and market barriers also stand in the way of widespread adoption. While the science is promising, health insurance providers and national health systems are often slow to cover new diagnostic tools until they are backed by long-term longitudinal data. Clinical hesitancy is a further obstacle: clinicians may be reluctant to rely on a gut-based test when traditional cognitive exams show no issues. Overcoming these barriers requires a shift in medical education, away from a brain-centric view of dementia and toward a more systemic understanding of neurodegeneration. Ongoing development efforts focus on large-scale validation studies that aim to demonstrate long-term cost-effectiveness by showing how early detection can reduce the overall financial burden of dementia care.

Future Outlook and the Path to Personalized Prevention

The future of gut-brain axis diagnostics points toward a world where personalized prevention is the standard of care for every aging adult. We are moving toward a period where the “black box” of neurodegeneration is fully illuminated by the biochemical signals of the gut. Potential breakthroughs in the next few years may include the development of at-home testing kits that allow individuals to monitor their gut-brain health with the same ease that they currently monitor their glucose or blood pressure. This level of accessibility would empower individuals to take control of their cognitive longevity, making proactive brain health a part of everyday life. As the database of microbial signatures grows, the AI will become even more precise, perhaps eventually identifying the specific type of dementia a person is at risk for, whether it be Alzheimer’s, vascular dementia, or Lewy body disease.

The long-term impact of this technology on society will likely be measured in the preservation of cognitive function for millions of people. By shifting the timeline of diagnosis back by a decade or more, we are opening a massive window for intervention that simply did not exist before. This could lead to the development of “microbiome-based therapeutics,” such as engineered probiotics designed to produce specific neuroprotective molecules directly in the gut. The industry is moving toward a synthesis of diagnostics and therapeutics, where the test not only identifies the problem but also points directly to the solution. This path to personalized prevention will fundamentally change the experience of aging, transforming dementia from an inevitable decline into a manageable, and perhaps even preventable, condition.

Assessment of Current State and Final Conclusions

This review of gut-brain axis diagnostics reveals a technology that stands at the intersection of biological complexity and computational precision. The University of East Anglia’s research indicates that the gut is not merely a digestive organ but a diagnostic window into the brain’s future. By using AI to decode the metabolomic signatures of gut bacteria, researchers have identified early warning signs of cognitive decline with an accuracy of nearly 80 percent, without the cost or invasiveness of brain imaging and lumbar puncture. The approach is particularly promising because it addresses the “silent” phase of neurodegeneration, giving clinicians an opportunity for intervention that was previously unavailable.

The findings suggest that integrating this technology into clinical settings is both feasible and necessary. Challenges around microbiome variability and regulatory approval persist, but the trajectory of the field remains positive. The transition from reactive to proactive medicine is supported by the accuracy of the machine-learning models and the non-invasive nature of the blood tests. Ultimately, the gut-brain axis represents a new frontier in neurology, offering a roadmap for a future in which dementia can be detected early and managed through personalized, biochemical interventions. The technology offers not just a new way to look at the brain but a new way to understand the interconnected nature of human health.