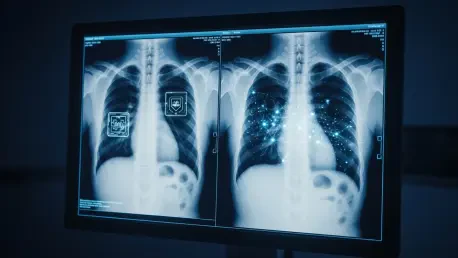

The digital fabrication of human anatomy has progressed from a niche computational experiment into a radiological reality that challenges the foundation of clinical evidence. Synthetic medical imaging, often termed “deepfake” radiology, no longer requires deep expertise in computer science to produce; instead, it leverages the same generative principles that power modern language models to construct anatomically plausible radiographs, CT scans, and MRIs. This shift changes how the medical community perceives visual data, moving from a paradigm of inherent trust to one of digital skepticism. While the technology offers a robust pathway for enhancing medical education and training artificial intelligence, it simultaneously introduces vulnerabilities that could compromise the integrity of diagnostic workflows and legal medical records.

Introduction: The Advent of Synthetic Clinical Imagery

The landscape of medical imaging is undergoing a profound transformation as generative models transition from specialized Generative Adversarial Networks (GANs) to diffusion-based architectures. These modern systems start from pure Gaussian noise and iteratively denoise it into structured, high-fidelity images that mirror the biological complexity of the human body. Unlike earlier models, which often produced blurry or anatomically inconsistent results, current systems can generate imagery from simple natural language prompts, effectively democratizing the creation of synthetic clinical data. This accessibility is the primary driver behind the technology’s rapid proliferation, as it allows researchers to bypass the traditional barriers of data acquisition.
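
To make the idea of refining noise into structure concrete, the following is a minimal sketch of a DDPM-style reverse diffusion loop, not the implementation of any model named in this review. It assumes a linear noise schedule and replaces the trained denoising network with a hypothetical `oracle_noise` function that already knows a single target image, so the names, step count, and schedule values are illustrative assumptions only.

```python
import numpy as np

def make_schedule(num_steps=200, beta_start=1e-4, beta_end=0.05):
    """Linear noise schedule (assumed values, chosen for illustration)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    alphas = 1.0 - betas
    alpha_bars = np.cumprod(alphas)
    return betas, alphas, alpha_bars

def oracle_noise(x_t, target, alpha_bar_t):
    """Stand-in for a trained denoiser (assumption).

    It returns the exact noise component only because the 'dataset'
    here is the single image `target`; a real model learns this
    prediction from many images.
    """
    return (x_t - np.sqrt(alpha_bar_t) * target) / np.sqrt(1.0 - alpha_bar_t)

def sample(target, num_steps=200, seed=0):
    """Start from Gaussian noise and apply the reverse update step by step."""
    rng = np.random.default_rng(seed)
    betas, alphas, alpha_bars = make_schedule(num_steps)
    x = rng.standard_normal(target.shape)  # pure noise at the final timestep
    for t in range(num_steps - 1, -1, -1):
        eps_hat = oracle_noise(x, target, alpha_bars[t])
        # Remove the predicted noise contribution for this step.
        mean = (x - betas[t] / np.sqrt(1.0 - alpha_bars[t]) * eps_hat) / np.sqrt(alphas[t])
        # Fresh noise is injected at every step except the last one.
        noise = rng.standard_normal(target.shape) if t > 0 else 0.0
        x = mean + np.sqrt(betas[t]) * noise
    return x

# Toy 8x8 "image": a bright square on a dark background.
target = np.zeros((8, 8))
target[2:6, 2:6] = 1.0
restored = sample(target)
```

Because the oracle knows the target, the loop ends exactly on that image. A trained model would instead produce a new sample from the distribution it learned, which is the step that lets a text prompt steer the result.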

The emergence of these “vision-language” models has bridged the gap between textual descriptions and visual representation, allowing for the creation of specific pathological scenarios on demand. However, this advancement is a double-edged sword. While it provides a solution to the persistent problem of data scarcity in medical research, it also creates an environment where the authenticity of a radiograph can no longer be assumed. The intersection of advanced computer vision and clinical diagnostics has reached a point where synthetic images are frequently indistinguishable from those captured by physical hardware, necessitating a reevaluation of how medical evidence is verified.

Key Components: The Architecture of Medical Image Synthesis

Modern deepfake imaging relies heavily on diffusion models such as RoentGen and on multimodal large language models such as GPT-4o and GPT-5. These architectures do not simply copy existing images; they learn the underlying statistical distribution of human anatomy. By capturing the relationship between bone density, soft-tissue texture, and spatial orientation, these models can synthesize entirely new patients who exist only in the digital realm. This capability is significant because it allows for the generation of “edge cases” (rare conditions that are seldom captured in traditional clinical datasets), thereby providing a more comprehensive training ground for both human students and automated diagnostic tools.

The realism achieved by these models is not merely aesthetic but functional. Synthetic images often preserve diagnostically relevant detail, meaning that a radiologist can identify specific fractures, tumors, or infections within a fake scan just as they would in a real one. This level of pathological realism is what makes the technology both impressive and dangerous. The approach differs from traditional simulation software in that it captures the “jagged” complexity and subtle noise characteristic of real-world imaging sensors. Consequently, the synthetic output avoids the sterilized look of older computer-generated imagery, successfully crossing the “uncanny valley” that previously signaled a file was fabricated.

Recent Developments: The Evolution of Vision-Language Capabilities

A significant trend in this sector is the shift toward organ-specific models that specialize in high-resolution thoracic or musculoskeletal imaging. These specialized systems are trained on curated datasets to master the nuances of specific biological systems, further enhancing the photorealism of digital fabrications. Furthermore, the development of GPT-5 and similar architectures has introduced a bidirectional capability where models can both generate an image and provide a detailed clinical description of its findings. This closing of the loop between visual and textual data makes the synthetic records appear even more legitimate to the casual observer or an unsuspecting medical professional.

Moreover, a technological “arms race” is currently unfolding between image generation and detection. As synthesis models become more adept at hiding digital artifacts, developers are simultaneously creating AI-based detectors to find the “telltale signs” of fabrication. These signs often include unnatural bone smoothness or a uniform grain that lacks the stochastic noise found in physical captures. However, as generative models are trained against these detectors, the artifacts are being phased out. This iterative cycle of improvement suggests that the window for human-led detection is closing, as even seasoned radiologists now struggle to identify deepfakes with consistent accuracy.
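
The “uniform grain” cue can be illustrated with a simple statistic, offered here as a rough sketch and not as a validated detector. The hypothetical `grain_variation` function below isolates the high-frequency residual of an image and measures how much the noise level changes from patch to patch. The 3x3 blur, the patch size, and the two toy images are all assumptions made for the example; production detectors are typically learned models.

```python
import numpy as np

def box_blur3(img):
    """3x3 mean filter with edge padding (keeps the image size)."""
    padded = np.pad(img, 1, mode="edge")
    h, w = img.shape
    acc = np.zeros((h, w), dtype=float)
    for dy in range(3):
        for dx in range(3):
            acc += padded[dy:dy + h, dx:dx + w]
    return acc / 9.0

def grain_variation(img, patch=16):
    """Coefficient of variation of per-patch noise strength.

    Values near zero mean the grain is almost identical everywhere;
    larger values mean the noise level changes across the image.
    """
    residual = img - box_blur3(img)  # high-frequency content only
    h, w = residual.shape
    stds = []
    for y in range(0, h - patch + 1, patch):
        for x in range(0, w - patch + 1, patch):
            stds.append(residual[y:y + patch, x:x + patch].std())
    stds = np.asarray(stds)
    return stds.std() / stds.mean()

# Toy comparison on a smooth intensity ramp (illustrative data only).
rng = np.random.default_rng(0)
base = np.tile(np.linspace(0.1, 1.0, 128), (128, 1))
uniform_grain = base + 0.05 * rng.standard_normal(base.shape)
signal_dependent = base + 0.05 * base * rng.standard_normal(base.shape)

score_uniform = grain_variation(uniform_grain)
score_varying = grain_variation(signal_dependent)
```

In this toy setup the image whose noise scales with intensity scores higher than the one with constant grain. A generator trained against such a statistic could learn to mimic signal-dependent noise, which is why hand-built cues of this kind tend to have a short shelf life.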

Real-World Applications: Training and Data Augmentation

In the realm of medical education, synthetic imagery serves as a transformative tool for creating vast libraries of rare pathological cases. Traditional education often relies on the serendipity of which patients happen to walk through the hospital doors, which can leave gaps in a student’s exposure to infrequent diseases. Deepfake technology allows institutions to curate specific diagnostic scenarios without compromising patient privacy or violating HIPAA regulations. By providing a diverse array of synthetic cases, educators can ensure that the next generation of clinicians is prepared for the widest possible range of medical conditions.

Beyond education, developers utilize deepfake medical imaging to “balance” the datasets used for training diagnostic algorithms. Most clinical datasets are heavily weighted toward healthy or common cases, which can lead to AI bias or a failure to recognize rare pathologies. By generating synthetic examples of infrequent diseases, researchers can improve the robustness of clinical AI tools. This application is unique because it uses “fake” data to improve the “real” performance of diagnostic software, ensuring that automated systems are capable of handling the complexities of actual patient care with greater precision and fewer false negatives.
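
The arithmetic behind dataset balancing is simple enough to sketch. The hypothetical helper below counts the labels in a dataset and reports how many synthetic images each class would need to reach a chosen fraction of the majority class. The class names and counts are invented for illustration and do not come from any real dataset.

```python
from collections import Counter

def synthetic_quota(labels, target_ratio=1.0):
    """Number of synthetic samples needed per class.

    target_ratio=1.0 brings every class up to the majority count;
    0.5 brings every class up to half of it.
    """
    counts = Counter(labels)
    majority = max(counts.values())
    goal = int(round(majority * target_ratio))
    return {cls: max(0, goal - n) for cls, n in counts.items()}

# Illustrative, made-up class distribution.
labels = ["normal"] * 900 + ["pneumonia"] * 90 + ["pneumothorax"] * 10

full_balance = synthetic_quota(labels)
# {'normal': 0, 'pneumonia': 810, 'pneumothorax': 890}
half_balance = synthetic_quota(labels, target_ratio=0.5)
# {'normal': 0, 'pneumonia': 360, 'pneumothorax': 440}
```

Choosing a ratio below 1.0 is one way to limit how much of the training set is synthetic, which matters if the generator itself carries biases.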

Technical Challenges: Detection Limitations and Regulatory Risks

One of the primary hurdles facing the industry is the moderate accuracy of human detection; recent studies indicate that even experienced radiologists identify synthetic images correctly only about 75 percent of the time. The difficulty lies in the fact that as the technology improves, the digital markers of fabrication, such as inconsistent surgical materials or overly symmetric vertebral alignments, are disappearing. This makes human-led verification an increasingly unreliable defense against misinformation. Furthermore, the potential for insurance fraud and the fabrication of clinical trial data poses a significant obstacle to widespread market acceptance, as the financial incentives for misuse are substantial.

Regulatory bodies are currently grappling with the need for mandatory cryptographic watermarking and provenance standards. The challenge is to create a system where a digital file can be traced back to a verified medical capture device through a secure “chain of custody.” Without such standards, the medical field faces a systemic risk where the evidentiary value of imaging is undermined. The market must decide whether to prioritize the convenience of synthetic data generation or the security of clinical evidence, a trade-off that will define the regulatory landscape for years to come.
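
A provenance check of this kind can be sketched with standard-library primitives. The example below is an assumption-laden illustration, not a description of any existing or proposed standard: it binds an image's SHA-256 digest to a device identifier using an HMAC tag. The function names, the record layout, and the use of a shared secret key are all hypothetical; a real scheme would more likely rely on asymmetric signatures so that verifiers never hold the signing key.

```python
import hashlib
import hmac

def sign_capture(image_bytes, device_id, device_key):
    """Create a provenance record at capture time (illustrative layout)."""
    digest = hashlib.sha256(image_bytes).hexdigest()
    message = f"{device_id}:{digest}".encode("utf-8")
    tag = hmac.new(device_key, message, hashlib.sha256).hexdigest()
    return {"device_id": device_id, "sha256": digest, "tag": tag}

def verify_capture(image_bytes, record, device_key):
    """Recompute the tag from the received pixels and compare."""
    digest = hashlib.sha256(image_bytes).hexdigest()
    message = f"{record['device_id']}:{digest}".encode("utf-8")
    expected = hmac.new(device_key, message, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, record["tag"])

# Demo values only; a real key would live in secure device hardware.
key = b"demo-device-key"
original = b"raw pixel data from the scanner"
record = sign_capture(original, "CT-01", key)

ok_untouched = verify_capture(original, record, key)
ok_tampered = verify_capture(original + b"!", record, key)
```

Any change to the pixel data changes the digest, so verification fails for the altered copy. An image that arrives with no record at all cannot be verified, which is the case a provenance mandate is meant to surface.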

Future Outlook: The Trajectory of Digital Provenance

The trajectory of deepfake medical imaging points toward a state of total anatomical perfection, where digital variations will be virtually indistinguishable from biological irregularities. In response, the industry is moving toward the integration of automated deepfake detectors directly into Picture Archiving and Communication Systems (PACS). These systems will likely act as a first line of defense, flagging images that lack proper metadata or exhibit suspicious pixel patterns before they ever reach a radiologist’s desk. This shift suggests that in the future, the “provenance” of an image will become as critical to the diagnostic process as the image itself.
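
A first-line screening rule of the sort described above might look like the following sketch. It is a hypothetical illustration and does not reflect any vendor's PACS interface. The metadata is modeled as a plain dictionary keyed by DICOM attribute keywords, and the choice of required fields, the `detector_score` input, and the 0.8 threshold are all assumptions.

```python
# Assumed minimum set of attributes; a real policy would be site-specific.
REQUIRED_FIELDS = ("Modality", "Manufacturer", "StudyInstanceUID", "SOPInstanceUID")

def triage(metadata, detector_score=None, score_threshold=0.8):
    """Return the list of reasons an image should be held for review.

    An empty list means the image passes this first-line check.
    """
    reasons = []
    missing = [f for f in REQUIRED_FIELDS if not metadata.get(f)]
    if missing:
        reasons.append("missing metadata: " + ", ".join(missing))
    if detector_score is not None and detector_score >= score_threshold:
        reasons.append(
            f"detector score {detector_score:.2f} >= {score_threshold:.2f}"
        )
    return reasons

# Made-up example records.
complete = {
    "Modality": "CR",
    "Manufacturer": "ExampleVendor",
    "StudyInstanceUID": "1.2.3",
    "SOPInstanceUID": "1.2.3.4",
}
clean_result = triage(complete, detector_score=0.10)
flagged_result = triage({"Modality": "CR"}, detector_score=0.95)
```

Returning reasons instead of a bare yes or no gives the reviewing radiologist something to act on, and keeps the metadata check independent of whichever pixel-level detector supplies the score.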

Furthermore, breakthroughs in synthetic data may eventually allow for personalized medical simulations. Researchers could potentially use a patient’s actual scans to generate “what-if” scenarios, visualizing the potential progression of a disease or the outcome of a surgical intervention. While this offers exciting possibilities for precision medicine, it will require a rigorous ethical framework to prevent the spread of clinical misinformation. The long-term success of this technology depends on the ability of the medical community to maintain a balance between the creative potential of AI and the absolute necessity of clinical truth.

Summary of Findings and Assessment

This review of deepfake medical imaging reveals a technology that has reached a pivotal level of sophistication. The fidelity of synthetic radiographs and scans challenges the perception of even the most experienced medical professionals, rendering human intuition an insufficient defense against sophisticated diffusion models. While the technology offers transformative benefits for medical education and the balancing of AI training datasets, these advantages are tempered by the significant potential for misuse in fraud and clinical misinformation. The transition from high-barrier coding to accessible prompt-based generation is a primary catalyst for both innovation and risk.

In light of these findings, the industry needs a multi-layered strategy to preserve the integrity of diagnostic medicine. Relying on clinician education alone is an inadequate response to the disappearance of digital artifacts. Instead, the necessary next steps involve the implementation of mandatory cryptographic watermarking and the integration of automated AI screening tools within hospital networks. Moving forward, the medical community should work toward a “zero-trust” architecture for digital files, ensuring that every image can be verified through a secure chain of provenance. These measures are essential to ensure that the continued evolution of generative AI enhances, rather than undermines, the trustworthiness of modern clinical diagnostics.