The rapid evolution of diagnostic imaging has reached a critical juncture: modern scanners now produce more data than clinicians can analyze manually. Breast cancer diagnosis relies heavily on Dynamic Contrast-Enhanced Magnetic Resonance Imaging (DCE-MRI), a technique that reveals tissue vascularity and contrast uptake through a series of scans acquired over time. However, these images are inherently complex: tumor morphology varies widely and enhancement patterns can be subtle, which makes consistent, precise delineation a significant challenge for radiologists. In response to this need for objective and efficient tools, the Residual-guided Spatiotemporal Transformer Graph fusion enhancement (RST2G) framework emerged to automate the segmentation process. The model applies deep learning to four-dimensional data and, by addressing the high degree of tumor heterogeneity across diverse patient populations, reaches a level of accuracy that was previously difficult to achieve in clinical settings.

Bridging the Gap Between Time and Space

Harnessing Spatiotemporal Dynamics

The primary innovation of the RST2G framework lies in its capacity to process DCE-MRI not merely as a collection of static 3D images, but as a cohesive four-dimensional dataset that captures the critical element of time. Traditional segmentation models frequently struggle to interpret the subtle “wash-in” and “wash-out” patterns of contrast agents, which are essential markers for identifying malignant tissue growth and angiogenesis. By treating these temporal changes as primary diagnostic signals, the RST2G system maps the functional behavior of a tumor throughout the entire scanning sequence. This approach allows the algorithm to differentiate between benign glandular tissue and aggressive malignancies that might appear similar in a single static frame. Because the model takes inputs from multiple scan phases, it can capture the full kinetic profile of the contrast agent, providing functional insight that spatial-only analysis cannot offer.
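To make the “wash-in” and “wash-out” idea concrete, the sketch below computes two relative-enhancement values for a single voxel and labels the curve shape. It is only an illustration of contrast kinetics in general, not the way RST2G itself extracts temporal features; the function name `classify_curve`, the three-point simplification, and the 10% tolerance are assumptions chosen for the example.

```python
def classify_curve(curve, tolerance=0.10):
    """Summarise one voxel's signal-intensity curve (hypothetical helper).

    curve[0]  : pre-contrast baseline
    curve[1]  : first post-contrast phase (early enhancement)
    curve[-1] : last post-contrast phase (delayed enhancement)
    The 10% tolerance is an assumed threshold for this sketch.
    """
    if len(curve) < 3:
        raise ValueError("need a baseline and at least two post-contrast phases")
    baseline, early, late = curve[0], curve[1], curve[-1]
    if baseline <= 0 or early <= 0:
        raise ValueError("baseline and early signal must be positive")

    # Wash-in: relative signal increase from baseline to the early phase.
    wash_in = (early - baseline) / baseline
    # Delayed change: relative change from the early phase to the last phase.
    delayed = (late - early) / early

    if delayed > tolerance:
        shape = "persistent"   # signal keeps rising
    elif delayed < -tolerance:
        shape = "washout"      # signal drops after the early peak
    else:
        shape = "plateau"      # signal stays roughly level
    return wash_in, delayed, shape


wash_in, delayed, shape = classify_curve([100.0, 220.0, 180.0])
print(shape)
```

A curve that rises quickly and then falls is labelled as washout here, while one that keeps rising is labelled persistent; a learned model would consume the full time series rather than three hand-picked points.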

Beyond merely identifying the presence of a lesion, the spatiotemporal focus of this framework enables a more nuanced understanding of internal tumor structures and their blood supply. By analyzing how the contrast agent permeates different regions of the suspicious mass over several minutes, the model can highlight areas of necrosis or high vascular density that are often difficult to discern by eye during initial screenings. This granular level of detail is achieved through an alignment process that synchronizes spatial features across multiple time points, so that patient movement or physiological changes during the MRI session are less likely to compromise the final output. Consequently, the framework provides a robust representation of the tumor’s biological signature, which is vital for early intervention and the development of targeted therapy. This methodology bridges the gap between traditional morphology and advanced physiological imaging, and it raises the bar for automated oncology tools.

A Fusion of Modern Architectures

At its technical core, the framework utilizes a hybrid architecture that seamlessly combines the localized precision of Convolutional Neural Networks (CNNs) with the expansive global context provided by Transformer modules. This specific component, often referred to as the CFormerEncoder, allows the system to recognize fine-grained textures and sharp edges while simultaneously understanding the long-range relationships across the entire volume of the breast tissue. While CNNs are excellent at detecting the specific boundaries of a tumor, they often lose track of the larger anatomical context; conversely, Transformers excel at global attention but may miss minute details. By fusing these two methodologies, RST2G ensures that neither the small-scale morphological features nor the large-scale spatial orientations are neglected during the segmentation process. This dual-stream processing is crucial for accurately delineating tumors that exhibit irregular shapes or those that are located near complex anatomical structures.
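The difference between local and global context can be seen in a deliberately tiny, one-dimensional toy. The sketch below is not the CFormerEncoder; the function names, the three-tap kernel, and the scalar attention are all assumptions made for illustration. A convolution-style branch sees only immediate neighbours, an attention-style branch mixes every position, and the two are summed.

```python
import math


def local_branch(x, kernel=(0.25, 0.5, 0.25)):
    """Convolution-like branch: each output depends only on nearby inputs."""
    half = len(kernel) // 2
    out = []
    for i in range(len(x)):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = i + k - half
            if 0 <= j < len(x):  # zero padding outside the signal
                acc += w * x[j]
        out.append(acc)
    return out


def global_branch(x):
    """Attention-like branch: each output is a softmax-weighted mix of ALL inputs."""
    out = []
    for q in x:
        scores = [q * k for k in x]                   # scalar query-key products
        m = max(scores)                               # subtract max for stability
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append(sum(w * v for w, v in zip(weights, x)) / total)
    return out


def fuse(x):
    """Fuse the two branches by element-wise addition (one simple choice)."""
    return [l + g for l, g in zip(local_branch(x), global_branch(x))]


signal = [0.0, 0.0, 1.0, 0.0, 0.0]   # a single "lesion" in the middle
print(local_branch(signal)[0], global_branch(signal)[0])
```

At position 0 the local branch returns zero because the spike lies outside its three-sample window, whereas the global branch still registers it. Real hybrid encoders operate on learned multi-channel 3D features, but the receptive-field contrast is the same.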

Furthermore, the inclusion of Spatiotemporal Graph Enhancement introduces a non-Euclidean approach to data processing, treating different image regions and time points as interconnected nodes within a graph structure. This allows the model to explicitly preserve and analyze the relationship between spatial structures and their corresponding temporal dynamics throughout the imaging sequence. By mapping these connections, the framework can effectively filter out background noise and focus its computational power on the most relevant features of the malignancy. The implementation of a Residual-guided Multi-Scale Refinement (MSR) module further enhances this process by preventing the loss of critical information during the deep layers of neural network processing. This multi-layered strategy ensures that the final segmentation mask is both accurate in its general placement and precise in its boundary definition, representing a significant technical advancement over previous generations of medical imaging artificial intelligence.
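A minimal sketch of the graph idea follows, assuming that each node is a (region, phase) pair, that spatial edges link adjacent regions within one phase, and that temporal edges link the same region across consecutive phases. These construction rules, the function names, and the mean-aggregation step are illustrative assumptions, not the published Spatiotemporal Graph Enhancement module.

```python
def build_spatiotemporal_graph(num_regions, num_phases, spatial_neighbors):
    """Return an adjacency dict: (region, phase) -> set of neighbouring nodes.

    spatial_neighbors is a hypothetical input mapping a region index to the
    indices of regions that touch it.
    """
    edges = {(r, t): set() for r in range(num_regions) for t in range(num_phases)}
    for r in range(num_regions):
        for t in range(num_phases):
            # Spatial edges: adjacent regions within the same phase.
            for n in spatial_neighbors.get(r, ()):
                edges[(r, t)].add((n, t))
                edges[(n, t)].add((r, t))
            # Temporal edges: the same region in the next phase.
            if t + 1 < num_phases:
                edges[(r, t)].add((r, t + 1))
                edges[(r, t + 1)].add((r, t))
    return edges


def aggregate(features, edges):
    """One round of message passing: average each node with its neighbours."""
    out = {}
    for node, neighbours in edges.items():
        values = [features[node]] + [features[n] for n in neighbours]
        out[node] = sum(values) / len(values)
    return out


graph = build_spatiotemporal_graph(2, 3, {0: [1]})
features = {node: 0.0 for node in graph}
features[(0, 1)] = 3.0                      # strong enhancement in region 0, phase 1
smoothed = aggregate(features, graph)
print(smoothed[(0, 0)], smoothed[(1, 2)])
```

After one round, the enhancement at region 0 in phase 1 has spread to its spatial and temporal neighbours but not to nodes two hops away, which is how graph layers propagate information along explicit connections rather than along a fixed grid.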

Validating Precision in Complex Scenarios

Surpassing Industry Standards in Accuracy

Empirical testing on major datasets, such as TCGA-BRCA, has demonstrated that the RST2G framework significantly outperforms existing state-of-the-art models currently utilized in research and clinical pilot programs. With a Dice Similarity Coefficient reaching as high as 80.1%, the system shows substantial overlap with the tumor boundaries identified by senior radiologists. These metrics are not merely academic milestones; they translate directly into more precise tumor volume measurements, which are essential for clinical decision-making. When a model can accurately quantify the dimensions of a malignancy, healthcare providers can better determine the stage of the disease and select the most appropriate surgical or radiological intervention. This precision reduces the likelihood of overestimating or underestimating the extent of the cancer, a frequent complication in manual segmentation due to the “fuzzy” boundaries often found in dense breast tissue.
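For readers unfamiliar with the metric, the Dice Similarity Coefficient is twice the overlap between two masks divided by their combined size, so 1.0 means identical masks and 0.0 means no overlap. The sketch below is a plain-Python illustration on flattened binary masks; the function name and the choice to return 1.0 when both masks are empty are assumptions, and the example masks are invented rather than taken from any dataset.

```python
def dice_coefficient(pred, truth):
    """Dice = 2 * |pred AND truth| / (|pred| + |truth|) for flat binary masks."""
    if len(pred) != len(truth):
        raise ValueError("masks must have the same number of voxels")
    intersection = sum(1 for p, t in zip(pred, truth) if p and t)
    total = sum(1 for p in pred if p) + sum(1 for t in truth if t)
    if total == 0:
        # Both masks empty: treated here as perfect agreement (a convention).
        return 1.0
    return 2.0 * intersection / total


predicted = [1, 1, 1, 0, 0, 0]   # toy model output
reference = [0, 1, 1, 1, 0, 0]   # toy radiologist annotation
print(round(dice_coefficient(predicted, reference), 3))
```

In this toy case two of the three voxels in each mask coincide, giving a score of about 0.667; a reported value near 0.80 therefore indicates strong but not perfect agreement with the reference annotation.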

Moreover, the consistency of these results across different patient cohorts highlights the framework’s reliability in handling the inherent variability of breast cancer presentations. Whether dealing with small, localized lesions or larger, more diffuse masses, the model maintains a high degree of fidelity in its output. This reliability is particularly vital for clinicians who need to monitor whether a malignancy is responding to neoadjuvant chemotherapy. If the automated system detects even a minor decrease in tumor volume or a change in its enhancement pattern over time, it provides objective evidence that a specific treatment strategy is effective. Conversely, a lack of change might prompt an immediate pivot to a different drug or intervention, potentially saving valuable time in a patient’s recovery journey. By providing these quantifiable insights, the framework transforms subjective visual assessments into data-driven clinical protocols that prioritize patient outcomes and medical efficiency.
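The volume tracking described above reduces to simple arithmetic once a segmentation mask exists: count the tumor voxels, multiply by the physical size of one voxel, and compare two time points. The sketch below assumes flattened binary masks and a known voxel spacing in millimetres; the function names and the example numbers are hypothetical.

```python
def tumor_volume_ml(mask, voxel_spacing_mm):
    """Volume in millilitres from a flat binary mask and (dx, dy, dz) spacing in mm."""
    dx, dy, dz = voxel_spacing_mm
    voxel_mm3 = dx * dy * dz
    tumor_voxels = sum(1 for v in mask if v)
    return tumor_voxels * voxel_mm3 / 1000.0   # 1 mL = 1000 cubic millimetres


def percent_volume_change(baseline_ml, followup_ml):
    """Signed percentage change relative to the baseline scan."""
    if baseline_ml <= 0:
        raise ValueError("baseline volume must be positive")
    return 100.0 * (followup_ml - baseline_ml) / baseline_ml


# Invented example: 2000 tumor voxels before treatment, 1500 afterwards.
before = tumor_volume_ml([1] * 2000 + [0] * 500, (1.0, 1.0, 1.0))
after = tumor_volume_ml([1] * 1500 + [0] * 1000, (1.0, 1.0, 1.0))
print(before, after, percent_volume_change(before, after))
```

Because the result depends directly on the voxel count, any boundary error in the segmentation carries straight through to the reported change, which is why segmentation accuracy matters for treatment monitoring.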

Ensuring Consistency Across Datasets

Beyond its raw accuracy, the model exhibits robustness when tested against diverse medical datasets from various international centers, which supports its utility in real-world environments. This adaptability suggests that RST2G can handle the diverse “noise” typical of clinical settings, including variations in MRI scanner models, field strengths, and imaging protocols. In many instances, an artificial intelligence model trained on data from one hospital fails to perform when applied to data from another due to subtle differences in how the images are captured. However, the RST2G framework was designed with generalization in mind, utilizing its hybrid architecture to ignore hardware-specific artifacts and focus on the biological markers of cancer. Generalizing across scanners and sites is a major hurdle for medical AI, and overcoming it marks a significant step toward the widespread adoption of automated segmentation tools in global healthcare systems.

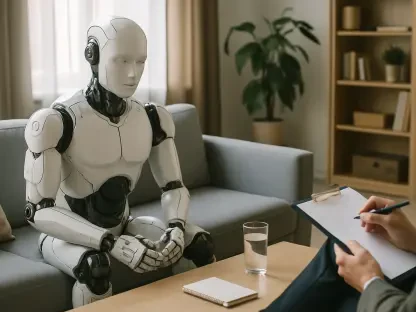

Visual validation through Gradient-weighted Class Activation Mapping (Grad-CAM) further confirms that the AI focuses on the same clinically relevant regions that a human radiologist would prioritize during an exam. This transparency is essential for building trust among medical professionals who must rely on these automated findings for critical diagnoses. By providing a “heat map” of the areas that influenced its decision, the system allows doctors to verify the logic behind the segmentation, ensuring that the model is not being misled by benign artifacts or imaging flaws. This interpretability ensures that the framework acts as a collaborative tool rather than a “black box,” enhancing the radiologist’s expertise rather than replacing it. The ability to maintain such high standards of consistency and transparency across multiple datasets suggests that the framework is ready for the rigors of high-volume clinical practice, where reliability and clarity are the most important factors for any diagnostic technology.

The Path Toward Clinical Integration

Streamlining Hospital Workflows

The practical utility of this framework is highlighted by its computational efficiency: it can process a full MRI volume in approximately 30 seconds using standard GPU hardware. In the high-pressure environment of a modern hospital, this speed allows for near-real-time deployment, which can significantly reduce the administrative and diagnostic workload on medical staff. Instead of spending hours manually tracing the boundaries of complex tumors across dozens of MRI slices, radiologists can use the automated output as a high-fidelity baseline for their final review. This shift not only accelerates the diagnostic pipeline but also allows specialists to dedicate more of their time to patient consultation and complex case management. Because the system runs on standard hardware, it can be integrated into existing radiology software suites without requiring expensive infrastructure upgrades, making it an accessible solution for both large research hospitals and smaller regional clinics.

By providing a consistent and automated baseline for every scan, the system also mitigates the pervasive issue of “inter-observer variability,” which occurs when different experts disagree on the exact boundaries of a tumor. Studies have shown that even highly experienced radiologists may produce slightly different segmentations for the same patient, leading to inconsistencies in treatment planning. The RST2G framework reduces this subjectivity by applying the same mathematical procedure to every image, so that the same scan always yields the same segmentation output. This standardization is crucial for large-scale clinical trials and long-term patient monitoring, where consistency over several months or years is necessary to track the progression of the disease. Consequently, the framework serves as a stabilizing force in the diagnostic process, providing a reliable second opinion that enhances the overall quality of care while speeding up the delivery of results to anxious patients.

Shaping the Future of Personalized Oncology

The development and validation of the RST2G framework signals a broader shift toward the use of flexible, non-Euclidean data processing in the field of medical artificial intelligence. As the medical community moves closer to the goal of truly personalized medicine, the ability of such models to provide longitudinal monitoring becomes indispensable. By analyzing the biological behavior of cancer over time through 4D imaging, the system allows for a deeper understanding of how individual tumors react to specific therapeutic agents. This data-driven approach helps base patient care on the unique physiological response of the malignancy rather than on generalized statistics. The successful implementation of graph-based learning in this context opens the door for future iterations to handle even more complex variables, such as irregular temporal sampling or the integration of genetic data into the imaging analysis, further refining the accuracy of automated oncology.

The practical steps forward focus on integrating these tools into the daily diagnostic software used by oncologists and on expanding the system’s training to even more diverse populations. Researchers are working to ensure that the framework remains accurate regardless of the specific timing intervals between MRI scans, a common real-world challenge that often confounded earlier models. By prioritizing temporal flexibility and multi-center validation, the framework provides a blueprint for how AI can be responsibly deployed in life-saving medical applications. Its results indicate that combining spatial context with temporal dynamics is an effective way to address the complexities of breast cancer imaging. The transition from manual, error-prone segmentation to an automated, high-precision system represents a fundamental improvement in the speed and accuracy of cancer care, establishing a foundation for the next generation of intelligent diagnostic assistants that will continue to evolve alongside clinical needs.