Lukas Hainz sits down with Ivan Kairatov, a distinguished biopharma expert with a deep background in research and development and a keen eye for technological innovation within the pharmaceutical industry. Together, they explore a recent breakthrough from the University of Cambridge that reimagines one of the most fundamental reactions in organic chemistry.

The conversation centers on a newly developed “anti-Friedel–Crafts” reaction that utilizes LED light to facilitate carbon–carbon bond formation at the final stages of drug synthesis. Kairatov provides insights into how this method moves away from harsh, metal-catalyzed processes toward a cleaner, more efficient model. They discuss the role of serendipity in the laboratory, the integration of machine learning to predict molecular reactivity, and the practical implications for environmental sustainability and speed in modern drug development.

Traditional Friedel–Crafts reactions often require harsh conditions and heavy metal catalysts, limiting them to early-stage synthesis. How does using LED light at ambient temperature fundamentally change the reaction mechanism, and what specific “self-sustaining chain processes” allow for these results without toxic reagents?

The shift to LED light moves us away from the brute-force approach of traditional synthesis, where we rely on high heat and aggressive reagents to force a reaction. In this new “anti-Friedel–Crafts” approach, the light serves as a precise energy source that triggers an electron donor–acceptor photoinitiation. This activation starts a self-sustaining chain process in which the molecules themselves propagate the reaction once the lamp has provided the initial spark. Because this happens at ambient temperature, we avoid the thermal degradation that typically plagues complex molecules, allowing for the formation of one or two critical bonds without expensive or toxic heavy metal catalysts. It is a more elegant, “natural” way to handle high-energy transformations by letting light do the heavy lifting.

Modifying complex molecules usually involves dismantling and rebuilding structures over several months. Since this new method enables late-stage functionalization, could you describe the step-by-step workflow for a medicinal chemist and explain how “high functional-group tolerance” protects the sensitive regions of a drug?

In a traditional workflow, if a chemist wants to test a minor structural tweak, they might spend three to six months dismantling a molecule and rebuilding it from the ground up just to incorporate that change. With this light-driven method, the workflow is flipped: the chemist takes the nearly finished “hit” molecule and applies the modification directly as a final step. The “high functional-group tolerance” is the hero here because it makes the reaction act like a surgical laser, targeting only the specific carbon site we want to change while leaving sensitive regions, such as amines or alcohols, completely untouched. This means we no longer have to worry about the reaction “chewing up” the rest of the drug, which is a massive leap forward for late-stage optimization. By performing these precise adjustments under mild conditions, we open up a chemical space that was previously considered too fragile to touch.

Pharmaceutical development is under pressure to reduce energy consumption and chemical waste. Beyond replacing heavy metals, what are the measurable environmental advantages of moving toward continuous-flow systems, and how do these efficiencies impact the overall cost and speed of bringing a new medicine to market?

Continuous-flow systems let us replace massive, energy-hungry batch reactors with a more streamlined, “always-on” production line. This breakthrough is particularly exciting because fewer synthetic steps inherently mean less chemical waste and a significantly smaller environmental footprint for every gram of medicine produced. When you shorten a sequence by even three or four steps, you are cutting out gallons of hazardous solvents and the energy required to heat and cool those reactions over several days. For a company like AstraZeneca, these efficiencies translate directly into a faster “design-make-test” cycle, potentially shaving months off the development timeline. Lowering the cost of goods and accelerating the path to the clinic is a win-win for both the industry’s bottom line and the planet.

This discovery reportedly stemmed from a failed control experiment where a reaction worked better without the intended catalyst. Could you share an anecdote regarding how such “diamonds in the rough” are identified in a data-heavy lab environment and how researchers distinguish a genuine breakthrough from a simple error?

In a modern lab, we are drowning in data, and it is incredibly easy to look at an “incorrect” result and toss it in the bin as a mistake or a contaminated sample. This discovery happened because the researcher, David Vahey, noticed that the reaction worked better when the catalyst was removed—a result that logically shouldn’t have happened. Instead of dismissing it as a fluke or a “failed” control, he had the professional intuition to stop and ask why the mess in the flask contained the desired product. It takes a certain level of courage to pause a structured project to investigate a “wrong” number, but that is exactly where the most famous discoveries, like penicillin or X-rays, were born. Distinguishing a breakthrough from an error requires that human element of judgment—the ability to see the “diamond” in a pile of failed experiments.

Machine learning was used to predict reactivity on molecules that had never been tested in a physical lab. How do these algorithms bridge the gap between established chemical patterns and unexplored “chemical space,” and what metrics do you use to validate the accuracy of these AI-driven simulations?

The AI models, developed with Trinity College Dublin, act as a bridge by learning the deep electronic patterns of known reactions and then “hallucinating” how those patterns would apply to 100 or 1,000 theoretical molecules. Rather than having a chemist perform endless trial and error at the bench, the algorithm identifies which molecules are most likely to react favorably based on their structural architecture. We validate these simulations by comparing the AI’s predictions against real-world spectroscopic data and yield percentages from the lab. This doesn’t replace the chemist, but it provides a map of the “chemical space,” telling us exactly where to look so we don’t waste time on reactions that are destined to fail. It’s about using technology to narrow the field of possibility to only the most promising candidates.
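As a rough illustration of what comparing predictions against lab yields can look like, the sketch below scores a set of predicted yields against measured ones. The two metrics (mean absolute error and a simple hit rate), the 50% threshold, and all of the numbers are assumptions made for illustration; the interview does not say which metrics the team actually used.

```python
from statistics import mean


def mean_absolute_error(predicted, measured):
    """Average gap, in yield percentage points, between prediction and experiment."""
    if len(predicted) != len(measured):
        raise ValueError("predicted and measured must have the same length")
    return mean(abs(p - m) for p, m in zip(predicted, measured))


def hit_rate(predicted, measured, threshold=50.0):
    """Of the molecules predicted at or above the threshold, the share that also measured at or above it."""
    picks = [m for p, m in zip(predicted, measured) if p >= threshold]
    if not picks:
        return 0.0
    return sum(m >= threshold for m in picks) / len(picks)


# Hypothetical yields (percent) for five candidate molecules; not real data
predicted_yields = [80.0, 65.0, 20.0, 55.0, 10.0]
measured_yields = [72.0, 40.0, 25.0, 60.0, 5.0]

mae = mean_absolute_error(predicted_yields, measured_yields)
hits = hit_rate(predicted_yields, measured_yields)
print(f"MAE: {mae:.1f} points, hit rate: {hits:.0%}")
```

A low error says the model's numbers are close to the bench results, while the hit rate speaks more directly to the "where to look" question: how often a molecule flagged as promising actually turns out to be one.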

Collaborations between academic researchers and industrial partners like AstraZeneca help bridge the gap between theory and practice. What are the primary logistical challenges when scaling a light-based reaction for large-scale pharmaceutical production, and how do these systems handle the requirements of highly selective carbon–carbon bond formation?

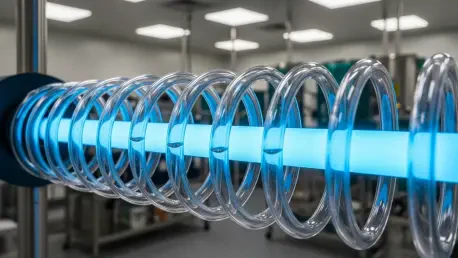

The primary logistical hurdle with light-based chemistry is “photon penetration”: ensuring that every part of a large-scale mixture receives the same amount of light energy as the contents of a tiny test tube. In a small lab vial, the LED light reaches everything, but in a 1,000-liter tank, the center stays dark, which is why the move to continuous-flow systems is so critical. By pumping the reaction through thin, transparent tubes wrapped around light sources, we can ensure uniform exposure and maintain the high selectivity required for carbon–carbon bond formation. Industry partners help by providing the engineering expertise to build these flow reactors, ensuring that the precision we see at Cambridge can be replicated on a metric-ton scale. This collaboration ensures that a “pretty” academic reaction can actually survive the rigors of a global manufacturing supply chain.
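The dark-center problem can be put in rough numbers with the Beer–Lambert law, under which the fraction of light transmitted through a solution is 10^(−ε·c·l) for molar absorptivity ε, concentration c, and path length l. The sketch below is illustrative only: the absorptivity and concentration are assumed values, not figures from the Cambridge work, and the law ignores scattering and the way concentrations change as the reaction proceeds.

```python
def transmitted_fraction(molar_absorptivity, concentration, path_cm):
    """Fraction of incident light remaining after path_cm of solution (Beer-Lambert law)."""
    absorbance = molar_absorptivity * concentration * path_cm
    return 10 ** (-absorbance)


# Hypothetical values chosen for illustration only
EPSILON = 100.0  # molar absorptivity in L mol^-1 cm^-1 (assumed)
CONC = 0.01      # absorbing species concentration in mol L^-1 (assumed)

tube = transmitted_fraction(EPSILON, CONC, 0.1)   # across 1 mm flow tubing
tank = transmitted_fraction(EPSILON, CONC, 50.0)  # 50 cm into a large tank

print(f"1 mm tube: {tube:.1%} of the light reaches the far wall")
print(f"50 cm into a tank: {tank:.1e} of the light remains")
```

With these assumed values, roughly 79% of the light crosses a 1 mm tube, while essentially none survives 50 cm into a tank. The exponential dependence on path length is the reason thin tubing, rather than a brighter lamp, is the usual answer.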

What is your forecast for the future of sustainable drug synthesis?

I believe we are entering an era of “biomimetic manufacturing,” where the pharmaceutical industry will increasingly abandon the 19th-century reliance on heavy metals and high heat in favor of light-driven and enzyme-based processes. Within the next 10 years, I expect that late-stage functionalization will become the standard operating procedure, allowing us to “edit” drug molecules as easily as we might edit a line of code. This shift will not only make the industry greener but will also unlock a vast library of complex, life-saving molecules that were previously too difficult or too expensive to synthesize. We are moving toward a future where the lab is cleaner, the development cycles are shorter, and the molecules we create are more precise than ever before.