The ability to watch individual protein molecules decide the fate of a living cell has long been a holy grail of molecular biology, yet for decades researchers remained trapped behind the blurry veil of static imaging. Traditional microscopy could show where a protein was located at a specific moment, but it could not capture the rapid, stochastic motion that actually drives gene expression. Fluctuation spectroscopy has emerged as a bridge between this qualitative past and a quantitative future, changing how we study the movement of transcription factors such as the Dorsal/NF-κB family. By analyzing the fluctuations, or “noise,” in fluorescence intensity from tagged proteins, the technique lets scientists estimate how fast molecules move, how many are present, and how often they engage their genomic targets.

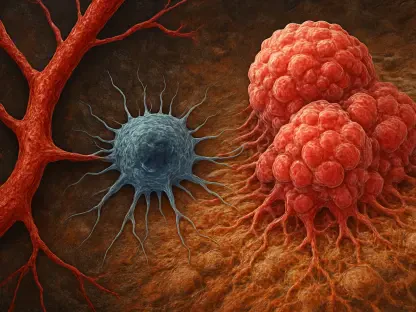

This shift represents a fundamental modernization of biological inquiry, moving away from subjective observation toward rigorous mathematical modeling of measurements collected over time. In the context of embryonic development and immune response, the stakes for such precision are high. NF-κB is not just another protein; it is a master regulator that, when misregulated, contributes to the uncontrolled inflammation seen in chronic diseases and to the unchecked survival and proliferation characteristic of cancer cells. Fluctuation spectroscopy provides a high-resolution, quantitative view of these dynamics, allowing researchers to move beyond simply seeing the protein and start measuring how efficiently it searches for and binds its targets within the nucleus.

Introduction to Quantitative Gene Regulation Analysis

At its core, fluctuation spectroscopy rests on a simple physical principle: when only a few fluorescent molecules occupy a tiny focal volume, each molecule entering or leaving that volume produces a measurable change in intensity. The timescale of these fluctuations reports how fast the molecules move, and their relative amplitude shrinks as the number of molecules grows, so it also reports concentration. This offers a window into transcription factor dynamics that static images cannot provide. Instead of a single “snapshot” of a cell, the method treats the nucleus as a dynamic chemical reactor in which a protein’s mobility serves as a readout of its functional state, for example whether it is free or bound to DNA. This quantitative approach matters because the measured parameters can feed predictive models that simulate how a cell might respond to environmental stress or drug treatment.
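For reference, the standard definitions from fluorescence correlation spectroscopy (FCS), one widely used form of fluctuation spectroscopy, make this principle concrete. The first line defines the normalized intensity autocorrelation; the second is the textbook model for a single species diffusing freely through a 3D Gaussian focal volume. The free-diffusion model is an idealization, and bound, slowed, or clustered molecules require additional terms.

$$
G(\tau) = \frac{\langle \delta I(t)\, \delta I(t+\tau) \rangle}{\langle I \rangle^{2}}, \qquad \delta I(t) = I(t) - \langle I \rangle
$$

$$
G(\tau) = \frac{1}{N} \left(1 + \frac{\tau}{\tau_D}\right)^{-1} \left(1 + \frac{\tau}{s^{2}\,\tau_D}\right)^{-1/2}, \qquad \tau_D = \frac{w_0^{2}}{4D}, \qquad s = \frac{z_0}{w_0}
$$

Here $N$ is the mean number of molecules in the effective focal volume, $w_0$ and $z_0$ are its lateral and axial radii, and $D$ is the diffusion coefficient. The amplitude $G(0) = 1/N$ reports concentration, and the decay time $\tau_D$ reports mobility.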

The relevance of this technology in the current scientific landscape is considerable. We are witnessing a transition from descriptive biology to an era of “cell engineering,” where the goal is to manipulate genetic outputs with the kind of reliability a software developer expects when modifying code. By capturing the behavior of proteins over time, fluctuation spectroscopy moves the field toward a more rigorous understanding of the genome. It replaces guesswork with quantitative measurement, so that models of gene regulation rest on the measured physical behavior of molecules rather than on idealized theoretical assumptions.

Core Components and Methodology of Fluctuation Spectroscopy

Mobility-Based Molecular Categorization

One of the most impressive features of fluctuation spectroscopy is its ability to distinguish between populations of the same protein based solely on how fast they diffuse. Within a nucleus, Dorsal molecules occupy a spectrum of mobility states. The technique sorts these into fast-diffusing molecules, which are searching for targets; slow-moving molecules, which may be hindered by the local nuclear environment; and immobile, DNA-bound molecules. This granularity sets the method apart from standard fluorescence microscopy, which reports only the combined signal of all labeled proteins as a single, undifferentiated mass.
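The three-way categorization above can be sketched in a few lines. This is a minimal illustration, assuming each population has already been assigned a diffusion time τ_D (for example, from a multi-component fit) and that the free-diffusion relation D = w0²/(4τ_D) applies. The beam waist `W0_UM` and the thresholds `IMMOBILE_MAX` and `SLOW_MAX` are hypothetical values, and treating “immobile” as a very small D is a simplification.

```python
# Minimal sketch: convert a fitted diffusion time into a diffusion coefficient
# and sort it into the three mobility classes described in the text.
# ASSUMPTIONS: free 3D diffusion (tau_D = w0**2 / (4 * D)); the beam waist and
# the class thresholds below are hypothetical illustration values.

W0_UM = 0.2          # hypothetical lateral focal radius, micrometres
IMMOBILE_MAX = 0.05  # hypothetical upper bound for "immobile", um^2/s
SLOW_MAX = 1.0       # hypothetical upper bound for "slow", um^2/s


def diffusion_coefficient(tau_d_s: float, w0_um: float = W0_UM) -> float:
    """Return D in um^2/s from the diffusion time tau_D in seconds."""
    if tau_d_s <= 0:
        raise ValueError("tau_D must be positive")
    return w0_um ** 2 / (4.0 * tau_d_s)


def classify(d_um2_s: float) -> str:
    """Assign a mobility class using the hypothetical thresholds above."""
    if d_um2_s < IMMOBILE_MAX:
        return "immobile"
    if d_um2_s < SLOW_MAX:
        return "slow"
    return "fast"


if __name__ == "__main__":
    for tau in (0.001, 0.02, 2.0):  # hypothetical diffusion times, seconds
        d = diffusion_coefficient(tau)
        print(f"tau_D = {tau} s -> D = {d:.3g} um^2/s ({classify(d)})")
```

Keeping the thresholds in named constants makes them easy to recalibrate for a particular instrument and protein.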

This categorization matters because it reveals the true “bioavailability” of a transcription factor. If, say, 90% of a protein is diffusing rapidly, most of it is not engaged with DNA and the cell is effectively in a “standby” mode, whereas a large immobile fraction indicates extensive binding at target sites, consistent with active transcription. By separating these states, researchers can tell whether a failure in gene expression stems from too little protein or from protein that fails to bind its targets. This distinction is vital for troubleshooting signaling defects, because it narrows down the specific mechanical step where a biological process has gone off the rails.
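The diagnostic logic in the preceding paragraph can be made explicit with a small sketch. It assumes the fraction of molecules in each mobility state is already known from an upstream analysis and treats the immobile fraction as a proxy for DNA-bound protein. The function names, the tolerance, and all concentrations are hypothetical, not measured data.

```python
# Minimal sketch: split a total concentration into mobility states, then ask
# whether a lower bound level reflects "too little protein" or "poor binding".
# ASSUMPTIONS: fractions come from an upstream fit; "immobile" stands in for
# DNA-bound; every number in the example is hypothetical.


def partition(total_nM: float, fractions: dict[str, float]) -> dict[str, float]:
    """Return the concentration (nM) in each state; fractions must sum to 1."""
    if abs(sum(fractions.values()) - 1.0) > 1e-6:
        raise ValueError("fractions must sum to 1")
    return {state: total_nM * f for state, f in fractions.items()}


def likely_cause(ref: dict[str, float], test: dict[str, float], tol: float = 0.1) -> str:
    """Compare a test condition with a reference; checks binding first, then abundance."""
    ref_total, test_total = sum(ref.values()), sum(test.values())
    ref_bound_frac = ref["immobile"] / ref_total
    test_bound_frac = test["immobile"] / test_total
    if test_bound_frac < ref_bound_frac * (1 - tol):
        return "binding defect"
    if test_total < ref_total * (1 - tol):
        return "low abundance"
    return "no clear difference"


if __name__ == "__main__":
    wt = partition(100.0, {"fast": 0.6, "slow": 0.2, "immobile": 0.2})
    low = partition(50.0, {"fast": 0.6, "slow": 0.2, "immobile": 0.2})
    weak = partition(100.0, {"fast": 0.8, "slow": 0.1, "immobile": 0.1})
    for name, cond in (("low protein", low), ("weak binding", weak)):
        print(name, cond["immobile"], likely_cause(wt, cond))
```

Both hypothetical conditions end up with the same bound concentration, yet the comparison attributes them to different causes, which is the distinction a total-intensity measurement alone cannot make.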

Long-Duration Longitudinal Imaging

Extending measurements over long monitoring intervals is a significant advance in the study of genomic interactions. Historically, imaging was limited by phototoxicity, the tendency of intense light to damage or kill the very cells being studied. Modern fluctuation spectroscopy has eased, though not eliminated, these constraints, allowing stable, nucleus-wide views over longer time scales. Such long-term tracking is essential for capturing “bursty” transcription, in which genes switch on and off in rapid, irregular pulses.
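A common way to model bursty transcription is the two-state “telegraph” model, in which a gene switches from off to on at rate k_on and back at rate k_off. The sketch below is a standard Gillespie-style simulation, swapped in for illustration rather than taken from any specific study. It shows why observation windows must be long relative to the switching times: short windows give noisy estimates of how often the gene is on. The rates and window lengths are hypothetical.

```python
# Minimal sketch of the two-state telegraph model of bursty transcription:
# a gene switches OFF -> ON at rate k_on and ON -> OFF at rate k_off.
# ASSUMPTIONS: rates and window lengths are hypothetical; this is a generic
# textbook model chosen for illustration.
import random


def simulate_telegraph(k_on: float, k_off: float, t_end: float, seed: int = 0):
    """Gillespie simulation; returns (start, end, state) intervals, state 1 = ON."""
    rng = random.Random(seed)
    t, state, intervals = 0.0, 0, []
    while t < t_end:
        rate = k_on if state == 0 else k_off
        end = min(t + rng.expovariate(rate), t_end)  # exponential dwell time
        intervals.append((t, end, state))
        t, state = end, 1 - state
    return intervals


def fraction_on(intervals) -> float:
    """Fraction of the observation window that the gene spends ON."""
    total = intervals[-1][1] - intervals[0][0]
    return sum(e - s for s, e, st in intervals if st == 1) / total


if __name__ == "__main__":
    k_on, k_off = 0.02, 0.1              # per second (hypothetical)
    expected = k_on / (k_on + k_off)     # long-run ON fraction
    for window in (60.0, 600.0, 1e6):
        est = fraction_on(simulate_telegraph(k_on, k_off, window, seed=1))
        print(f"window {window:>9.0f} s: ON fraction {est:.3f} (expected {expected:.3f})")
```

Over short windows the estimate can land far from the long-run value k_on/(k_on + k_off), while very long windows converge toward it.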

By capturing dynamics across multiple time scales, the technology provides a more complete view of the nucleus than static imaging allows. It makes it possible to observe how a protein’s behavior changes as an embryo develops or as an immune cell matures. This longitudinal perspective can also reveal a cell’s “memory”: how past interactions with DNA influence future binding events. This depth of data is what enables the transition from simple observation to complex, time-dependent mathematical simulations.

Innovations in Mapping Non-Linear Binding Dynamics

Recent developments in the field have challenged the long-held assumption that protein concentration and DNA binding share a linear relationship. Using fluctuation spectroscopy, researchers have found that doubling the amount of a protein in the nucleus does not necessarily double gene activation. Instead, the relationship is strongly non-linear and often governed by threshold-based activation mechanisms. This suggests that cells can use switch-like (“digital”) logic, in which a gene is either off or on, rather than a graded (“analog”) response, which allows much sharper boundaries to form during tissue development.
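A Hill function is one common way to express threshold-like, cooperative binding. This sketch assumes Hill kinetics with illustrative parameters, not a fitted model of Dorsal or NF-κB, and shows how the same doubling of concentration produces very different changes in occupancy depending on the Hill coefficient `n`.

```python
# Minimal sketch: Hill-type occupancy as one model of threshold-like binding.
# ASSUMPTION: Hill kinetics and the parameter values are illustrative choices,
# not a fitted model of any specific transcription factor.


def hill_occupancy(conc: float, k_half: float, n: float) -> float:
    """Fraction of target sites occupied at concentration conc (same units as k_half)."""
    return conc ** n / (k_half ** n + conc ** n)


def fold_change_on_doubling(conc: float, k_half: float, n: float) -> float:
    """Ratio of occupancy at 2 * conc to occupancy at conc."""
    return hill_occupancy(2 * conc, k_half, n) / hill_occupancy(conc, k_half, n)


if __name__ == "__main__":
    for n in (1, 4):
        fc = fold_change_on_doubling(0.5, 1.0, n)
        print(f"Hill coefficient {n}: doubling 0.5 -> 1.0 changes occupancy {fc:.2f}-fold")
```

With `n = 1` (simple binding), doubling from half the half-saturation constant raises occupancy 1.5-fold, already short of proportional; with `n = 4`, the same doubling raises it 8.5-fold, the switch-like behavior described above.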

Furthermore, mathematical models that account for molecular clustering have changed our understanding of nuclear organization. Rather than floating freely, proteins often gather into dense hubs, or “clusters,” near specific gene loci, which raises their local concentration. Fluctuation spectroscopy is well suited to measuring the exchange rates between these clusters and the surrounding nucleoplasm. This helps explain how a cell can sustain high levels of gene expression even when the overall nuclear concentration of a transcription factor appears low, a phenomenon that had long puzzled developmental biologists.
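The cluster exchange described above can be captured by a minimal two-state model. It assumes first-order exchange with hypothetical rates `k_in` (nucleoplasm to cluster) and `k_out` (cluster to nucleoplasm) and a hypothetical cluster volume fraction; these are the kinds of quantities fluctuation measurements aim to estimate.

```python
# Minimal sketch: two-state exchange between the nucleoplasm and clusters.
# ASSUMPTIONS: first-order exchange with hypothetical rates k_in and k_out
# (per second) and clusters occupying a hypothetical fraction of nuclear volume.
import math


def cluster_steady_state(k_in: float, k_out: float, cluster_volume_fraction: float) -> dict:
    """Steady-state partitioning and timescales for the exchange model."""
    p = k_in / (k_in + k_out)  # fraction of molecules inside clusters
    v = cluster_volume_fraction
    return {
        "fraction_in_clusters": p,
        "cluster_enrichment": p / v,                 # cluster conc / nuclear mean
        "nucleoplasm_level": (1 - p) / (1 - v),      # nucleoplasm conc / nuclear mean
        "mean_residence_s": 1.0 / k_out,             # average time per cluster visit
        "relaxation_time_s": 1.0 / (k_in + k_out),   # time constant to re-equilibrate
    }


def fraction_in_clusters(t: float, k_in: float, k_out: float, p0: float = 0.0) -> float:
    """Time course from dp/dt = k_in * (1 - p) - k_out * p, starting at p0."""
    p_ss = k_in / (k_in + k_out)
    return p_ss + (p0 - p_ss) * math.exp(-(k_in + k_out) * t)


if __name__ == "__main__":
    print(cluster_steady_state(0.1, 0.4, 0.01))
    print([round(fraction_in_clusters(t, 0.1, 0.4), 3) for t in (0, 1, 2, 5, 10)])
```

With `k_in = 0.1/s`, `k_out = 0.4/s`, and clusters filling 1% of the nucleus, only 20% of molecules sit in clusters, yet the local concentration there is 20 times the nuclear mean, which is how a low average level can coexist with strong local activity.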

Real-World Applications in Developmental Biology and Immunology

The practical implementation of this technology has already yielded important insights in the study of the Drosophila Dorsal protein and its human counterpart, NF-κB. In embryonic development, the precise nuclear concentration of Dorsal helps tell one cell to form muscle and another to form skin. By using fluctuation spectroscopy to map these gradients, scientists have been able to estimate the molecular concentrations required for normal development. These measurements provide a benchmark for understanding how small deviations in protein levels or mobility can lead to severe developmental defects.
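How a concentration threshold turns a smooth gradient into a sharp boundary can be sketched with a deliberately simple model. It assumes an exponential profile c(x) = c0·exp(−x/λ) and a single activation threshold; the measured Dorsal gradient need not have this shape, and all numbers are hypothetical.

```python
# Minimal sketch: where a single activation threshold is crossed along a gradient.
# ASSUMPTIONS: exponential profile c(x) = c0 * exp(-x / lam) and one threshold;
# the real Dorsal profile need not have this shape; numbers are hypothetical.
import math


def boundary_position(c0: float, threshold: float, lam: float) -> float:
    """Distance x at which c(x) falls to the threshold: x = lam * ln(c0 / threshold)."""
    if not 0 < threshold < c0:
        raise ValueError("need 0 < threshold < c0")
    return lam * math.log(c0 / threshold)


def boundary_shift(scale: float, lam: float) -> float:
    """Boundary shift when the whole gradient is multiplied by `scale` (negative = toward source)."""
    return lam * math.log(scale)


if __name__ == "__main__":
    x_wt = boundary_position(100.0, 10.0, 20.0)   # c0 and threshold in the same units, lam in um
    x_half = boundary_position(50.0, 10.0, 20.0)  # the same gradient at half dosage
    print(f"boundary: {x_wt:.1f} um; at half dosage: {x_half:.1f} um")
```

With `c0 = 100`, a threshold of 10 (same units), and `λ = 20` µm, the boundary sits about 46 µm from the source; halving the whole gradient shifts it by λ·ln 2 ≈ 13.9 µm toward the source, showing how a modest dosage change can move a tissue boundary.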

In immunology, the technology is being used to dissect the signaling errors that drive chronic inflammatory diseases. Because NF-κB governs the body’s response to infection, its persistent activation is a hallmark of conditions such as rheumatoid arthritis and certain cancers. Fluctuation spectroscopy can help researchers determine why the “off switch” for this protein fails to engage. By identifying whether the problem lies in how quickly the protein finds its targets or in its inability to detach from DNA, researchers can begin to design drugs that target the kinetic behavior of the molecule rather than just its total amount.
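The distinction between finding DNA quickly and failing to let go can be illustrated with simple one-site binding kinetics. This sketch assumes a pseudo-first-order on-rate `k_on_eff` (association rate times free concentration) and an off-rate `k_off`; the values are hypothetical.

```python
# Minimal sketch: separating "how fast a factor finds DNA" from "how long it stays".
# ASSUMPTIONS: simple one-site binding with pseudo-first-order on-rate k_on_eff
# (= k_on * free concentration) and off-rate k_off; all values are hypothetical.


def occupancy(k_on_eff: float, k_off: float) -> float:
    """Steady-state fraction of time a site is bound."""
    return k_on_eff / (k_on_eff + k_off)


def residence_time(k_off: float) -> float:
    """Mean time (s) a molecule stays bound per binding event."""
    return 1.0 / k_off


if __name__ == "__main__":
    cases = {
        "baseline": (0.5, 0.5),
        "fast binder": (5.0, 0.5),   # finds sites 10x faster
        "sticky": (0.5, 0.05),       # leaves sites 10x more slowly
    }
    for name, (kon, koff) in cases.items():
        print(f"{name:12s} occupancy={occupancy(kon, koff):.3f} "
              f"residence={residence_time(koff):.1f} s")
```

A factor that binds ten times faster and one that unbinds ten times more slowly reach the same occupancy of about 0.91, but their residence times differ tenfold, which is why kinetic measurements, not occupancy alone, are needed to locate the defect.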

Technical Hurdles and Modeling Constraints

Despite its power, fluctuation spectroscopy faces significant challenges, particularly the heavy computational demand of processing millions of data points per second. Modeling the stochastic nature of protein-DNA interactions requires substantial processing power and algorithms that can separate meaningful signals from background noise. Moreover, maintaining cell viability during high-intensity imaging remains a delicate balancing act. Although improvements have been made, the risk of altering the cell’s natural behavior through “light stress” is a constant concern for researchers seeking unperturbed measurements.
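One standard way to reduce the computational cost is to compute the autocorrelation with a fast Fourier transform, which scales as O(n log n) instead of the O(n × lags) cost of direct summation. The sketch below uses only the Python standard library, so it is slow in absolute terms; production analysis usually relies on an optimized FFT library or a multiple-tau correlator. The estimator follows the normalized definition G(τ) = ⟨δI(t)δI(t+τ)⟩/⟨I⟩², a direct version is included as a cross-check, and the synthetic trace is hypothetical.

```python
# Minimal sketch: intensity autocorrelation G(tau) via FFT, checked against
# direct summation. Pure standard library; the synthetic trace is hypothetical.
import cmath
import random


def fft(a):
    """Recursive radix-2 FFT; len(a) must be a power of two."""
    n = len(a)
    if n == 1:
        return [complex(a[0])]
    even, odd = fft(a[0::2]), fft(a[1::2])
    out = [0j] * n
    for k in range(n // 2):
        tw = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k], out[k + n // 2] = even[k] + tw, even[k] - tw
    return out


def ifft(a):
    """Inverse FFT via conjugation: ifft(x) = conj(fft(conj(x))) / n."""
    n = len(a)
    return [z.conjugate() / n for z in fft([z.conjugate() for z in a])]


def autocorr_fft(trace, max_lag):
    """G(tau) = <dI(t) dI(t+tau)> / <I>^2 for lags 0..max_lag (max_lag < len(trace))."""
    n = len(trace)
    mean = sum(trace) / n
    d = [x - mean for x in trace]
    size = 1
    while size < 2 * n:  # zero-pad so the circular correlation equals the linear one
        size *= 2
    spec = fft(d + [0.0] * (size - n))
    acov = ifft([z * z.conjugate() for z in spec])  # entry lag = sum_t d[t] * d[t + lag]
    return [acov[lag].real / (n - lag) / mean ** 2 for lag in range(max_lag + 1)]


def autocorr_direct(trace, max_lag):
    """Reference: the same estimator computed by explicit sums."""
    n = len(trace)
    mean = sum(trace) / n
    d = [x - mean for x in trace]
    return [sum(d[t] * d[t + lag] for t in range(n - lag)) / (n - lag) / mean ** 2
            for lag in range(max_lag + 1)]


if __name__ == "__main__":
    rng = random.Random(0)
    trace = [10 + rng.gauss(0, 1) for _ in range(1000)]  # synthetic counts
    fast, slow = autocorr_fft(trace, 20), autocorr_direct(trace, 20)
    print("max difference:", max(abs(a - b) for a, b in zip(fast, slow)))
```

Zero-padding to at least twice the trace length is the key design choice: without it, the FFT computes a circular correlation in which late samples wrap around and contaminate short lags.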

To address these limitations, ongoing development is focused on refining how predictive equations treat the “free amount” of protein, that is, the unbound portion available to bind DNA. The goal is to simplify the math so that it can be used by broader research teams who may not have a background in advanced chemical engineering. There is also a push to reduce hardware complexity, moving away from specialized, custom-built rigs toward more accessible commercial instruments. This democratization is necessary if the technique is to become a standard tool in pharmaceutical development and clinical diagnostics.
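Reading “free amount” as the unbound concentration (an interpretive assumption), a small example shows why it matters. When binding sites are abundant enough to deplete free protein, the common shortcut of treating total protein as free overestimates binding. The sketch assumes a single-site equilibrium P + S ⇌ PS with dissociation constant `kd`; the values are hypothetical.

```python
# Minimal sketch: free (unbound) protein when binding depletes it.
# ASSUMPTIONS: "free amount" read as unbound concentration; single-site
# equilibrium P + S <-> PS with dissociation constant kd; values hypothetical.
import math


def bound_complex(p_total: float, s_total: float, kd: float) -> float:
    """Exact [PS], the physical root of C^2 - (P + S + kd) C + P S = 0."""
    b = p_total + s_total + kd
    return (b - math.sqrt(b * b - 4.0 * p_total * s_total)) / 2.0


def free_protein(p_total: float, s_total: float, kd: float) -> float:
    """Unbound protein remaining at equilibrium."""
    return p_total - bound_complex(p_total, s_total, kd)


def bound_naive(p_total: float, s_total: float, kd: float) -> float:
    """Shortcut that assumes free P equals total P (no depletion)."""
    return s_total * p_total / (kd + p_total)


if __name__ == "__main__":
    print("exact bound:", bound_complex(1.0, 1.0, 1.0))
    print("naive bound:", bound_naive(1.0, 1.0, 1.0))
    print("free protein:", free_protein(1.0, 1.0, 1.0))
```

With total protein, total sites, and `kd` all equal to 1, the exact bound concentration is about 0.38, while the shortcut gives 0.5.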

Future Trajectory of Predictive Therapeutic Modeling

The trajectory of this technology points toward a future of “predictive understanding,” in which the trial-and-error approach to medicine gives way to precise molecular titration. One can envision a doctor analyzing a patient’s specific NF-κB dynamics and prescribing a drug dosage calculated to suppress a tumor without harming healthy immune function. Such precision medicine would rely heavily on the quantitative frameworks established by fluctuation spectroscopy, which provide the “rules of the road” for how these proteins interact with our DNA.

As these tools become more widely available to the scientific community, a global database of protein mobility “signatures” may emerge. Such a resource would allow researchers to compare the kinetic behavior of proteins across species and disease states, accelerating the discovery of new drug targets. The focus will likely shift from merely suppressing or activating a protein to “tuning” its mobility. By subtly altering how fast a protein moves or how long it stays bound to a gene, it may be possible to achieve therapeutic results with fewer side effects than traditional inhibitors.

Assessment of Quantitative Frameworks in Molecular Biology

The evolution of fluctuation spectroscopy has shifted the focus of molecular biology from observing where proteins are to interpreting what they do. By treating the cell as a dynamic physical system, researchers built a quantitative map that begins to bridge the gap between the laboratory and the clinic. The evidence indicated that the relationship between nuclear concentration and DNA binding was not a simple 1:1 ratio but a complex, threshold-driven process. This realization demanded a new generation of models that account for protein clusters and non-linear dynamics, so that therapeutic strategies could be built on a realistic foundation.

Ultimately, the transition toward these quantitative frameworks proved to be a necessary step for precision medicine. The ability to distinguish between the various states of a transcription factor opened the door to finer control over gene expression patterns than was previously thought possible. As these methodologies matured, they supplied the parameters needed to simulate cellular responses to new drugs. The work concluded that by mastering the fluctuations of life at the molecular level, science has moved significantly closer to treating complex diseases with the precision of an engineer.